
Data Science with Python Certification Course


Have queries? Ask us: +1 833 429 8868 (Toll Free)

130,791 Learners | Rated 4.2 (49,300 Ratings)


Live Online Classes starting on 8th May 2026


Instructor-led Mastering Python live online Training Schedule

Flexible batches for you

499

Powered by PayPal

Why enroll for Data Science with Python Certification Course?

Data Science with Python Certification Course Benefits

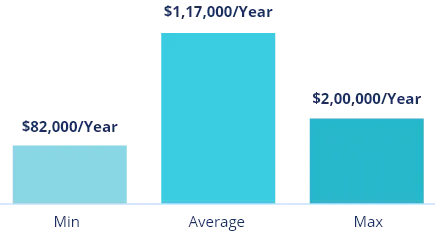

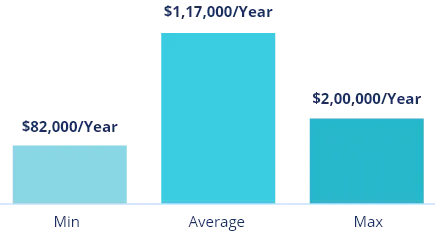

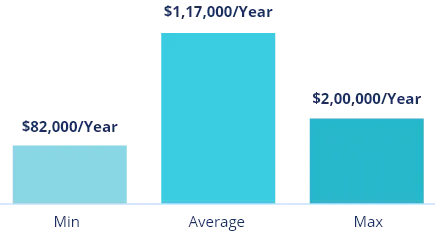

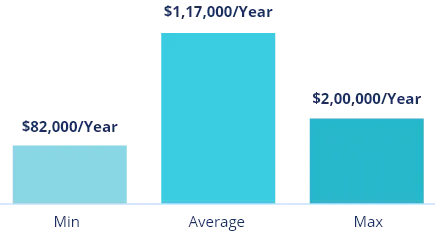

Data Science with Python training covers industry-relevant skills for a fast-growing global job market. The global datasphere is expected to reach 181 zettabytes by 2025, driving demand for Python experts. Data science jobs are predicted to grow by 35–36%, creating 21,000 new positions annually, which makes this training a gateway to high-value, future-proof careers.

Annual Salary

Hiring Companies


Why Data Science with Python Certification Course from Edureka

Live Interactive Learning

- World-Class Instructors

- Expert-Led Mentoring Sessions

- Instant doubt clearing

24x7 Support

- One-On-One Learning Assistance

- Help Desk Support

- Resolve Doubts in Real-time

Hands-On Project Based Learning

- Industry-Relevant Projects

- Course Demo Dataset & Files

- Quizzes & Assignments

Industry Recognised Certification

- Edureka Training Certificate

- Graded Performance Certificate

- Certificate of Completion

About your Data Science with Python Certification Course

Data Science with Python Skills Covered

Data Science with Python Tools Covered

Data Science with Python Course Syllabus

Curriculum Designed by Experts

Introduction to Python for Data Science

10 Topics

Topics

- Python scripting

- Variables & types

- Conditions & loops

- Function basics

- Lambda usage

- Lists & tuples

- Dictionaries

- File reading

- Error handling

- Jupyter setup

Hands-On Experience

- Writing a “Hello World” script

- Manipulating lists and dictionaries

- Reading a CSV file

Skills You Will Learn

- Core Python programming

- Data science environment setup

Working with Python Programming

8 Topics

Topics

- Set operations

- List comprehensions

- Generator functions

- Using modules

- Regex patterns

- Special collections

- Map & filter

- Custom exceptions

Hands-On Experience

- Using regex for data cleaning

- Creating a generator

- Building a custom module

Skills You Will Learn

- Intermediate Python techniques

- Efficient code structures

Advanced Python Programming for Data Science

10 Topics

Topics

- OOP concepts

- Class inheritance

- Context managers

- Unit testing

- API requests

- Code profiling

- Logging basics

- JSON handling

- Project packaging

- Type hints

Hands-On Experience

- Building a preprocessing class

- Fetching API data

- Writing a unit test

Skills You Will Learn

- Advanced Python programming

- Modular code development

Data Analysis with NumPy and Pandas

10 Topics

Topics

- NumPy arrays

- Vector operations

- Math functions

- Series handling

- DataFrames

- Dataset merging

- Missing values

- Pivot tables

- Data summaries

- Memory tuning

Hands-On Experience

- NumPy array calculations

- Cleaning data with Pandas

- Creating a pivot table

Skills You Will Learn

- Data manipulation

- Basic statistical analysis

Data Visualization and Preprocessing Techniques

9 Topics

Topics

- Matplotlib Plotting

- Seaborn Visualization Styles

- Line and Bar Charts

- Histogram Analysis

- Web Scraping Basics

- Missing Data Treatment

- Feature Scaling Techniques

- Encoding Categorical Data

- Data Storytelling Approaches

Hands-On Experience

- Creating Seaborn plots

- Scraping website data

- Normalizing a dataset

Skills You Will Learn

- Data visualization

- Data preprocessing

Statistical Methods for Data Science

10 Topics

Topics

- Descriptive stats

- Variance, standard deviation

- Probability

- Normal distribution

- Hypothesis testing: t-tests

- Correlation: Pearson coefficient

- Outlier detection: z-score

- Sampling: random sampling

- Statistical visualization

- P-values: significance

Hands-On Experience

- Conducting a t-test

- Visualizing correlations

- Detecting outliers

Skills You Will Learn

- Statistical analysis

- Result interpretation
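As a rough illustration of the z-score outlier detection listed among this module's topics, the sketch below flags values that lie far from the mean. It is not taken from the course material: the function name `zscore_outliers`, the threshold of 2.0, and the sample readings are all assumptions made up for this example, and it uses only the Python standard library.

```python
from statistics import mean, pstdev


def zscore_outliers(values, threshold=2.0):
    """Return the values whose absolute z-score exceeds the threshold.

    Hypothetical helper for illustration; the threshold is an assumption.
    """
    mu = mean(values)
    sigma = pstdev(values)  # population standard deviation
    if sigma == 0:
        return []  # all values are identical, so nothing stands out
    return [v for v in values if abs((v - mu) / sigma) > threshold]


# Made-up readings for illustration only
readings = [10, 12, 11, 13, 12, 11, 95]
print(zscore_outliers(readings))
```

A cutoff of 3 is a common convention for larger datasets; the smaller value here is used because a very small sample limits how large a z-score can get.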

Fundamentals of Machine Learning

9 Topics

Topics

- CRISP-DM process

- ML categories

- Python for ML

- ML tools

- Data lifecycle

- Evaluation

- Feature basics

- AI ethics

- Industry insights

Hands-On Experience

- Setting up an ML project

- Exploring a dataset

Skills You Will Learn

- ML workflows

- Industry trends

Supervised Learning – Regression Analysis

10 Topics

Topics

- Linear regression

- Gradient descent

- Polynomial regression

- Ridge regression

- Error metrics

- R-squared

- Cross-validation

- Residual analysis

- Feature selection

- Overfitting mitigation

Hands-On Experience

- Building a linear regression model

- Evaluating with RMSE

Skills You Will Learn

- Regression modeling

- Model evaluation

Supervised Learning – Classification Fundamentals

10 Topics

Topics

- Logistic regression

- Binary labels

- Decision trees

- Confusion matrix

- Precision & recall

- ROC curve

- Overfitting

- Feature ranking

- Model validation

- Class imbalance

Hands-On Experience

- Logistic regression model

- Decision tree visualization

Skills You Will Learn

- Binary classification

- Evaluation metrics

Supervised Learning – Advanced Classification

11 Topics

Topics

- Random forests

- SVM

- XGBoost

- Grid search

- Random search

- SHAP values

- SMOTE

- Model stacking

- Association rules

- Recommendation engines

- Model evaluation

Hands-On Experience

- Random Forest model

- Using SHAP for insights

- Building Apriori rules

Skills You Will Learn

- Advanced classification

- Interpretability, recommendations

Unsupervised Learning and Clustering Techniques

9 Topics

Topics

- K-Means clusters

- Elbow method

- Hierarchical clustering

- DBSCAN logic

- PCA reduction

- Anomaly detection

- Silhouette score

- Segmentation use

- Cluster visuals

Hands-On Experience

- K-Means clustering

- Applying PCA

- Detecting anomalies

Skills You Will Learn

- Unsupervised learning

- Dimensionality reduction

AutoML and No-Code Data Science Solutions

7 Topics

Topics

- AutoML tools

- DataRobot

- KNIME workflows

- H2O.ai models

- Synthetic data

- Rapid prototyping

- AI fairness

Hands-On Experience

- DataRobot model building

- KNIME workflow creation

- Generating synthetic data

Skills You Will Learn

- AutoML prototyping

- No Code workflows

Reinforcement Learning Essentials (Self-paced)

10 Topics

Topics

- Agent-Environment Interaction

- OpenAI Gym Setup

- Markov Decision Process

- Q-Learning Fundamentals

- Exploration-Exploitation Tradeoff

- Epsilon-Greedy Strategy

- Reward Shaping Concepts

- Reinforcement Learning Applications

- Q-Table Implementation

- Reinforcement Learning Limitations

Hands-On Experience

- Q-Learning in a game

- OpenAI Gym experiment

Skills You Will Learn

- RL algorithms

- Practical applications

Time Series Analysis and Forecasting Methods (Self-paced)

10 Topics

Topics

- Time Series Components

- Stationarity Testing (ADF)

- ARIMA Model Parameters

- Forecasting with Prophet

- Forecast Error Metrics

- Backtesting Techniques

- Trend Visualization Methods

- Confidence Interval Analysis

- External Variable Integration

- Model Selection Strategies

Hands-On Experience

- ARIMA model

- Prophet forecasting

- Visualizing trends

Skills You Will Learn

- Time series analysis

- Forecasting

Machine Learning on Cloud Platforms (Self-paced)

8 Topics

Topics

- Cloud ML Introduction

- AWS SageMaker

- Google Cloud AI

- Azure ML

- Cloud storage

- Serverless ML

- Model deployment

- Scalability

Hands-On Experience

- Training a model in SageMaker

- Deploying with Google Cloud AI

- Using S3 for data storage

Skills You Will Learn

- Cloud-based ML

- Scalable model deployment

MLOps Fundamentals (Self-paced)

7 Topics

Topics

- MLOps Introduction

- CI/CD for ML

- Flask API Deployment

- MLflow Model Tracking

- Docker Containerization

- Model Drift Monitoring

- Model Lifecycle Management

Hands-On Experience

- Deploying with Flask

- MLflow pipeline setup

Skills You Will Learn

- MLOps practices

Data Science with Python Course Description

About Data Science with Python Certification Course

Our Data Science with Python Certification Course gives you all the skills you need, from basic Python programming and data processing with NumPy and Pandas to making detailed visualizations with Matplotlib and Seaborn. You will learn how to do statistical analysis, test hypotheses, and preprocess data. Then you will develop and test machine learning models (regression, classification, clustering) using projects that are like real business situations.

You can improve your skills even more by looking into AutoML platforms like H2O.ai and DataRobot.

Why Learn Data Science using Python?

Python is popular for learning data science because of its clean, accessible syntax, which lets you focus on analysis rather than boilerplate code. Its extensive ecosystem includes NumPy for fast array operations, Pandas for DataFrames, Matplotlib/Seaborn for charts, scikit-learn for machine learning, and TensorFlow/PyTorch for deep learning, so there is little need to build anything from scratch.

Python operates on Windows, macOS, and Linux, connects seamlessly with databases, Excel, business intelligence tools, and cloud platforms, and is scalable from simple scripts to production pipelines. Finally, a large, engaged community ensures ongoing library enhancements and an abundance of learning resources.

Why do we need Python for data science?

One of the primary advantages of Python is its straightforward syntax and readability. It reduces the time that data analysts would otherwise spend familiarizing themselves with a programming language.

Why do we need to learn data science?

Data science is a modern field that continues to shape how industries operate. Learning it can help you keep up with trends in your own industry and contribute important insights.

Why learn Machine Learning using Python?

Machine learning is a set of techniques that enables computers to learn desired behavior from data without being explicitly programmed. It draws on techniques and theories from mathematics, statistics, information science, and computer science. This certification training exposes you to different classes of machine learning algorithms, including supervised, unsupervised, and reinforcement learning.

This Data Science with Python Training teaches the necessary skills, such as data pre-processing, dimensionality reduction, and model evaluation, and also exposes you to different machine learning algorithms like regression, clustering, decision trees, random forests, Naive Bayes, and many more.

What are the objectives of our Data Science with Python Training?

The primary goals of our Python-based Data Science training programme are to:

- Enable learners to write clear, efficient code for any data task by giving them a comprehensive understanding of core and advanced Python skills.

- Teach learners to apply NumPy and Pandas to clean, transform, and summarize datasets, and Matplotlib/Seaborn to create charts and dashboards.

- Build and evaluate regression, classification, clustering, and recommendation models with scikit-learn (and deep learning frameworks), and perform hypothesis testing.

- Interpret metrics such as RMSE, F1, and ROC.

- Deploy models on the cloud and implement CI/CD pipelines, containerization (Docker), and model monitoring (MLflow) to ensure production readiness.

- Provide end-to-end data solutions by guiding learners through real-world projects.
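As a rough illustration of two of the metrics named in the objectives above, the sketch below computes RMSE for a regression model and F1 for a binary classifier in plain Python. The helper names `rmse` and `f1` and the sample values are assumptions made up for this example, not part of the course material; in practice a library such as scikit-learn would normally be used, and ROC analysis is left out here for brevity.

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error: square root of the mean squared difference."""
    squared_errors = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


def f1(y_true, y_pred, positive=1):
    """F1 score: harmonic mean of precision and recall for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp == 0:
        return 0.0  # no true positives: precision and recall are both zero
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Made-up values for illustration only
print(rmse([3, 5, 7], [2, 5, 9]))
print(f1([1, 0, 1, 1, 0], [1, 1, 1, 0, 0]))
```

A lower RMSE means predictions sit closer to the actual values, while an F1 closer to 1 means the classifier balances precision and recall well.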

Who should go for this Python Data Science and ML online course?

- Programmers, Developers, Technical Leads, Architects

- Developers aspiring to be a 'Machine Learning Engineer'

- Analytics Managers who are leading a team of analysts

- Business Analysts who want to understand Machine Learning (ML) Techniques

- Information Architects who want to gain expertise in Predictive Analytics

- Professionals who want to design automatic predictive models

What are the prerequisites for this Data Science with Python Training?

The prerequisite for Edureka's Python Data Science and ML course is a fundamental understanding of computer programming languages.

How will I execute the practicals in this online Python course?

You will do your assignments and case studies in Jupyter Notebook, which is pre-installed in your Cloud Lab environment and accessible from a browser. The access credentials are available on your LMS. Should you have any queries, the 24x7 Support Team will promptly assist you.

Data Science with Python Course Projects

Data Science with Python Certification

To unlock Edureka’s Data Science with Python Training course completion certificate, you must:

- Participate fully in Edureka’s Data Science with Python Training Course.

- Complete the listed quizzes and projects and have them evaluated.

Yes, Data Scientist is a good career option for those interested in working with data and extracting insights from it. With the explosive growth of data in recent years, the demand for skilled data scientists has increased significantly. A Data Scientist can work in a variety of industries such as healthcare, finance, marketing, and more. The job typically requires a strong foundation in statistics, machine learning, and programming, as well as a good understanding of the business and its domain. A Data Scientist is responsible for collecting, analyzing, and interpreting large and complex data sets to inform business decisions and strategies. Overall, data science is a challenging and rewarding career option with a promising outlook for the future.

Yes, Machine Learning Engineer is a good career option for those interested in working with machine learning algorithms and implementing them in real-world applications. Machine learning is a rapidly growing field with increasing demand for professionals who can build and deploy machine learning models to automate tasks and extract insights from large amounts of data. As a Machine Learning Engineer, one can work in a variety of industries such as healthcare, finance, e-commerce, and more. The job typically requires a strong foundation in machine learning, programming skills, and a good understanding of software engineering principles. Overall, machine learning engineering can be a challenging and rewarding career option with a promising outlook for the future.

To learn data science and machine learning as a beginner, one can start by learning Python programming and then move on to data analysis. After understanding data analysis, one can learn the basics of machine learning, and apply machine learning algorithms to real-world problems. Edureka’s Data Science with Python Certification Training provides a structured learning experience that helps beginners gain practical experience and develop the skills necessary to become proficient in data science and machine learning.

Data Science with Python Certification provides a strong foundation in data science, machine learning, and Python programming. This certification is valuable for several reasons:

- Demonstrates Mastery of Key Skills: Certification indicates that an individual has a strong understanding of data science concepts, machine learning techniques, and Python programming skills.

- Improves Job Prospects: Data science and machine learning are high-growth industries, and certification can improve job prospects by demonstrating expertise in these areas.

- Increases Earning Potential: Certified data scientists and machine learning engineers often earn higher salaries compared to their non-certified peers.

- Enhances Credibility: Certification is a recognized indicator of expertise and can enhance an individual's credibility in the field.

- Keeps Skills Up-to-Date: Data science and machine learning are constantly evolving fields, and certification requires individuals to stay up-to-date with the latest technologies and techniques.

- Enables Career Advancement: Certification can enable individuals to advance their careers by demonstrating mastery of key skills and increasing their value to their organization.

Data Science with Python Certification can open up various job roles in the field of data science and machine learning. Some of the common job roles available after completing this certification include:

- Data Analyst: A data analyst collects, analyzes, and interprets large datasets to help businesses make informed decisions.

- Machine Learning Engineer: A Machine Learning Engineer is responsible for designing, building, and deploying machine learning models that can automate certain tasks.

- Data Scientist: A Data Scientist is responsible for analyzing and interpreting complex data to extract insights and build predictive models.

- Business Intelligence Analyst: A Business Intelligence Analyst is responsible for analyzing data to provide insights that can help businesses make informed decisions.

- AI Architect: An AI Architect is responsible for designing and implementing AI systems, including machine learning algorithms and neural networks.

- Research Scientist: A Research Scientist is responsible for conducting research and experiments to develop new machine learning algorithms and techniques.

You do not need a coding background to enroll in this Data Science with Python course. The course begins with basic modules in which we cover the fundamentals of Python coding. In fact, you do not need prior knowledge in data science or machine learning either. All relevant topics are a part of this course from scratch.



Data Science with Python Course FAQs

What is the main purpose of using Python?

Python is designed to be a high-level, versatile programming language that is suitable for a diverse array of applications, such as web development, software development, data analysis, and automation. It is powerful for complex tasks and accessible to novices due to its extensive libraries and simple syntax.

How much Python is required for data science?

You don't need to be a Python specialist to work in data science, but you do need to know the basics and the essential libraries.

Is Python enough to become a data scientist?

Python is an important and commonly used language in data science, but it's not enough on its own to make you a data scientist. Python is excellent because it has many libraries, such as Pandas, NumPy, and Scikit-learn, which are essential for working with data, analyzing it, and applying machine learning. However, data science needs a wider range of skills, including statistical concepts, data visualization, communication, and domain expertise.

What is the use of data science?

Data science is the process that extracts useful information and insights from data so that clients may make better decisions and solve problems in numerous domains.

Does data science have scope in the future?

Yes, the future of data science is extremely bright. Data volumes keep growing, and businesses need people who can make decisions based on data. As a result, the field is growing rapidly and the demand for skilled professionals is rising.

What is the role of a data scientist?

A data scientist's job is to examine and interpret complex data in order to find useful information that can help businesses make decisions. They combine computer science, machine learning, and statistical analysis skills to turn raw data into strategies that can be put into action.

What is the cycle of data science?

The data science cycle, or data science lifecycle, is an organized way to solve problems with data. It consists of several repeated stages: defining the problem, gathering and preparing data, exploring and modeling the data, evaluating the model, and sharing the results. The cycle isn't a straight line; it's an iterative process in which new information learned at one stage may send you back to earlier stages.

What are the five steps of the data science process?

Defining the problem, obtaining the data, exploring the data, modeling the data, and communicating the results are the five stages of the data science process.
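The five stages above can be sketched end to end on a toy problem. The example below is only an illustration under stated assumptions: the study-hours data is made up, the helper `fit_line` is a hypothetical name, and a simple least-squares line stands in for the modeling stage. It uses only the Python standard library.

```python
from statistics import mean

# 1. Define the problem: estimate an exam score from hours studied.

# 2. Obtain the data (made-up values for illustration only).
hours = [1, 2, 3, 4, 5]
scores = [52, 54, 56, 58, 60]

# 3. Explore the data with simple summaries.
print("mean hours:", mean(hours), "mean score:", mean(scores))


# 4. Model the data with an ordinary least-squares line (hypothetical helper).
def fit_line(xs, ys):
    """Return (slope, intercept) of the least-squares line through the points."""
    x_bar, y_bar = mean(xs), mean(ys)
    covariance = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    variance = sum((x - x_bar) ** 2 for x in xs)
    slope = covariance / variance
    return slope, y_bar - slope * x_bar


slope, intercept = fit_line(hours, scores)

# 5. Communicate the result in plain language.
print(f"Each extra hour of study adds about {slope:.1f} points.")
print(f"Predicted score after 6 hours: {intercept + slope * 6:.1f}")
```

On real projects each stage is far larger, and findings at the modeling or communication stage often send you back to collect or clean more data.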

Have more questions?

Course counsellors are available 24x7
