In this blog post, we will see how to build Spark for a specific Hadoop version.

We will also learn how to build Spark with Hive and YARN support.

This walkthrough assumes that Hadoop, the JDK, Maven (mvn) and git are already installed and configured on your system.
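A quick way to confirm these prerequisites is to check that each tool is on your PATH. The sketch below assumes the tools are invoked as `hadoop`, `java`, `mvn` and `git`; the helper name `missing_tools` is made up for this post and is not part of Spark or Hadoop.

```shell
# Print the name of every tool that is NOT found on PATH.
# missing_tools is a hypothetical helper written for this post.
missing_tools() {
  local tool
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || echo "$tool"
  done
  return 0
}

# Assumed command names for the prerequisites listed above.
missing=$(missing_tools hadoop java mvn git)
if [ -n "$missing" ]; then
  echo "Not found on PATH:" $missing
else
  echo "All prerequisites found."
fi
```

If anything is reported as not found, install or configure it before continuing with the build.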

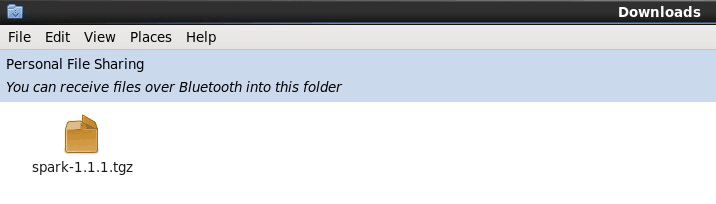

Open a web browser (Mozilla Firefox is used here) and download Spark using the link below.

https://edureka.wistia.com/medias/k14eamzaza/

Open a terminal and extract the downloaded archive.

Command: tar -xvf Downloads/spark-1.1.1.tgz

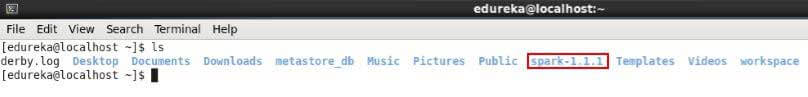

Command: ls

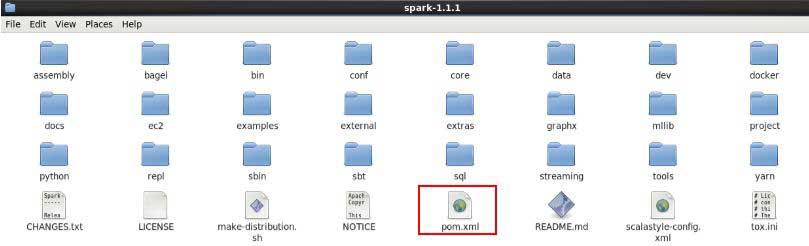

Open the spark-1.1.1 directory.

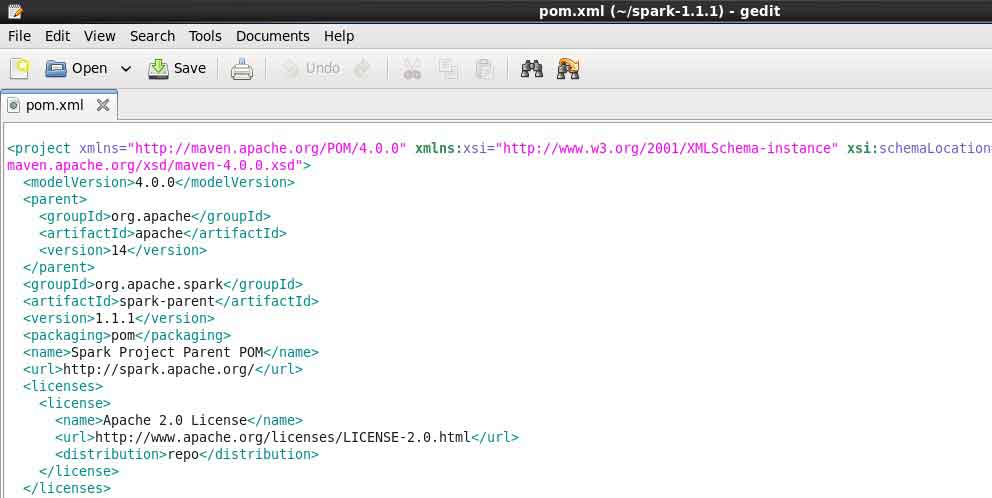

You can open the pom.xml file. It lists all the dependencies the build needs.

To stay out of trouble, do not edit it.

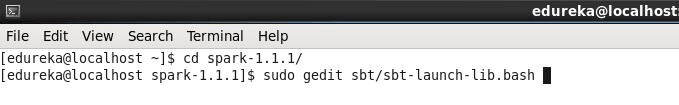

Command: cd spark-1.1.1/

Command: sudo gedit sbt/sbt-launch-lib.bash

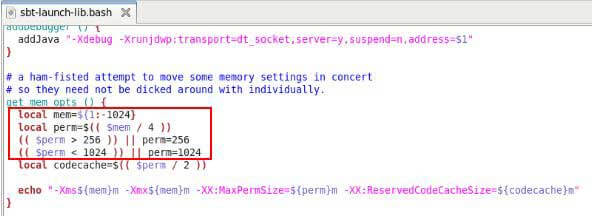

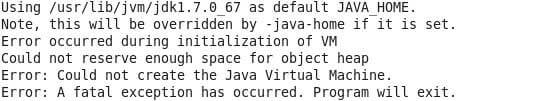

Edit the file as shown in the snapshot below, then save and close it.

We are reducing the memory setting to avoid the object heap space error shown in the snapshot below.
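The snapshot shows the actual edit. To illustrate why a lower value helps: if the JVM is asked for more heap than the machine can provide, it fails at startup. Here is a rough sketch of how a launcher script can turn one base heap size into JVM flags. The function name `mem_opts`, the ratio and the 1024 default are assumptions for illustration only, so check them against your own copy of sbt/sbt-launch-lib.bash.

```shell
# Sketch: derive JVM memory flags from one base heap size in MB.
# mem_opts and its defaults are illustrative assumptions, not Spark's code.
mem_opts() {
  local mem="${1:-1024}"           # base heap size in MB (assumed default)
  local perm=$(( $mem / 4 ))       # PermGen sized as a quarter of the heap
  [ "$perm" -ge 256 ] || perm=256  # keep a floor of 256 MB for PermGen
  echo "-Xms${mem}m -Xmx${mem}m -XX:MaxPermSize=${perm}m"
}

mem_opts 2048   # a larger heap request
mem_opts 512    # a smaller request that suits machines with less RAM
```

Because every flag is derived from the single base value, lowering that one number shrinks the whole memory request.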

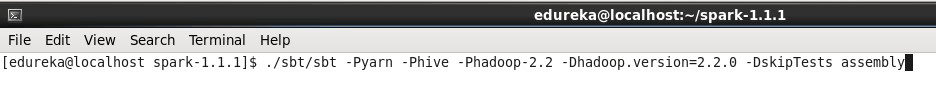

Now run the command below in the terminal to build Spark for Hadoop 2.2.0 with Hive and YARN.

Command: ./sbt/sbt -Pyarn -Phive -Phadoop-2.2 -Dhadoop.version=2.2.0 -DskipTests assembly

Note: My Hadoop version is 2.2.0; change it to match your Hadoop version.

For other Hadoop versions, use these flags instead:

Apache Hadoop 2.0.5-alpha: -Dhadoop.version=2.0.5-alpha

Cloudera CDH 4.2.0: -Dhadoop.version=2.0.0-cdh4.2.0

Apache Hadoop 0.23.x: -Phadoop-0.23 -Dhadoop.version=0.23.7

Apache Hadoop 2.3.x: -Phadoop-2.3 -Dhadoop.version=2.3.0

Apache Hadoop 2.4.x: -Phadoop-2.4 -Dhadoop.version=2.4.0
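If you switch between Hadoop versions often, the flags listed above can be picked by a small shell function. This is only a sketch: `hadoop_build_flags` is a hypothetical helper written for this post, and it covers just the versions mentioned here. It prints the build command rather than running it.

```shell
# Map a Hadoop version to the flags listed above.
# hadoop_build_flags is a hypothetical helper, not part of Spark.
hadoop_build_flags() {
  local version="$1"
  local profile=""
  case "$version" in
    0.23.*) profile="-Phadoop-0.23" ;;
    2.2.*)  profile="-Phadoop-2.2" ;;
    2.3.*)  profile="-Phadoop-2.3" ;;
    2.4.*)  profile="-Phadoop-2.4" ;;
    *)      profile="" ;;  # e.g. 2.0.5-alpha, 2.0.0-cdh4.2.0: no profile listed
  esac
  # Emit the profile (if any) followed by the version property.
  printf '%s\n' "${profile:+$profile }-Dhadoop.version=$version"
}

# Print the full build command instead of running it.
echo "./sbt/sbt -Pyarn -Phive $(hadoop_build_flags 2.2.0) -DskipTests assembly"
```

For 2.2.0 this prints the same command used in this post; pass a different version to see the matching flags.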

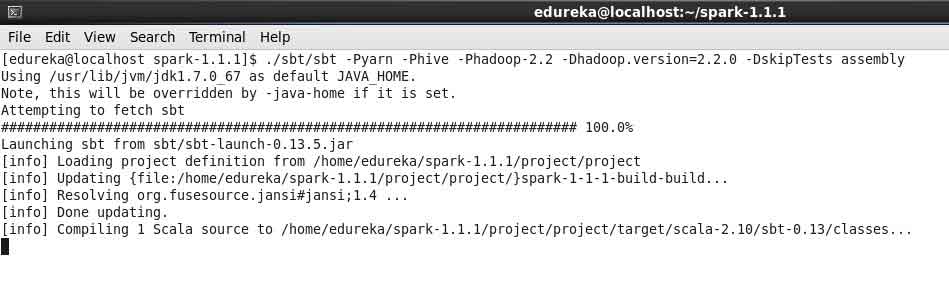

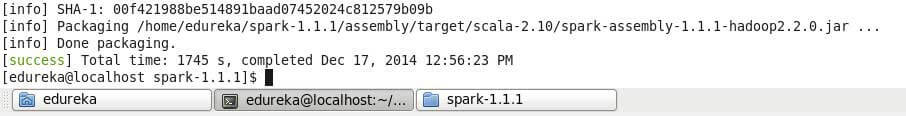

Compiling and packaging will take some time; please wait until it completes.

Two jars, spark-assembly-1.1.1-hadoop2.2.0.jar and spark-examples-1.1.1-hadoop2.2.0.jar, are created.

Path of spark-assembly-1.1.1-hadoop2.2.0.jar : /home/edureka/spark-1.1.1/assembly/target/scala-2.10/spark-assembly-1.1.1-hadoop2.2.0.jar

Path of spark-examples-1.1.1-hadoop2.2.0.jar : /home/edureka/spark-1.1.1/examples/target/scala-2.10/spark-examples-1.1.1-hadoop2.2.0.jar
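To confirm that both jars were produced, you can check for them from the terminal. The sketch below assumes Spark was extracted to `$HOME/spark-1.1.1` and follows the directory layout of the paths above; `check_jars` is a hypothetical helper written for this post.

```shell
# Report whether each expected jar exists under a Spark source directory.
# check_jars is a hypothetical helper; paths follow the layout shown above.
check_jars() {
  local spark_home="$1"
  local spark_version="$2"
  local hadoop_version="$3"
  local suffix="${spark_version}-hadoop${hadoop_version}.jar"
  local status=0
  local jar
  for jar in \
    "$spark_home/assembly/target/scala-2.10/spark-assembly-$suffix" \
    "$spark_home/examples/target/scala-2.10/spark-examples-$suffix"; do
    if [ -f "$jar" ]; then
      echo "found: $jar"
    else
      echo "missing: $jar"
      status=1
    fi
  done
  return $status
}

# Assumes Spark was extracted to $HOME/spark-1.1.1, as in this walkthrough.
check_jars "$HOME/spark-1.1.1" 1.1.1 2.2.0 || echo "Build output incomplete."
```

If you built for a different Hadoop version, pass that version as the third argument, since it is part of the jar file names.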

Congratulations, you have successfully built Spark with Hive and YARN support.

Got a question for us? Please mention it in the comments section and we will get back to you.
