Informatica Capabilities As An ETL Tool

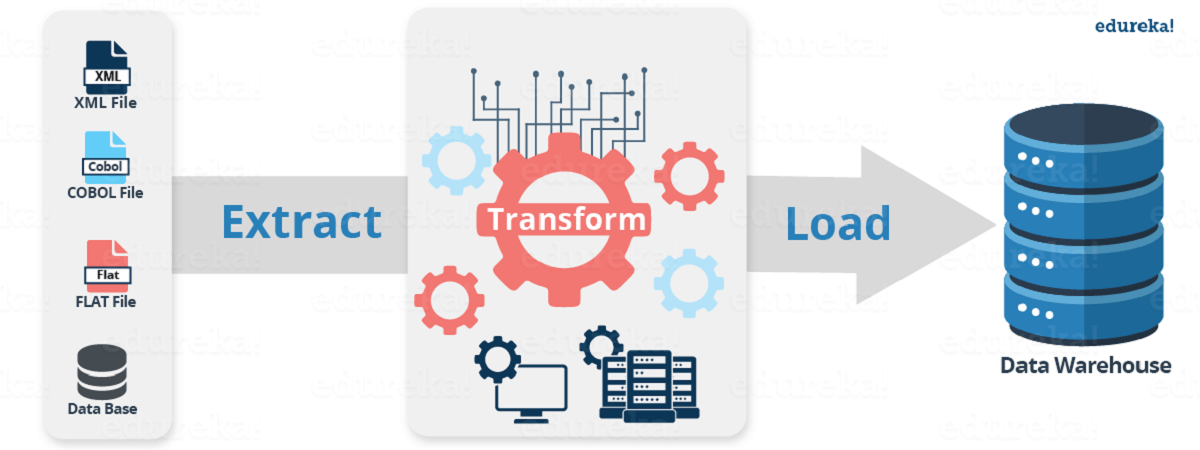

Informatica is one of the most popular ETL tools in the market today. These days Informatica is also being used as an Integration tool. Informatica is known for its ease of use and has a simple visual interface like forms in Visual Basic. Informatica has the ability to communicate with all major data sources like Mainframe, RDBMS, Flat Files, XML, SAP etc., and can move or transform data between them. Thereby moving huge volumes of data effectively.

- Understand Informatica and Informatica product suite

- Understand Informatica PowerCenter Designer

- Work with PowerCenter Workflow Manager

Implement Aggregation and Sorting in Informatica

Common challenges in data integration:

As the field of data integration progresses, the common challenges and inhibitions can be easily identified. The following are some of the important data integration issues that one needs to address when embarking on an integration implementation:

Rising complexity of data

- Dirty data

- Increasing business demands

Cost effective and high standard enterprise data integration

Before we start dissecting how the Designer and Workflow Manager works, let us have a quick introduction to Informatica and its history.

Some facts and figures about Informatica Corporation:

Informatica Corp. provides data integration software and services for various businesses, industries and government organizations including telecommunication, health care, financial and insurance services.

Founded: 1993

2015 Revenue : $1.06 billion

Seven-year Annual CAGR: 30% per year

Employees: 5,500+

Partners: 450+

Customers: Over 5,000 spread across in 82 countries with direct presence in 28 countries

# 1 in Customer Loyalty Rankings (Seven Years in a Row)

Informatica products and their functionalities:

PowerCenter – Fully integrated end-to-end data integration platform, Informatica PowerCenter Enterprise converts raw data into information to drive analysis, daily operations, and data governance initiatives.

Information Lifecycle Management – Informatica’s Information Lifecycle Management software empowers your IT organizations to cost-effectively handle data growth, safely retire legacy systems and applications, optimize test data management and protect sensitive data.

Complex Event Processing – Informatica RulePoint is a complex event processing software that delivers robust and effective complex event processing with real-time alerts and insights into pertinent information to operate in a smarter, faster, efficient and competitive way.

Data Masking – Informatica Data Masking products dynamically mask sensitive production data from unauthorized access, permanently and irreversibly mask nonproduction data thereby helping IT organizations to comply with data privacy regulations, organization-wide data privacy mandates and reduce the risk of a data breach.

Data Quality – Informatica Data Quality provides clean, high-quality data regardless of size, data format, platform, or technology to the business. Helps in validating and improving address information, profiling, and cleansing business data, or implementing a data governance practice and ensuring that the data quality requirements are met.

Data Replication – Informatica Data Replication is database-agnostic, real-time transaction replication software that’s highly scalable, reliable, and non-disruptive to the performance of operational source systems.

Data Virtualization – Informatica Data Services provides a single scalable architecture for both data integration and data federation, creating a data virtualization layer that hides and handles the complexity of accessing underlying data sources – all while insulating them from change.

Master Data Management – The Informatica Master Data Management (MDM) product family delivers consolidated and reliable business-critical data—also known as master data—to the applications that employees rely on everyday.

Ultra Messaging – Informatica Ultra Messaging is a family of next-generation, low-latency messaging middleware products. With very high throughput and 24×7 reliability, they deliver extremely low-latency application messaging over both network-based and shared-memory (inter-process) based transports.

Introduction to PowerCenter:

PowerCenter is a single, unified enterprise data integration platform that allows companies and government organizations of all sizes to access, discover and integrate data from virtually any business system, in any format and deliver that data throughout the enterprise at any speed.

As an ETL tool, whose main advantages of PowerCenter over other ETL tools lies in its robustness, as it can be used in both Windows and Unix based systems. PowerCenter can read from a variety of different sources and write to as many targets while transforming data in between.

The other advantage of PowerCenter when compared to other ETL tools is that it is a high performing yet very simple tool for developing, maintaining and administering.

PowerCenter version history:

The current version of PowerCenter is Informatica PowerCenter 9.6.1 HF2 (as of Feb ’15). From version 9.x onwards, PowerCenter has become service-oriented, with each server component being identified as a service. (Repository service, Integration service etc).

The previous versions of Informatica are neither in use nor under the support of Informatica.

PowerCenter architecture – Single unified architecture:

Overview of PowerCenter Architecture:

The PowerCenter tool consists of the following components:

- Client

- Server

Here’s a list of Client Components of PowerCenter:

- PowerCenter Repository Manager

- PowerCenter Designer

- PowerCenter Workflow Manager

- PowerCenter Workflow Monitor

- PowerCenter Administration Console (browser-based)

Designer overview:

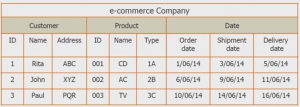

The PowerCenter Designer is the client where we specify how to move the data between various sources and targets. This is where we interpret the various business requirements by using different PowerCenter components called transformations, and pass the data through them (transformations). The Designer is used to create source definitions, target definitions, and transformations, that can be further utilized for developing mappings.

Designer tools:

The Designer provides the following tools:

- Source Analyzer – Import or create source definitions for flat files, XML, COBOL, Application, and relational sources.

- Target Designer – Import or create target definitions.

- Transformation Developer – Create reusable transformations.

- Mapplet Designer – Create mapplets.

- Mapping Designer – Create mappings.

Workflow Manager:

The Workflow Manager is the PowerCenter application that enables designers to build and run Workflows. It can be launched from Designer by clicking on the ‘W’ icon. It can be opened independently from the path shown below:

Start > All Programs > Informatica PowerCenter 9.5.1 > Client > PowerCenter Client > PowerCenter Workflow Manager

The Workflow Designer is the tool necessary for creating Workflow objects. The Workflow Manager displays the following windows to help you create and organize workflows:

Navigator – You can connect to and work in multiple repositories and folders. In the Navigator, the Workflow Manager displays a red icon over invalid objects.

- Workspace – You can create, edit, and view tasks, workflows, and Worklets.

- Output – Contains tabs to display different types of output messages.

The Workflow Manager also displays a status bar that shows the status of the operation you perform.

Workflow Manager tools:

The following are the various tools that come under Workflow Manager:

- Workflow Designer – Maps the execution order and dependencies of sessions, Tasks and Worklets, for the Informatica Server

- Task Developer – Create Session, Shell Command and Email Tasks and the Tasks created in the Task Developer are reusable.

- Worklet Designer – Creates objects that represent a set of Tasks and the Worklet objects are reusable.

Workflow structure:

A Workflow is a set of instructions for the Integration Service to perform data transformation and load. It combines the logic of Session Tasks, other types of Tasks and Worklets. The simplest Workflow is composed of a Start Task, a Link and one other Task

The two additional components are Worklets and Links. Worklets are objects that contain a series of Tasks and Links are required to connect objects in a Workflow.

Scheduling a Workflow:

In order to schedule a workflow, the workflow has to be opened in the Workflow Designer. Once done, the following steps have to be followed:

Click on Workflows > Edit and select “Scheduler” tab.

In the scheduler tab, select ‘Non-reusable’ to create a non-reusable set of scheduler settings for the workflow. Or select ‘Reusable’ to use an existing reusable scheduler for the Workflow.

For ‘Non-reusable’ scheduling, click on the right side of the scheduler field to edit the scheduling settings. And for ‘Reusable’ scheduling, choose a reusable scheduler from the scheduler browser dialog box.

PowerCenter Workflow Monitor:

The Workflow Monitor is the PowerCenter tool which is used to monitor the execution of workflows and tasks. The Workflow Monitor can be used to the following:

View details about a workflow or task run in Gantt chart view or task view.

Run, stop, abort, and resume Workflows or Tasks.

- Displaying Workflows that have run at least once.

Fetching information from the repository to display historic information.

Opening Workflow Monitor:

To open the Workflow Monitor, do the following:

Start>All Programs>lnformatica PowerCenter 9.5.1>Client>PowerCenter Client > PowerCenter Workflow Monitor

The Monitor can also be opened from the Workflow Manager Navigator. The Workflow Manager can be configured to open the Workflow Monitor when a workflow is run from the Workflow Manager.

From Tools > Workflow Monitor in the Designer, Workflow Manager, or Repository Manager. Or, from the Workflow Monitor icon on the Tools toolbar.

Monitoring workflows:

The following are the initial steps to monitor workflows:

- Open the Workflow Monitor.

Connect to the repository containing the Workflow.

- Connect to the Integration Service.

- Select the workflow to be monitored.

- Select Gantt Chart view or Task view.

The Workflow Monitor display can be customized by configuring the maximum days / workflow runs the Workflow Monitor displays. There is also an option to filter Task and Integration Services in both Gantt chart view as well as Task view.

Aggregator:

The Aggregator calculates aggregates such as sums, averages, minimums and maximums, across multiple groups of rows. The aggregator transformation, unlike the Expression transformation, can be used to perform calculations on groups.

Aggregator also enables calculation of gross profits or margins, summaries by period, average values, etc.

Sorter:

Sorter transformation is an active and connected transformation. It sorts incoming data based on one or more key values and the sort order may be ascending, descending, or mixed. The Sorter transformation is often more efficient than a sort performed on a database with an ORDER BY clause. It can also sort data from relational or flat file sources.

The Sorter transformation can be used to sort data passing through an Aggregator transformation configured to use sorted input. It can be configured for case-sensitive sorting, and specify whether the output rows should be distinct.

Webinar presentation:

Why is Informatica dominant?

Features like user-friendly and combined transformations make it stand apart from the competitors. It remains popular due to the unique features offered by Informatica.

Is it compulsory to work only with SQL server databases?

No! Other databases like Oracle can also be used.

What is an active and passive transformation?

An active transformation is the one that changes the number of rows between transformation input and output, changes the transaction boundary by defining commit or rollback points, changes the row type, etc.

Passive transformation is the one which does not change the number of rows that pass through it.

What improves the performance of Aggregator Transformation?

Aggregator performance improves when the records are sorted before passing to the aggregator and ‘sorted input’ option under aggregator properties is selected.

When does Sorted Input in Aggregator fails?

If the input data is not sorted correctly, the session will fail. Even if the input data is properly sorted, the session may fail if the sort order by ports and the group by ports of the aggregator are not in the same order.

Gain a deep understanding of the industry-leading data integration and management platform through our interactive and hands-on training program. Join our Informatica Course and unlock a world of opportunities.

Got a question for us? Please mention them in the comments section and we will get back to you.

Related Posts:

Related Posts: