Does your organization manage its data on a mainframe, and are you a mainframe professional? If so, be ready for the elephant in the room! Your organization, like many others, might soon offload its mainframe batch workloads to Hadoop. If that happens, you, as a mainframe professional, must be Hadoop-ready too.

Let us quickly look at why it makes sense for a mainframe professional to prepare for this move with a Hadoop certification.

Being Proactive Can Bring You More Responsibility After the Shift

Due to recent advances in computing, many batch-oriented core business workloads that run on mainframes are moving to modern platforms. The idea behind a mainframe transition is to adapt flexibly to changing business needs. Earlier, the data we captured was structured and quite simple: sales data, purchase orders, and other standard enterprise data. Now, big data brings far more unstructured information, such as text, documents, and images, and this is a challenge for enterprise systems. The mainframe lives in a world of structured data, where handling a high volume of unstructured data is time-consuming and expensive. Fortunately, Hadoop, an open-source platform, appears to be a viable alternative to the mainframe for handling the volume and variety of data that businesses generate. Being open source makes Hadoop cost-effective and easy to adopt. More than 150 enterprises are already using this open-source big data management system, and the rest are in a rush to join in. So, if you know Hadoop before your organization adopts it, you are ready to take on a new role and more responsibility.

Imagine that your organization has recently moved its data management to Hadoop. After this transition, it will need a workforce with Hadoop knowledge and skills. If you have acquired a working knowledge of big data and Hadoop beforehand, your value to the organization increases many times over.

Other important reasons why moving to Hadoop can be an advantage for a mainframe professional are:

- As we have seen, the main reason many organizations are moving to Hadoop is the mainframe's difficulty in handling growing enterprise workloads. Hadoop, by contrast, handles these workloads, eases the strain on existing systems, and, above all, reduces cost.

- Hadoop can handle complex business logic. Because you already know how such logic is implemented on the mainframe, you will become productive with it more quickly.

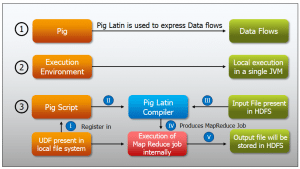

- As data volumes grow, working only with mainframes can make it harder to meet service level agreements. If you know Hadoop and its ecosystem tools, such as Pig, Hive, Sqoop, and HBase, you will be better equipped to handle high volumes and velocities of data under different conditions.

- Generally, mainframes take longer to process large data sets in batch, which delays reports and their analysis. With Hadoop in place, batch processing becomes simpler.

- Once you have mastered the mainframe, learning Hadoop should be relatively easy, as its code tends to be short and simple.
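To make the batch-processing point concrete, here is a minimal sketch of the map, shuffle, and reduce pattern that underlies Hadoop's MapReduce model. It is plain Python that only imitates the flow on a single machine; it does not use any Hadoop API. The record layout (region, product, amount) and all function names are assumptions made up for illustration. Mainframe readers may recognize the shape: it is roughly a sort followed by a control-break summary.

```python
from collections import defaultdict

# Hypothetical comma-separated sales records: region, product, amount.
RECORDS = [
    "NORTH,WIDGET,120.50",
    "SOUTH,GADGET,75.00",
    "NORTH,GADGET,30.25",
    "SOUTH,WIDGET,10.00",
]


def map_record(line):
    """Map step: turn one input record into a (key, value) pair."""
    region, _product, amount = line.split(",")
    return region, float(amount)


def shuffle(pairs):
    """Shuffle step: group all values that share a key.

    In Hadoop the framework does this between the map and reduce phases;
    here it is simulated with an in-memory dictionary.
    """
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_group(key, values):
    """Reduce step: collapse the values for one key into a single total."""
    return key, sum(values)


def run_job(lines):
    """Run the three steps in order and return totals keyed by region."""
    pairs = [map_record(line) for line in lines]
    groups = shuffle(pairs)
    return dict(reduce_group(key, values) for key, values in sorted(groups.items()))


if __name__ == "__main__":
    for region, total in run_job(RECORDS).items():
        print(f"{region}: {total:.2f}")
```

On a real cluster, the map and reduce functions would run in parallel across many machines and the framework would handle the grouping, but the logic a developer writes has this same outline.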

Many IT professionals predict that Hadoop will be the future of data management. It is not only IT companies: other industries, such as retail, food manufacturing, consulting, e-learning, financial services, online travel, and insurance, are also moving their data management from mainframes to big data and Hadoop. As a result, Hadoop has become an emerging skill that is in great demand.

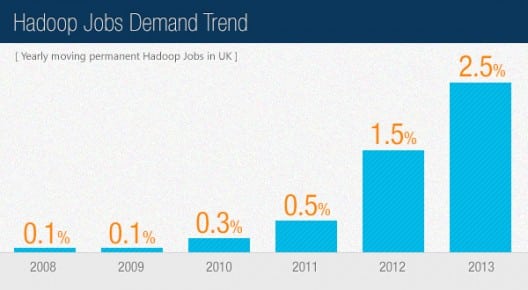

Huge Demand for Big Data Professionals

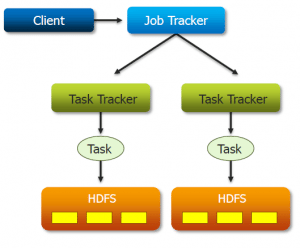

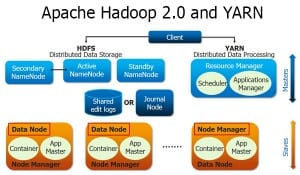

The growing enterprise interest in Hadoop and its related technologies is driving huge demand for professionals with big data skills. In other words, big data is creating big career opportunities for mainframe professionals. Organizations that are migrating to Hadoop are looking for people with knowledge and experience of Hadoop and related tools such as MapReduce and R. Mainframe professionals who move into the big data space with a Hadoop skill set therefore have a great career ahead.

According to Alice Hill, Managing Director of Dice.com, “Postings for Hadoop jobs are up 64 percent from a year ago, and Hadoop is the leader in the big data category for job postings.”

Learning or using Hadoop requires a certain level of analytical expertise. Hadoop training, such as the courses available in Pune, can make this easier. With mainframe knowledge as your base, learning Hadoop will make you more efficient and better able to deal with different and changing technologies. As a techie, I am sure you enjoy exploring and building new things, and right now big data and data analytics are gaining a lot of momentum and look set to grow further. So, if you have knowledge of Hadoop, it will greatly benefit your career.

So, why shouldn’t IT professionals move from mainframe to big data and Hadoop, when there is so much to gain?

Got a question for us? Please mention it in the comments section and we will get back to you.

I have 10 years of mainframe experience. I have worked in different roles and with technologies like Assembler, COBOL, CICS, DB2, VSAM, etc. What advantages do I have if I decide to change to Hadoop? Will my previous mainframe experience help me transition to Hadoop faster than non-mainframers? What roles should I expect once I am working with Hadoop?

Thanks!

Nadia

I have similar experience and a similar question. Can someone answer this?

Hello Vinay! Please list your questions and our in-house tech experts will be able to answer them for you.

Hi,

I am Shruti. I have 3.5 years of experience in mainframes and currently work at an MNC, but I don't find my job exciting or challenging, so I am planning to switch technology. If I do a Hadoop course, will I be able to get a job that takes my previous mainframe experience into account, or will I be recruited as a fresher? And are there any fresher jobs in Hadoop?

Hi Shruti, I have had the same doubt, but after reading various journals, I feel you should search for jobs that involve migrating from mainframes to big data.

Hi Arjuna, I have the same question that Shruti asked, but there seem to be few openings for migrating from mainframes to big data.

Hi Mahesh,

We understand that you have a few specific queries for us, and would recommend that you get in touch with us for further clarification by contacting our sales team on +91-8880862004 (India) or 1800 275 9730 (US toll free). You can mail us on sales@edureka.co.

Hi Shruti, I am stuck in the same dilemma as you. Could you please share your experience? Have you switched your technology?

Great Gaurav. It gives awareness about Big Data.

Thanks Amit for dropping by. You can get data from http://www.indeed.com/jobtrends/Hadoop.html

That’s a great article, Gaurav. But the facts show data for the UK. Is there any information for Asian countries, like India?