For a long time, relational databases were enough to handle small and medium-sized datasets. But the colossal rate at which data is growing makes the traditional approach to data storage and retrieval infeasible. Newer technologies built to handle Big Data are solving this problem. Hadoop, Hive and HBase are popular platforms for working with data sets of this size. NoSQL (Not Only SQL) databases such as MongoDB® provide a mechanism to store and retrieve data under a looser consistency model, with advantages like:

The MongoDB® engineering team has recently updated the MongoDB® Connector for Hadoop to improve integration between the two. This makes it easier for Hadoop users to:

Let’s look at a high-level description of how MongoDB® and Hadoop can fit together in a typical Big Data stack. Primarily we have:

Read on to learn why MongoDB® is a strong fit for Big Data processing and how it has been used by companies and organizations such as Aadhaar, Shutterfly, MetLife and eBay.

In most scenarios, the built-in aggregation functionality provided by MongoDB® is sufficient for analyzing data. In certain cases, however, significantly more complex data aggregation may be necessary. This is where Hadoop can provide a powerful framework for complex analytics.

In this scenario:

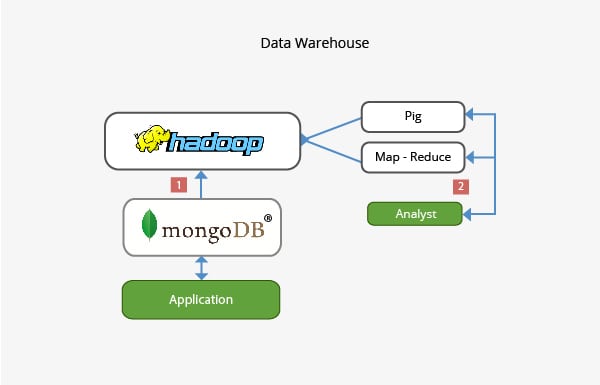
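For the simpler cases mentioned above, where MongoDB®'s built-in aggregation is enough, a pipeline might look roughly like the sketch below. It assumes a hypothetical collection of order documents with `customer`, `status` and `amount` fields. Those names, and the `top_customers` helper, are illustrative and not taken from any real schema. The function accepts any object that exposes an `aggregate(pipeline)` method, as a PyMongo collection does.

```python
# A minimal sketch of a MongoDB aggregation pipeline, written as plain Python data.
# Assumption: documents look like {"customer": str, "status": str, "amount": number}.
PIPELINE = [
    # Keep only the orders that have shipped.
    {"$match": {"status": "shipped"}},
    # Sum the order amounts per customer.
    {"$group": {"_id": "$customer", "total": {"$sum": "$amount"}}},
    # Largest totals first.
    {"$sort": {"total": -1}},
]


def top_customers(collection, limit=5):
    """Run the pipeline on a PyMongo-style collection and return the top rows.

    `collection` is any object with an `aggregate(pipeline)` method; the
    `limit` parameter is a hypothetical convenience, not part of MongoDB.
    """
    stages = PIPELINE + [{"$limit": limit}]
    return list(collection.aggregate(stages))


# Hypothetical usage with PyMongo (requires a running MongoDB server):
#   from pymongo import MongoClient
#   orders = MongoClient()["shop"]["orders"]
#   print(top_customers(orders, limit=3))
```

When an analysis outgrows what a pipeline like this can express comfortably, that is the point at which handing the data to Hadoop becomes worth considering.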

In a typical production setup, an application's data may reside in multiple data stores, each with its own query language and functionality. To reduce complexity in these scenarios, Hadoop can be used as a data warehouse, acting as a centralized repository for data from the various sources.

In this kind of scenario:

The team behind MongoDB® has ensured that, through its rich integration with Big Data technologies like Hadoop, it fits well into the Big Data stack and helps solve some complex architectural issues in data storage, retrieval, processing, aggregation and warehousing. Stay tuned for our upcoming post on career prospects for those who take up Hadoop with MongoDB®. If you are already working with Hadoop or just picking up MongoDB®, do check out the courses we offer for MongoDB®.

