In this blog, we discuss the map-side join and its advantages over the normal join operation in Hive. This is an important concept that you’ll need to learn to implement your Big Data Hadoop Certification projects. Before getting to it, we should first understand the concept of ‘join’ and what happens internally when we perform a join in Hive.

Join is a clause that combines the records of two tables (or Data-Sets).

Assume that we have two tables, A and B. When we perform a join operation on them, it returns records that combine the columns of A and B for the rows that match the join condition.

Now let us understand how a normal join works with an example.
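To make the idea concrete, here is a minimal Python sketch of an inner join on two tiny in-memory tables. The rows, column names, and the `inner_join` helper are made up for illustration and are not part of Hive.

```python
# Hypothetical sample rows; the column names are assumptions for illustration.
table_a = [
    {"id": 1, "name": "Asha"},
    {"id": 2, "name": "Ravi"},
    {"id": 3, "name": "Meena"},
]
table_b = [
    {"id": 1, "city": "Pune"},
    {"id": 2, "city": "Delhi"},
]


def inner_join(left, right, key):
    """Return one merged row for every pair of rows that share the same key."""
    joined = []
    for l_row in left:
        for r_row in right:
            if l_row[key] == r_row[key]:
                # The merged row carries the columns of both tables.
                joined.append({**l_row, **r_row})
    return joined


result = inner_join(table_a, table_b, "id")
for row in result:
    print(row)
```

The row with `id` 3 has no partner in `table_b`, so it does not appear in the result.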

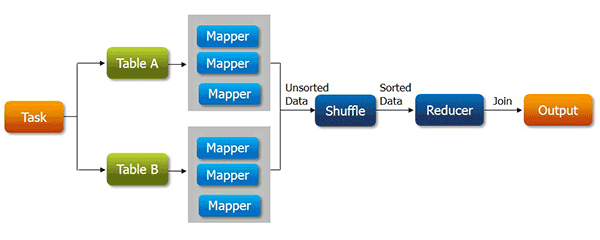

Whenever we apply a join operation, the job is assigned to a Map Reduce task that consists of two stages: a ‘Map stage’ and a ‘Reduce stage’. A mapper’s job during the Map stage is to read the data from the join tables and emit ‘join key’ and ‘join value’ pairs into an intermediate file. In the shuffle stage, this intermediate file is sorted and merged. The reducer’s job during the Reduce stage is to take this sorted result as input and complete the join.
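The three stages described above can be imitated in plain Python. This is only a single-process sketch with made-up rows and hypothetical function names (`map_stage`, `shuffle_stage`, `reduce_stage`); it mirrors the flow of a reduce-side join and is not how Hive is implemented.

```python
from collections import defaultdict

# Hypothetical rows in the form (join key, value).
emp_rows = [(10, "Asha"), (20, "Ravi"), (10, "Meena")]
dept_rows = [(10, "Sales"), (20, "Finance")]


def map_stage(rows, tag):
    """Emit (join_key, (tag, value)) pairs, tagging each value with its source table."""
    return [(key, (tag, value)) for key, value in rows]


def shuffle_stage(pairs):
    """Sort the intermediate pairs by key and group the values that share a key."""
    grouped = defaultdict(list)
    for key, tagged in sorted(pairs, key=lambda p: p[0]):
        grouped[key].append(tagged)
    return grouped


def reduce_stage(grouped):
    """For each key, pair every emp value with every dept value."""
    output = []
    for key, tagged_values in grouped.items():
        emps = [v for tag, v in tagged_values if tag == "emp"]
        depts = [v for tag, v in tagged_values if tag == "dept"]
        for e in emps:
            for d in depts:
                output.append((key, e, d))
    return output


intermediate = map_stage(emp_rows, "emp") + map_stage(dept_rows, "dept")
joined = reduce_stage(shuffle_stage(intermediate))
print(joined)
```

Note that every row of both tables passes through the sort in `shuffle_stage`, which is the cost a map-side join avoids.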

Map-side join is similar to a normal join, but the entire task is performed by the mappers alone, with no reduce stage.

Map-side join is mostly suitable when one of the tables is small, since that is where it optimizes the task.

How will the map-side join optimize the task?

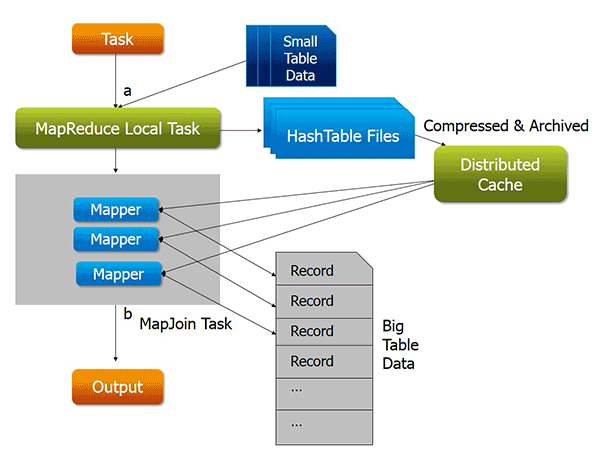

Assume that we have two tables, one of which is small. When we submit the join, a Map Reduce local task is created before the original join Map Reduce task. This local task reads the data of the small table from HDFS and stores it in an in-memory hash table. After reading, it serializes the in-memory hash table into a hash table file.

In the next stage, when the original join Map Reduce task is running, it moves the hash table file to the Hadoop distributed cache, which copies the file to each mapper’s local disk. All the mappers can then load this persistent hash table file back into memory and do the join work as before. The execution flow of the optimized map join is shown in the figure above. After optimization, the small table needs to be read just once. Also, if multiple mappers are running on the same machine, the distributed cache only needs to push one copy of the hash table file to that machine.
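The flow above can be sketched in Python as follows. This is a single-machine imitation under stated assumptions: a temporary file stands in for the distributed cache copy, `pickle` stands in for Hive's own serialization, and the rows and the `local_task` and `mapper` helpers are hypothetical.

```python
import os
import pickle
import tempfile

small_table = [(10, "Sales"), (20, "Finance")]  # (dept_id, dept_name)
big_table_splits = [                            # each split is handled by one mapper
    [("Asha", 10), ("Ravi", 20)],
    [("Meena", 10), ("Kiran", 30)],
]


def local_task(rows, path):
    """Read the small table once, build a hash table, and serialize it to a file."""
    hash_table = {}
    for key, value in rows:
        hash_table.setdefault(key, []).append(value)
    with open(path, "wb") as f:
        pickle.dump(hash_table, f)


def mapper(split, path):
    """Load the hash table file into memory and join each row by key lookup."""
    with open(path, "rb") as f:
        hash_table = pickle.load(f)
    output = []
    for name, dept_id in split:
        for dept_name in hash_table.get(dept_id, []):
            output.append((name, dept_id, dept_name))
    return output


with tempfile.TemporaryDirectory() as tmp:
    # The temporary file stands in for the copy pushed by the distributed cache.
    hash_file = os.path.join(tmp, "dept.hashtable")
    local_task(small_table, hash_file)
    map_join_result = []
    for split in big_table_splits:
        map_join_result.extend(mapper(split, hash_file))

print(map_join_result)
```

No sorting or reduce step appears anywhere: each mapper finishes its part of the join with a dictionary lookup per row.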

Advantages of using map side join:

- Map-side join helps in minimizing the cost that is incurred for sorting and merging in the shuffle and reduce stages.

- Map-side join also helps in improving the performance of the task by decreasing the time to finish the task.

Disadvantages of Map-side join:

- Map-side join is adequate only when one of the tables in the join is small enough to fit into memory. Hence it is not suitable when both tables hold huge amounts of data.
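As a rough illustration of this constraint, the sketch below picks a join strategy from the size of the smaller table. The `choose_join_strategy` function is hypothetical and much simpler than Hive's real planner; the 25,000,000-byte threshold is assumed here to mirror the default of Hive's `hive.mapjoin.smalltable.filesize` setting.

```python
# Assumed threshold, mirroring the default of hive.mapjoin.smalltable.filesize.
SMALL_TABLE_THRESHOLD_BYTES = 25_000_000


def choose_join_strategy(size_a_bytes, size_b_bytes,
                         threshold=SMALL_TABLE_THRESHOLD_BYTES):
    """Pick a map-side join only if the smaller table is within the threshold."""
    smaller = min(size_a_bytes, size_b_bytes)
    if smaller <= threshold:
        return "map-side join"
    return "reduce-side join"


GB = 1024 ** 3
print(choose_join_strategy(10_000, 50 * GB))   # small lookup table vs. large table
print(choose_join_strategy(2 * GB, 50 * GB))   # both tables are large
```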

Simple Example for Map Reduce Joins:

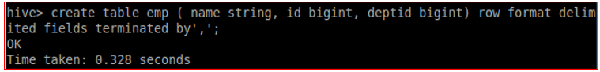

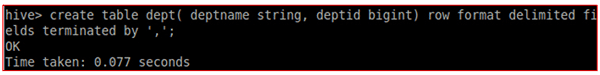

Let us create two tables:

- Emp: contains details of an Employee such as Employee name, Employee ID and the Department she belongs to.

- Dept: contains the details like the Name of the Department, Department ID and so on.

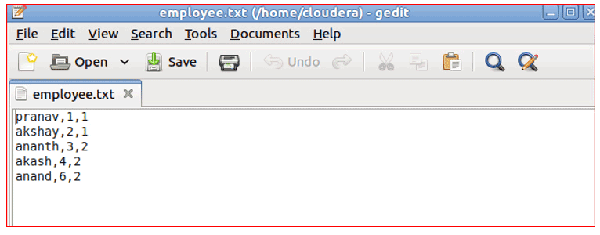

Create two input files as shown in the following image to load the data into the tables created.

employee.txt

dept.txt

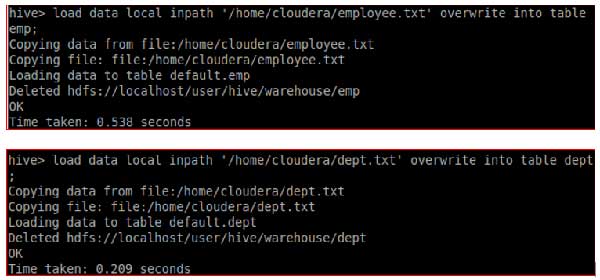

Now, let us load the data into the tables.

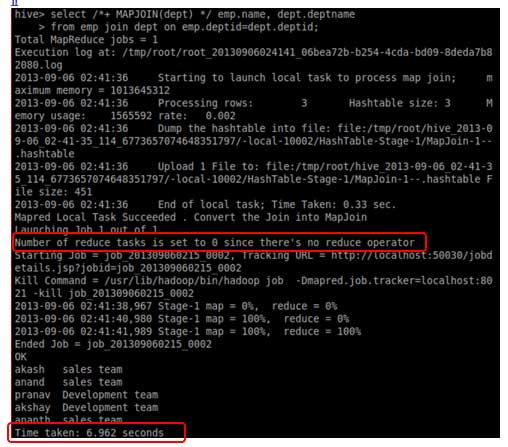

Let us perform the Map-side Join on the two tables to extract the list of departments in which each employee is working.

Here, the second table, dept, is the small table. Remember, the number of departments in an organization is almost always smaller than the number of employees.

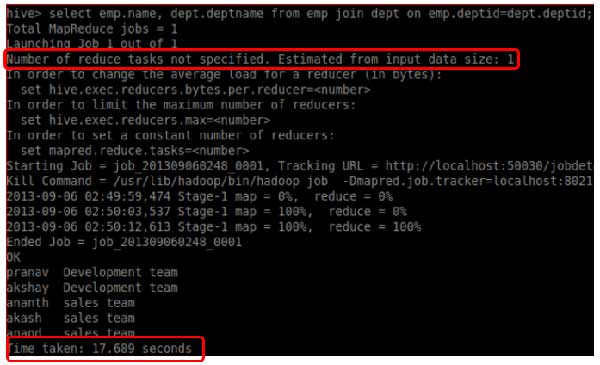

Now let’s perform the same task with a normal reduce-side join.

While executing both joins, you can observe two differences:

- The map-side join completed the job in less time than the normal join.

- The map-side join completed its job without any reducer, whereas the normal join needed one reducer.

Hence, Map-side Join is your best bet when one of the tables is small enough to fit in memory to complete the job in a short span of time.

In a real-world environment, you will have data-sets with huge amounts of data, so performing analysis and retrieving the data can be time consuming. If one of the data-sets is small, a map-side join helps to complete the job in less time.


References:

https://www.facebook.com/notes/facebook-engineering/join-optimization-in-apache-hive/470667928919

If I have two tables of size 2 GB and 50 GB respectively, how can we join these two tables in Hive?

Thank you for the detailed article!

My query is – will the map join be applied for data across all partitions when only one of the tables (say the larger table) is partitioned?

Hey Kirthika, thanks for checking out our blog. We’re glad you liked it.

The answer is No. Each input dataset must be divided into the same number of partitions, and it must be sorted by the same key (the join key) in each source.

Hope this helps. Cheers!

Hey it is really nice and crystal clear.. thanks for sharing it…. however, i have 3 questions in this context.

1. Is it the keyword /*+MAPJOIN(tablename)*/ that distinguishes a normal join from a map-side join? Or do we need to modify anything in the configuration files before running the join task?

2. You mentioned that a map-side join is worthwhile when one of the tables is small and, specifically, fits into the in-memory/distributed cache. Can we increase or decrease this cache size? If yes, where do we need to set it?

3. Does the same apply in the case of multiple tables, if one of them is small and fits into the cache?

Thanks in advance!

Hey Kishore, thanks for checking out our blog. We’re glad you found our explanation useful.

Here are the answers to these queries:

1. Yes, by using this hint we can do a map-side join.

2. You need to set hive.mapjoin.smalltable.filesize (the default is 25 MB).

3. Yes, the same concept applies to multiple tables as well.

Hope this helps. Cheers!

Nice one

Thanks Siddesh!!

Informative and clearly explained.

Can you please post an article on Reduce side joins as well.

Thanks.

Thanks Mahanth!! We will consider your suggestion.

Explained very clearly. Thanks for your post………

Thanks Gopi! Feel free to go through our other posts as well.

Great Job. nicely explained and example helps a lot. Even a small explain can do a big thing.

Thanks ..looking for more blogs like this, with examples.

Informative Article! Thanks a ton!

You are welcome, Santhosh!! Feel free to browse through our other blog posts as well.

Thanks, Nice Description

Thanks Amit!! Feel free to go through our other blog posts as well.