Most of us know the story of the woodcutter who went to work for a timber merchant. His salary was based on the number of trees he could cut in a day. On the first day, he cut 20 trees. Happy with the result and even more motivated, he tried harder the next day and came back with 30 trees. However, his success was short-lived. Within a week, the number of trees he was cutting began to dwindle. “I must be losing my strength,” the woodcutter thought. He went to see his boss and apologized for not living up to expectations. He was taken aback when his boss asked him:

“When did you last sharpen your axe?”

He replied, “I have been busy cutting more trees for you and I just did not have the time to sharpen my axe.”

Cut to the modern world: I am sure many of us can relate to this story to some extent. What is crystal clear is this: the axe (or, in today’s context, technology) needs to be upgraded constantly; without that, progress is impossible.

DOES UPGRADING TO NEW TECHNOLOGY HOLD THE KEY?

Most companies today, across all domains, are waking up to the need for technology and reaping the benefits. Take the example of book retailers. Traditional booksellers in stores could easily track which books were popular and which were not, based on how many copies of each title they sold. If there was a loyalty program, they could tie some of those purchases to individual customers. That was about it.

But once the focus shifted to online shopping, there was a complete turnaround in how retailers understood customers. Online retailers, the biggest example being Amazon, were able to track not only what customers bought but also their viewing history: how they navigated the site and how reviews, page layout and promotions influenced them. They even came up with algorithms to predict which book a particular customer would want to read next. Booksellers in physical stores simply could not gather this kind of information.

No prizes for guessing why Amazon pushed several bookstores out of business. It tapped into the value of managing large volumes of customer data, something traditional booksellers had overlooked.

This is where big data comes into the picture. The hype surrounding big data is not just hype. We now live in a world dominated by big data, whether we accept it or not, and the sheer pace at which data is being generated across the world is undeniable. Pat Gelsinger, the CEO of VMware, once said, “Data is the new science. Big data holds the answers.” Using that data effectively is the crux of the matter.

Companies like Facebook and Twitter have been using big data effectively for quite some time now. Today, organizations across all domains, from big MNCs to startups and from social media to health care, finance and airlines, are embracing the big data wave and investing heavily in it. The domino effect of these upgrades and new initiatives is bringing about many changes in job titles and job roles.

ARE YOU READY TO TAKE THE PLUNGE?

But the big question is: Are professionals ready to upgrade to the latest technology and take up new challenges? Shifting to big data is imperative, as it touches nearly every aspect of our lives, whether we realize it or not.

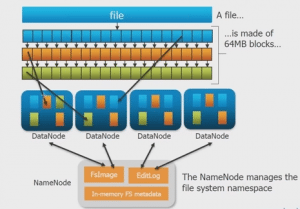

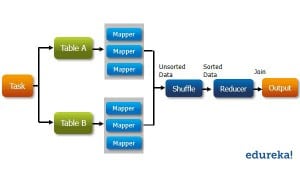

Technology moves at a very fast pace. If a Java professional is still fiddling with Java 1.3 code, it is time to look past it and upgrade to current technology. Big data and Hadoop are often mentioned in the same breath. Going by its demand and growing popularity, Hadoop, an open-source, Java-based programming framework, rules the market today. International Data Corporation predicts that the worldwide big data technology and services market will hit the $23.8 billion mark by 2016.

Is it prudent for a software testing professional to jump on the Hadoop bandwagon? The answer, I am sure, will be ‘yes’ for many. A testing professional’s job, which entails ironing out bugs and improving the quality of the finished product, can get monotonous at times. After a point, he may feel stuck in a rut, doing the same kind of work day in and day out. This is when upgrading his skills to big data and Hadoop can help, and it will widen his range of opportunities as well.

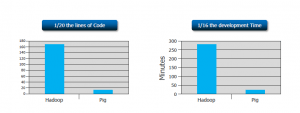

A mainframe professional’s work also involves bulk data processing, and handling large volumes of unstructured data can be time-consuming as well as monotonous. Take the case of someone who processes census data on a mainframe. His job includes monitoring and collecting questionnaires, checking, data entry, storage, tabulation and so on. This can get mind-boggling, right? The process is not only time-consuming but also expensive. Because Hadoop is an open-source platform, it can be a viable alternative for managing such volumes of data. Hadoop skills will also open up career opportunities that are growing by the day.
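To make the tabulation step a little more concrete, here is a minimal sketch of the map, shuffle and reduce pattern that Hadoop’s MapReduce model is built around. It is written in plain Java streams, not Hadoop’s own API, and it runs on a single machine. The record format (`householdId, district, persons`) and the names `CensusTabulation`, `mapRecord` and `tabulate` are hypothetical, chosen only for illustration. On a real Hadoop cluster the same logic would be split into a `Mapper` and a `Reducer` and run in parallel across many nodes.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.stream.Collectors;

// Hypothetical example: single-machine illustration of the MapReduce idea, not Hadoop code.
public class CensusTabulation {

    // Map phase: turn one raw record "householdId, district, persons"
    // (an assumed format) into a (district, persons) key-value pair.
    static Map.Entry<String, Long> mapRecord(String record) {
        String[] fields = record.split(",");
        return new SimpleEntry<>(fields[1].trim(), Long.parseLong(fields[2].trim()));
    }

    // Shuffle + reduce phase: group the pairs by district and sum the persons per district.
    // TreeMap keeps the keys sorted so the printed result is deterministic.
    static Map<String, Long> tabulate(List<String> records) {
        return records.stream()
                .map(CensusTabulation::mapRecord)
                .collect(Collectors.groupingBy(
                        Map.Entry::getKey,
                        TreeMap::new,
                        Collectors.summingLong(Map.Entry::getValue)));
    }

    public static void main(String[] args) {
        List<String> records = List.of(
                "H001, Pune, 4",
                "H002, Delhi, 3",
                "H003, Pune, 5");
        System.out.println(tabulate(records)); // prints {Delhi=3, Pune=9}
    }
}
```

Each record is mapped to a key-value pair independently, which is what lets Hadoop spread the map work across machines; only the grouping and summing step needs to see all values for a given key together.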

What about the data warehousing professional and the ETL developer, who handle loads and loads of data? Given the enormous flow of data today, they get so caught up in it that their work is restricted to just managing that flow. By upgrading to Hadoop, these professionals can handle large volumes of data far more efficiently. And they should not overlook the big opportunities in the data management sector.

There is also the business intelligence professional, whose challenge lies in storing big data. For example, in an advertising agency, he will constantly need answers to analytic questions such as: What drives people to certain content? What is their profile? How do we draw more people to an area? With the help of Hadoop, he can scale up and deliver good answers frequently.

CONCLUSION

Whether you are a Java professional, a software testing engineer or a business intelligence professional, there is no denying that big data technologies are becoming commonplace. Therefore, look beyond your current role and upgrade your skills to meet the challenges of big data technologies, lest you become like the woodcutter who was so busy felling trees that he forgot to sharpen his axe.

Is your profession/doma

If you want to learn big data and build a career in it, I would suggest taking up the Big Data Architect Certification.

Got a question for us? Mention it in the comments section and we will get back to you.


I am a fresher in IT and have done an MCA from IGNOU. Can I go for Big Data Hadoop? If yes, what prerequisite skills are needed, and how much salary can I get after doing this?

Hi Abhishek, knowledge of core Java is the only prerequisite for learning Hadoop. Since you have completed an MCA, you should already have the required knowledge of Java. This makes it easier for you to learn Hadoop.

As far as salary goes, it will depend largely on your expertise in Hadoop. What we are seeing now is just the start, and there is a lot of potential for professionals who are early movers in the big data space. You can refer to these articles for more information: https://www.edureka.co/blog/big-prospects-for-big-data/ and https://www.edureka.co/blog/5-reasons-to-learn-hadoop/

You can go through this link for more information about the course: https://www.edureka.co/big-data-hadoop-training-certification. You can also call us at US: 1800 275 9730 (Toll Free) or India: +91 88808 62004 to discuss this in detail.

I work on a middleware tool called MQSeries. How about me?

Hi Abhijeet,

Professionals from different backgrounds like Middleware/MQSeries/Mainframe are opting to learn Hadoop because the scope for jobs is immense. This is the right time, as demand exceeds the available skills. You can learn something that is new, challenging and has lots of potential from a career perspective. You can call us at US: 1800 275 9730 (Toll Free) or India: +91 88808 62004 to discuss in detail. You can find more course information at https://www.edureka.co/big-data-hadoop-training-certification

Hi, I want to know if this is useful for people who work in, or want to pursue, business analytics and have an IT technical background?

Hi Gaurav,

If you are inclined to learn analytics, then the R course is the right choice. There is no prerequisite as such, but a basic understanding of statistics would be beneficial. You can call us at US: 1800 275 9730 (Toll Free) or India: +91 88808 62004 to discuss in detail. You can find more course information at https://www.edureka.co/data-analytics-with-r-certification-training

I am a B.Tech student. I have learnt some basics of big data and Hadoop from Edureka’s online classes on YouTube. Can you tell me how big data and Hadoop are useful for a B.Tech student after graduation? I am very interested in learning this course.

I am a B.Com graduate with a PGDM in IT E-Business, currently working as an SEO Executive in Pune. I am willing to do the Big Data Hadoop course but don’t have knowledge of any programming language. Also, are there jobs available for Big Data Hadoop freshers in India? Could you please advise me whether I should do it or not?

Hi Sameer, your understanding is right! Core Java is the only prerequisite to learn Hadoop. We also provide a complimentary course on Java for a good grasp of MapReduce concepts. It would be better to discuss this over a call so that we can better understand your professional background and recommend the right Big Data course for you. You can call us at US: 1800 275 9730 (Toll Free) or India: +91 88808 62004 to discuss in detail.

I am a UNIX admin and I am planning to move to Hadoop development.

I am familiar with Python programming and have extensive knowledge of Linux.

Hi Indradev, Java is the prerequisite for our Big Data and Hadoop course, which covers the developer and architect points of view. As a Unix admin, you can also choose the Hadoop Administration course, where your knowledge of Linux will be very advantageous. If you want to get into the development side of Hadoop, you can go for the Big Data and Hadoop course; otherwise, you can choose the Hadoop Administration course, which is related to your domain. Please find course information at https://www.edureka.co/big-data-hadoop-training-certification. You can call us at US: 1800 275 9730 (Toll Free) or India: +91 88808 62004 to discuss in detail.

iOS dev

Hi Newb, it would be good to have more information about your professional background to effectively answer this question. You can call us at US: 1800 275 9730 (Toll Free) or India: +91 88808 62004 to discuss in detail.

I am a Teradata database administrator. How would learning big data help me?

Absolutely, Kasi! For professionals from an admin background, the Hadoop Administration course is a natural progression. The prerequisite for this course is exposure to basic Linux commands. You can check out this link for more details: https://www.edureka.co/hadoop-administration-training-certification. You can also contact us at US: 1800 275 9730 (Toll Free) or India: +91 88808 62004 to discuss this in detail.

I’m a Solaris and storage admin with Sun hardware knowledge. Is big data useful for me or not? Kindly send an email to my email ID suresh.pallapothu@yahoo.com

Absolutely, Suresh! For professionals from an admin background, the Hadoop Administration course is a natural progression. The prerequisite is exposure to basic Linux commands. You can check out this link for more details: https://www.edureka.co/hadoop-administration-training-certification. You can also contact us at US: 1800 275 9730 (Toll Free) or India: +91 88808 62004 to discuss this in detail.

Hi, I am a .NET developer. I have a keen interest in big data, but I don’t think the market is ready to hire big data developers from other backgrounds. What do you think is the scope for people like us?

Hi Ak, professionals from various backgrounds like data warehousing, mainframe, testing and Unix administration are moving to Hadoop because there is so much scope in it. The demand for Hadoop-skilled professionals is huge, and any professional (irrespective of their current field) with Hadoop skills can excel in the big data field. You can call us at US: 1800 275 9730 (Toll Free) or India: +91 88808 62004 to discuss in detail. Please find course information at https://www.edureka.co/big-data-hadoop-training-certification.