What is Pig and Pig Latin?

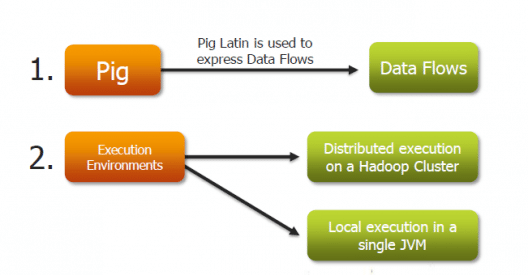

Pig is an open-source high level data flow system. It provides a simple language called Pig Latin, for queries and data manipulation, which are then compiled in to MapReduce jobs that run on Hadoop.

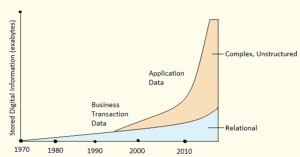

Pig is important as companies like Yahoo, Google and Microsoft are collecting huge amounts of data sets in the form of click streams, search logs and web crawls. Pig is also used in some form of ad-hoc processing and analysis of all the information.

Why Do you Need Pig?

- It’s easy to learn, especially if you’re familiar with SQL.

- Pig’s multi-query approach reduces the number of times data is scanned. This means 1/20th the lines of code and 1/16th the development time when compared to writing raw MapReduce.

- Performance of Pig is in par with raw MapReduce

- Pig provides data operations like filters, joins, ordering, etc. and nested data types like tuples, bags, and maps, that are missing from MapReduce.

- Pig Latin is easy to write and read.

Discover the secrets to harnessing big data for business success in our expert-led Big Data Online Course.

Why was Pig Created?

Pig was originally developed by Yahoo in 2006, for researchers to have an ad-hoc way of creating and executing MapReduce jobs on very large data sets. It was created to reduce the development time through its multi-query approach. Pig is also created for professionals from non-Java background, to make their job easier. You can even check out the details of Big Data with the Data Engineer Course.

Where Should Pig be Used?

Pig can be used under following scenarios:

- When data loads are time sensitive.

- When processing various data sources.

- When analytical insights are required through sampling.

Where Not to Use Pig?

- In places where the data is completely unstructured, like video, audio and readable text.

- In places where time constraints exist, as Pig is slower than MapReduce jobs.

- In places where more power is required to optimize the codes.

Applications of Apache Pig:

- Processing of web logs.

- Data processing for search platforms.

- Support for Ad-hoc queries across large data sets.

- Quick prototyping of algorithms for processing large data sets.

How Yahoo! Uses Pig:

Yahoo uses Pig for the following purpose:

- In Pipelines – To bring logs from its web servers, where these logs undergo a cleaning step to remove bots, company interval views and clicks.

- In Research – To quickly write a script to test a theory. Pig Integration makes it easy for the researchers to take a Perl or Python script and run it against a huge data set.

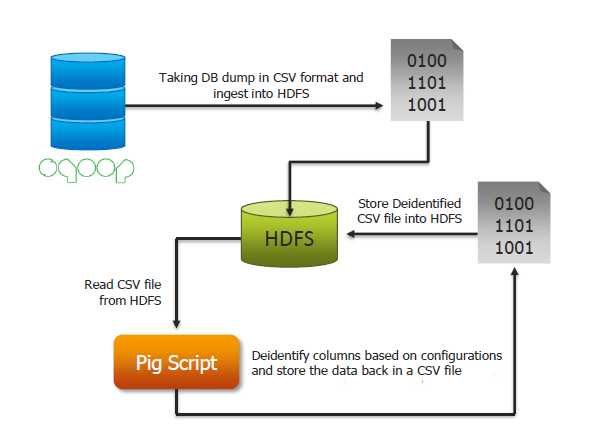

Use Case of Pig in Healthcare Domain:

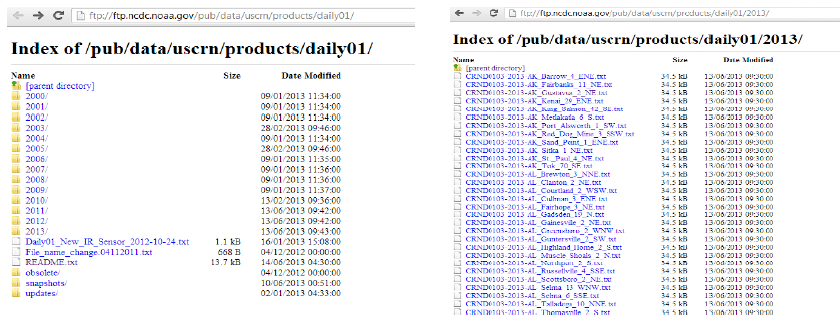

Comparing MapReduce and Pig using Weather Data:

Source: ftp://ftp.ncdc.noaa.gov/pub/data/uscrn/products/daily01/

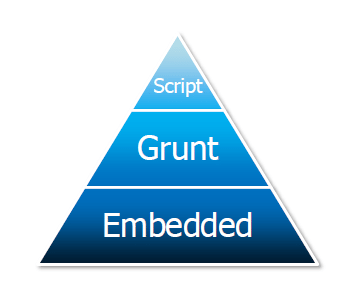

Basic Program Structure of Pig:

Here’s the hierarchy of Pig’s program structure:

- Script – Pig Can run a file script that contains Pig Commands. Eg: pig script .pig runs the command in the local file script.pig

- Grunt – It is an interactive shell for running Pig commands. It is also possible to run pig scripts from within Grunts using run and exec.

- Embedded – Can run Pig programs from Java, much like you can use JDBC to run SQL programs from Java.

Components of Pig:

What is Pig Latin Program?

Pig Latin program is made up of a series of operations or transformations that are applied to the input data to produce output. The job of Pig is to convert the transformations in to a series of MapReduce jobs. You can get a better understanding with the Azure Data Engineering Certification in Washington.

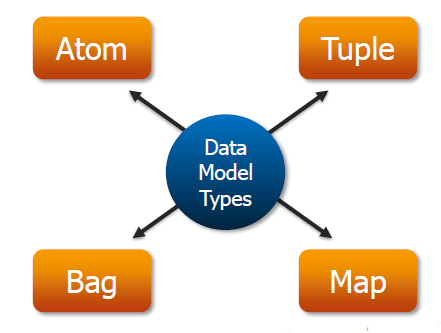

Basic Types of Data Models in Pig:

Pig comprises of 4 basic types of data models. They are as follows:

- Atom – It is a simple atomic data value. It is stored as a string but can be used as either a string or a number

- Tuple – An ordered set of fields

- Bag – An collection of tuples.

- Map – set of key value pairs.

Got a question for us? Mention them in the comments section and we will get back to you.

Related Posts:

Apache Pig UDF – Eval, Aggregate & Filter Functions

Hi….

pig -version

Exception in thread “main” java.lang.NoClassDefFoundError: jline/ConsoleReaderInputStream

at java.base/java.lang.Class.forName0(Native Method)

at java.base/java.lang.Class.forName(Class.java:398)

at org.apache.hadoop.util.RunJar.run(RunJar.java:214)

at org.apache.hadoop.util.RunJar.main(RunJar.java:136)

Caused by: java.lang.ClassNotFoundException: jline.ConsoleReaderInputStream

at java.base/java.net.URLClassLoader.findClass(URLClassLoader.java:471)

at java.base/java.lang.ClassLoader.loadClass(ClassLoader.java:588)

at java.base/java.lang.ClassLoader.loadClass(ClassLoader.java:521)

… 4 more

How to resolve the above error…..

Hi,

I am a learner of hadoop. i dont know java. I have linux knowledge.

could you please explain about the UDF,UDAF,UDTF in hive & Pig.

can you please explain step by step.

Thanks,

Anbu k.

Hi Anbu, they help

us to write a user defined functions on our own and then use them in

Pig/Hive.