In 2005, an open-source project called Taste was started, devoted solely to recommendations. When Mahout arrived in 2008, it absorbed Taste. In 2011, Myrrix was started as a separate, independent project that likewise focuses exclusively on recommendation systems.

In December 2013, Cloudera acquired Myrrix and moved it into a new project called Oryx.

Oryx is an open-source project that provides infrastructure for real-time, large-scale machine learning and predictive analytics. Because Oryx traces its lineage back to Mahout, the natural assumption is that it handles recommendation systems only. However, Cloudera is also working on other machine-learning algorithms and has built classification and clustering algorithms into the project. It now covers recommendation, classification, and clustering, which makes it roughly equivalent to Mahout in the kinds of problems it addresses.

Suppose you are building a recommendation system. With Oryx, you can call that recommendation system through a REST API, so a separately developed recommender can be integrated with other applications. Those applications need not be written in Java; they could be developed in .NET or any other platform.
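To make the REST integration concrete, here is a minimal client sketch in Python. It assumes a serving layer that answers `GET /recommend/<userID>` with one `itemID,score` pair per line. The endpoint path, the `howMany` parameter, the host, and the port are assumptions for illustration, not details confirmed by this article, so check them against the Oryx documentation for your version.

```python
from urllib.parse import quote
from urllib.request import urlopen


def recommend_url(base_url, user_id, how_many=10):
    """Build the request URL for an assumed GET /recommend/<userID> endpoint."""
    user = quote(str(user_id), safe="")
    return f"{base_url.rstrip('/')}/recommend/{user}?howMany={how_many}"


def parse_recommendations(body):
    """Parse an assumed 'itemID,score' per-line response into (item, score) pairs."""
    pairs = []
    for line in body.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines
        item_id, score = line.rsplit(",", 1)
        pairs.append((item_id, float(score)))
    return pairs


def fetch_recommendations(base_url, user_id, how_many=10, timeout=5.0):
    """Call the (assumed) endpoint over HTTP and return parsed recommendations."""
    url = recommend_url(base_url, user_id, how_many)
    with urlopen(url, timeout=timeout) as response:
        return parse_recommendations(response.read().decode("utf-8"))
```

Because the client only speaks HTTP and plain text, the same call could be made from .NET or any other platform. A call such as `fetch_recommendations("http://localhost:8091", "user42")` would only work against a running serving layer at that hypothetical address.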

Note that Oryx is not a library, a visualization tool, an exploratory analytics tool, or an environment. It simply represents a unified continuation of the Myrrix and Cloudera/ml projects. The project is still in its alpha stage and may contain bugs. Development is ongoing, and beta releases are expected by the end of this year, after which Oryx is expected to be included by default when you install the Cloudera distribution.

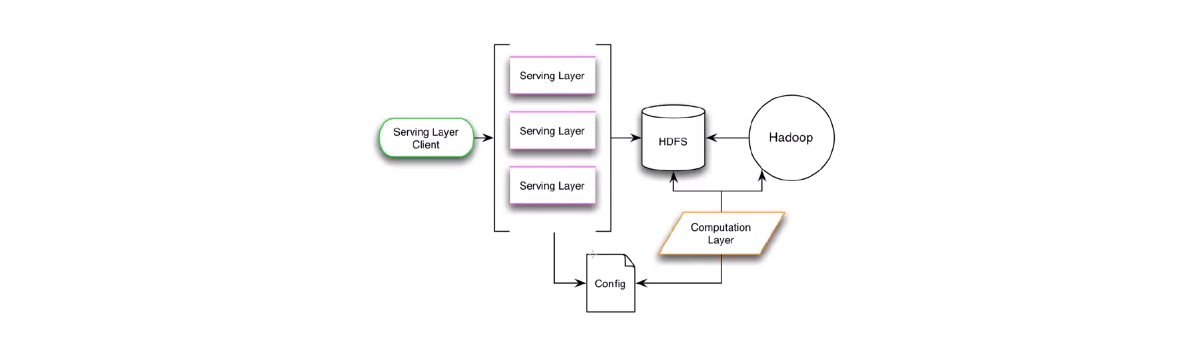

The Oryx architecture includes a serving layer and its clients, and there can be many serving layers. For scalability, it also uses Hadoop and HDFS: the input files reside in HDFS, and the computation layer picks them up from there. It can also run MapReduce jobs. The computation layer and the serving layers are tied together by a config file. Once you install Oryx and want to try it out, there will be an oryx.config file in which you configure the input file location, the output file location, and the model location. Only after this is set up can you run the computation layer and the serving layers.
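As a rough illustration of that setup step, the sketch below renders a config file holding the three locations mentioned above. The key names (`input-dir`, `output-dir`, `model-dir`) and the HDFS paths are hypothetical placeholders, not Oryx's actual settings. The real keys should be taken from the config file shipped with your Oryx release.

```python
def render_config(input_dir, output_dir, model_dir):
    """Render a config file body with hypothetical keys for the three locations."""
    lines = [
        "# Hypothetical key names for illustration only.",
        f'input-dir = "{input_dir}"',    # where the computation layer reads input
        f'output-dir = "{output_dir}"',  # where computed results are written
        f'model-dir = "{model_dir}"',    # where the serving layers load the model
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Example HDFS paths; replace with locations from your own cluster.
    print(render_config("/user/demo/input", "/user/demo/output", "/user/demo/model"), end="")
```

Keeping all three locations in one file is what lets the computation layer and the serving layers agree on where the data and the model live.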
