Talend is regarded as a next-generation leader in cloud and data integration software and currently holds a market share of 19.3%. This suggests strong demand for professionals with Talend Certification in the near future, so this is a good time to prepare yourself and get ahead of the competition. In this Talend interview questions blog, I have selected the top 75 questions that will help you crack your interview. I have divided this list of Talend interview questions into 4 sections:

- General Talend Interview Questions

- Data Integration Talend Interview Questions

- Big Data Talend Interview Questions

- Multiple Choice Questions


General – Talend Interview Questions

Why use Talend over other ETL tools available in the market?

Following are a few of the advantages of Talend:

Features Of Talend ETL Tool

| Feature | Description |
| --- | --- |
| Faster | Talend automates the tasks and further maintains them for you. |
| Less Expense | Talend provides open source tools which can be downloaded free of cost. Moreover, as the processes speed up, the developer rates are reduced as well. |
| Future Proof | Talend is comprised of everything that you might need to meet the marketing requirements today as well as in the future. |
| Unified Platform | Talend meets all of our needs under a common foundation for the products based on the needs of the organization. |
| Huge Community | Being open source, it is backed up by a huge community. |

What Is Talend?

Talend is an open source software integration platform/vendor.

- It offers data integration and data management solutions.

- This company provides various integration software and services for big data, cloud storage, data integration, data management, master data management, data quality, data preparation, and enterprise applications.

- However, Talend’s first product, Talend Open Studio for Data Integration, is more popularly referred to as Talend.

What is Talend Open Studio?

Talend Open Studio is an open source project that is based on Eclipse RCP. It supports ETL-oriented implementations and is generally provided for on-premises deployment. It acts as a code generator which produces data transformation scripts and underlying programs in Java. It provides an interactive and user-friendly GUI which lets you access the metadata repository containing the definitions and configurations for each process performed in Talend.

What is a project in Talend?

‘Project’ is the highest physical structure which bundles up and stores all types of Business Models, Jobs, metadata, routines, context variables or any other technical resources.

Describe a Job Design in Talend.

A Job is the basic executable unit of anything that is built using Talend. Technically, it is a single Java class that defines the working and scope of the available information through a graphical representation. It implements the data flow by translating the business needs into code, routines, and programs.

What is a ‘Component’ in Talend?

A component is a functional piece which is used to perform a single operation in Talend. Everything you see on the palette is a graphical representation of a component, and you can use components with a simple drag and drop. At the backend, a component is a snippet of Java code that is generated as a part of a Job (which is basically a Java class). This Java code is automatically compiled by Talend when the Job is saved.

Explain the various types of connections available in Talend.

Connections in Talend define either the data to be processed, the data output, or the logical sequence of a Job. The types of connections provided by Talend are:

- Row: The Row connection deals with the actual data flow. Following are the types of Row connections supported by Talend:

    - Main

    - Lookup

    - Filter

    - Rejects

    - ErrorRejects

    - Output

    - Uniques/Duplicates

    - Multiple Input/Output

- Iterate: The Iterate connection is used to perform a loop on files contained in a directory, on rows contained in a file or on the database entries.

- Trigger: The Trigger connection is used to create a dependency between Jobs or Subjobs which are triggered one after the other according to the trigger’s nature. Trigger connections are generalized in two categories:

    - Subjob Triggers

        - OnSubjobOK

        - OnSubjobError

        - Run if

    - Component Triggers

        - OnComponentOK

        - OnComponentError

        - Run if

- Link: The Link connection is used to transfer the table schema information to the ELT mapper component.

Differentiate between ‘OnComponentOk’ and ‘OnSubjobOk’.

| OnComponentOk | OnSubjobOk |
| --- | --- |
| Belongs to Component Triggers | Belongs to Subjob Triggers |
| The linked Subjob starts executing only when the previous component successfully finishes its execution | The linked Subjob starts executing only when the previous Subjob completely finishes its execution |
| This link can be used with any component in a Job | This link can only be used with the first component of the Subjob |

Why is Talend called a Code Generator?

Talend provides a user-friendly GUI where you can simply drag and drop the components to design a Job. When the Job is executed, Talend Studio automatically translates it into a Java class at the backend. Each component present in a Job is divided into three parts of Java code (begin, main and end). This is why Talend Studio is called a code generator.
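To make the begin/main/end split more concrete, here is a minimal hand-written sketch. It is not the code Talend actually generates; the class name `UppercaseComponentSketch` and its logic are hypothetical and only mimic how one component's work could be divided into the three parts.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical illustration only: this is NOT Talend's generated code.
// It mimics the begin / main / end split of a single component.
class UppercaseComponentSketch {

    static List<String> run(List<String> inputRows) {
        // "begin" part: runs once, before any row is processed.
        List<String> output = new ArrayList<>();
        int rowCount = 0;

        // "main" part: runs once for every incoming row.
        for (String row : inputRows) {
            output.add(row.toUpperCase());
            rowCount++;
        }

        // "end" part: runs once, after the last row has been processed.
        output.add("rows processed: " + rowCount);
        return output;
    }

    public static void main(String[] args) {
        System.out.println(run(Arrays.asList("alice", "bob")));
    }
}
```

The point of the sketch is the ordering: setup happens once, row logic repeats per row, and cleanup or summary work happens once at the end.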

What are the various types of schemas supported by Talend?

Some of the major types of schemas supported by Talend are:

- Repository Schema: This schema can be reused across multiple Jobs, and any changes made to it are automatically reflected in all the Jobs using it.

- Generic Schema: This schema is not tied to any particular source and is used as a shared resource across multiple types of data sources.

- Fixed Schema: These are read-only schemas which come predefined with some of the components.

Explain Routines.

Routines are reusable pieces of Java code. Using routines, you can write custom code in Java in order to optimize data processing, improve Job capacity, and extend Talend Studio features.

Talend supports two types of routines:

- System routines: These are read-only routines which you can call directly in any Job.

- User routines: These are custom routines which users create either from scratch or by adapting existing ones.
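As a rough idea of what a user routine can look like, here is a minimal sketch. The class name `MyStringRoutines` and the method `defaultIfBlank` are hypothetical; in Talend Studio a routine class would normally sit in the `routines` package, which is omitted here so the snippet runs on its own.

```java
// Hypothetical user routine; class and method names are illustrative only.
// In Talend Studio, routine classes normally live in the "routines" package.
class MyStringRoutines {

    /** Returns the trimmed value, or the fallback when the value is null or blank. */
    public static String defaultIfBlank(String value, String fallback) {
        if (value == null || value.trim().isEmpty()) {
            return fallback;
        }
        return value.trim();
    }

    public static void main(String[] args) {
        System.out.println(defaultIfBlank("   ", "N/A"));
        System.out.println(defaultIfBlank(" Paris ", "N/A"));
    }
}
```

A routine written this way is just a public static method, so an expression in a component could call it as `MyStringRoutines.defaultIfBlank(someColumn, "N/A")`, where `someColumn` stands for whatever input column you are cleaning.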

Can you define schema at runtime in Talend?

Schemas can’t be defined during runtime. As schemas define the movement of data, they must be defined while configuring the components.

Differentiate between ‘Built-in’ and ‘Repository’.

| Built-in | Repository |
| --- | --- |
| Stored locally inside a Job | Stored centrally inside the Repository |
| Can be used by the local Job only | Can be used globally by any Job within a project |
| Can be updated easily within a Job | Data is read-only within a Job |

What are Context Variables and why are they used in Talend?

Context variables are user-defined parameters which are passed into a Job at runtime. Their values may change as the Job is promoted from the Development to the Test and Production environments. Context variables can be defined in three ways:

- Embedded Context Variables

- Repository Context Variables

- External Context Variables
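The idea behind context variables can be sketched in plain Java: the Job logic stays fixed while a set of named values changes per environment. The variable names (`db_host`, `db_port`, `db_name`) and host names below are made up for illustration, and the maps only stand in for Talend context groups.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical sketch: context groups are modelled as plain maps.
// Variable names and values are invented for illustration.
class ContextSketch {

    static Map<String, String> context(String host, String port, String dbName) {
        Map<String, String> ctx = new LinkedHashMap<>();
        ctx.put("db_host", host);
        ctx.put("db_port", port);
        ctx.put("db_name", dbName);
        return ctx;
    }

    // The "Job" logic is identical for every environment; only the values differ.
    static String jdbcUrl(Map<String, String> ctx) {
        return "jdbc:mysql://" + ctx.get("db_host") + ":" + ctx.get("db_port") + "/" + ctx.get("db_name");
    }

    public static void main(String[] args) {
        System.out.println(jdbcUrl(context("localhost", "3306", "sales_dev")));   // Development
        System.out.println(jdbcUrl(context("db.example.com", "3306", "sales"))); // Production
    }
}
```

Inside a Talend Job, such values are referenced in component settings with the `context.` prefix, for example `context.db_host`.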

Can you define a variable which can be accessed from multiple Jobs?

Yes, you can do that by declaring a static variable within a routine. Then you need to add the setter/getter methods for this variable in the routine itself. Once done, this variable will be accessible from multiple Jobs.
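A minimal sketch of such a routine is shown below. The class name `SharedVariables` and the variable `batchId` are hypothetical. Note the assumption that the Jobs run inside the same JVM; a static field is not shared between separate Java processes.

```java
// Hypothetical routine holding a value that several Jobs can read and write.
// Assumption: the Jobs run in the same JVM; static state is per process.
class SharedVariables {

    private static String batchId;

    public static synchronized String getBatchId() {
        return batchId;
    }

    public static synchronized void setBatchId(String value) {
        batchId = value;
    }

    public static void main(String[] args) {
        setBatchId("B-42");               // e.g. set by the first Job
        System.out.println(getBatchId()); // e.g. read by a later Job
    }
}
```

The accessors are `synchronized` only as a precaution for Jobs that run in parallel threads.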

What is a Subjob and how can you pass data from parent Job to child Job?

A Subjob can be defined as a single component or a number of components which are joined by data flow. Every Job has at least one Subjob. To pass a value from the parent Job to the child Job, you need to make use of context variables.

Define the use of ‘Outline View’ in TOS.

Outline View in Talend Open Studio is used to keep track of the return values available in a component. This also includes the user-defined values configured in a tSetGlobalVar component.

Explain tMap component. List down the different functions that you can perform using it.

tMap is one of the core components which belongs to the ‘Processing’ family in Talend. It is primarily used for mapping the input data to the output data. tMap can perform the following functions:

- Add or remove columns

- Apply transformation rules on any type of field

- Filter input and output data using constraints

- Reject data

- Multiplex and demultiplex data

- Concatenate and interchange the data
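A few of these functions (concatenating columns, applying a transformation, filtering with a constraint, and sending the remaining rows to a reject output) can be sketched in plain Java. This is only a conceptual illustration, not tMap's implementation; the row layout `{firstName, lastName, age}` and the age constraint are assumptions made for the example.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Conceptual sketch of a tMap-like mapping; not Talend's implementation.
// Assumed input row layout: {firstName, lastName, age}.
class TMapSketch {

    static final class Result {
        final List<String> main = new ArrayList<>();    // rows that pass the constraint
        final List<String> rejects = new ArrayList<>(); // rows that fail it
    }

    static Result map(List<String[]> rows) {
        Result result = new Result();
        for (String[] row : rows) {
            String fullName = row[0] + " " + row[1]; // concatenate two input columns
            int age = Integer.parseInt(row[2]);
            if (age >= 18) {                         // filter constraint
                result.main.add(fullName.toUpperCase()); // transformation rule
            } else {
                result.rejects.add(fullName);        // reject output
            }
        }
        return result;
    }

    public static void main(String[] args) {
        Result r = map(Arrays.asList(
                new String[] {"Ann", "Lee", "30"},
                new String[] {"Tom", "Ray", "12"}));
        System.out.println("main=" + r.main + " rejects=" + r.rejects);
    }
}
```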

Differentiate between tMap and tJoin.

| tMap | tJoin |
| --- | --- |
| It is a powerful component which can handle complicated cases | Can only handle basic Join cases |
| Can accept multiple input links (one is main and the rest are lookups) | Can accept only two input links (main and lookup) |
| Can have more than one output link | Can have only two output links (main and reject) |
| Supports multiple types of join models like unique join, first join, and all join etc. | Supports only unique join |
| Supports inner join and left outer join | Supports only inner join |
| Can filter data using filter expressions | Cannot filter data |

What is a scheduler?

A scheduler is software which selects processes from the queue and loads them into memory for execution. Talend Open Studio does not provide a built-in scheduler.

Data Integration – Talend Interview Questions

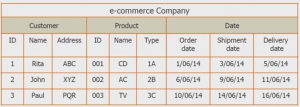

Describe the ETL Process.

ETL stands for Extract, Transform and Load. It refers to a trio of processes which are required to move the raw data from its source to a data warehouse, a business intelligence system, or a big data platform.

- Extract: This step involves accessing the data from all the storage systems, such as RDBMS, Excel files, XML files, flat files etc.

- Transform: In this step, the entire data set is analyzed and various functions are applied to it to transform it into the required format.

- Load: In this step, the processed data, i.e. the extracted and transformed data, is loaded into a target data repository, usually a database, while utilizing minimal resources.
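The three steps can be sketched as three small functions chained together. This is a toy example under stated assumptions: the source is an in-memory CSV string with `id,name` records, and a map stands in for the target table.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Toy ETL pipeline; source and target are in-memory stand-ins.
class EtlSketch {

    // Extract: read raw records from the source (assumed format: "id,name" per line).
    static List<String[]> extract(String csv) {
        List<String[]> rows = new ArrayList<>();
        for (String line : csv.split("\n")) {
            rows.add(line.split(","));
        }
        return rows;
    }

    // Transform: trim whitespace and convert names to upper case.
    static List<String[]> transform(List<String[]> rows) {
        List<String[]> out = new ArrayList<>();
        for (String[] row : rows) {
            out.add(new String[] {row[0].trim(), row[1].trim().toUpperCase()});
        }
        return out;
    }

    // Load: write the transformed rows to the target (a map standing in for a table).
    static Map<String, String> load(List<String[]> rows) {
        Map<String, String> target = new LinkedHashMap<>();
        for (String[] row : rows) {
            target.put(row[0], row[1]);
        }
        return target;
    }

    static Map<String, String> run(String csv) {
        return load(transform(extract(csv)));
    }

    public static void main(String[] args) {
        System.out.println(run("1, alice\n2, bob"));
    }
}
```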

Differentiate between ETL and ELT.

| ETL | ELT |
| --- | --- |
| Data is first Extracted, then it is Transformed before it is Loaded into a target system | Data is first Extracted, then it is Loaded to the target systems where it is further Transformed |
| With the increase in the size of data, processing slows down as entire ETL process needs to wait till Transformation is over | Processing is not dependent on the size of the data |
| Easy to implement | Needs deep knowledge of tools in order to implement |
| Doesn’t provide Data Lake support | Provides Data Lake support |
| Supports relational data | Supports unstructured data |

Can we use ASCII or Binary Transfer mode in SFTP connection?

No, transfer modes can’t be used in SFTP connections. SFTP doesn’t support any kind of transfer mode, as it is an extension to SSH and assumes an underlying secure channel.

How do you schedule a Job in Talend?

In order to schedule a Job in Talend, you first need to export the Job as a standalone program. Then, using your OS’s native scheduling tools (Windows Task Scheduler, Linux cron, etc.), you can schedule your Jobs.

Explain the purpose of tDenormalizeSortedRow.

tDenormalizeSortedRow belongs to the ‘Processing’ family of components. It synthesizes a sorted input flow in order to save memory: it combines all the sorted input rows of a group into one row, in which the distinct values are joined with item separators.
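The memory saving comes from the input being sorted: only the current group has to be held in memory at any time. The following sketch shows that idea in plain Java; it is not the component's code, and the row layout `{key, value}` and the `|` between key and values are assumptions for the example.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

// Conceptual sketch of denormalizing a sorted flow; not Talend's implementation.
// Assumed input: rows of {key, value}, already sorted by key.
class DenormalizeSortedSketch {

    static List<String> denormalize(List<String[]> sortedRows, String separator) {
        List<String> out = new ArrayList<>();
        String currentKey = null;
        Set<String> values = new LinkedHashSet<>(); // distinct values of the current group only

        for (String[] row : sortedRows) {
            if (currentKey != null && !currentKey.equals(row[0])) {
                // Key changed: the previous group is complete, so emit it and free its values.
                out.add(currentKey + "|" + String.join(separator, values));
                values.clear();
            }
            currentKey = row[0];
            values.add(row[1]);
        }
        if (currentKey != null) {
            out.add(currentKey + "|" + String.join(separator, values)); // emit the last group
        }
        return out;
    }

    public static void main(String[] args) {
        System.out.println(denormalize(Arrays.asList(
                new String[] {"a", "x"},
                new String[] {"a", "y"},
                new String[] {"a", "x"},
                new String[] {"b", "z"}), ","));
    }
}
```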

Differentiate between “insert or update” and “update or insert”.

insert or update: In this action, first Talend tries to insert a record, but if a record with a matching primary key already exists, then it updates that record.

update or insert: In this action, Talend first tries to update a record with a matching primary key, but if there is none, then the record is inserted.
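The difference is only the order in which the two statements are attempted, which the sketch below makes visible. A map stands in for the database table, and the returned string lists the simulated statements; none of this is Talend's actual implementation.

```java
import java.util.HashMap;
import java.util.Map;

// Conceptual sketch: a map stands in for a table keyed by primary key.
// The returned string lists the statements that were (conceptually) attempted.
class UpsertSketch {

    static String insertOrUpdate(Map<Integer, String> table, int key, String value) {
        if (!table.containsKey(key)) {
            table.put(key, value);
            return "INSERT";
        }
        table.put(key, value); // the insert hit an existing key, so fall back to an update
        return "INSERT failed, then UPDATE";
    }

    static String updateOrInsert(Map<Integer, String> table, int key, String value) {
        if (table.containsKey(key)) {
            table.put(key, value);
            return "UPDATE";
        }
        table.put(key, value); // the update matched no row, so fall back to an insert
        return "UPDATE matched nothing, then INSERT";
    }

    public static void main(String[] args) {
        Map<Integer, String> table = new HashMap<>();
        System.out.println(insertOrUpdate(table, 1, "a"));
        System.out.println(insertOrUpdate(table, 1, "b"));
        System.out.println(updateOrInsert(table, 2, "c"));
        System.out.println(updateOrInsert(table, 2, "d"));
    }
}
```

Both actions leave the table in the same final state. A reasonable rule of thumb is to pick the one whose first attempt usually succeeds: "insert or update" when most incoming rows are new, and "update or insert" when most already exist.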

Explain the usage of tContextLoad.

tContextLoad belongs to the ‘Misc’ family of components. This component helps in modifying the values of the active context on the fly. Basically, it is used to load a context from a flow. It sends warnings if the parameters defined in the input are not defined in the context and also if the context is not initialized in the incoming data.
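The behaviour described above can be approximated with two maps: one for the active context and one for the incoming key/value flow. This is a simplified sketch, not the component's code. The warning texts are invented, and the choice to ignore keys that are not defined in the context is an assumption of the example.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Simplified sketch of loading context values from a key/value flow.
// Warning messages and the "ignore unknown keys" behaviour are assumptions.
class ContextLoadSketch {

    static List<String> load(Map<String, String> context, Map<String, String> incoming) {
        List<String> warnings = new ArrayList<>();

        // Overwrite context values with incoming ones; warn about unknown keys.
        for (Map.Entry<String, String> entry : incoming.entrySet()) {
            if (context.containsKey(entry.getKey())) {
                context.put(entry.getKey(), entry.getValue());
            } else {
                warnings.add("not defined in context: " + entry.getKey());
            }
        }

        // Warn about context variables that the incoming flow did not set.
        for (String key : context.keySet()) {
            if (!incoming.containsKey(key)) {
                warnings.add("not set by input: " + key);
            }
        }
        return warnings;
    }

    public static void main(String[] args) {
        Map<String, String> context = new LinkedHashMap<>();
        context.put("db_host", "localhost");
        context.put("db_port", "3306");

        Map<String, String> incoming = new LinkedHashMap<>();
        incoming.put("db_host", "prod-db");
        incoming.put("timeout", "30");

        System.out.println(load(context, incoming));
        System.out.println(context);
    }
}
```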

Discuss the difference between the Xmx and Xms parameters.

The -Xms parameter is used to specify the initial heap size in Java, whereas the -Xmx parameter is used to specify the maximum heap size in Java.
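One way to see the effect of the two parameters is to ask the JVM for its heap figures. The small program below uses the standard `Runtime` API; the class name `HeapInfo` is arbitrary.

```java
// Prints the heap currently reserved by the JVM and the maximum it may grow to.
class HeapInfo {

    static long currentHeapBytes() {
        return Runtime.getRuntime().totalMemory(); // starts near the -Xms value
    }

    static long maxHeapBytes() {
        return Runtime.getRuntime().maxMemory();   // bounded by the -Xmx value
    }

    public static void main(String[] args) {
        long mb = 1024L * 1024L;
        System.out.println("current heap (MB): " + currentHeapBytes() / mb);
        System.out.println("max heap (MB): " + maxHeapBytes() / mb);
    }
}
```

Running it with different settings, for example `java -Xms256m -Xmx1024m HeapInfo.java`, should change the reported figures accordingly. The exact numbers vary by JVM, so they are not shown here.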

What is the use of Expression Editor in Talend?

From the Expression Editor, all expressions (Input, Var or Output) and constraint statements can be viewed and edited easily. The Expression Editor comes with a dedicated view for writing any function or transformation. The expressions needed for the data transformation can be written directly in the Expression Editor, or you can open the Expression Builder dialog box and write the data transformation expressions there.

Explain the error handling in Talend.

There are a few ways in which errors in Talend can be handled:

- For simple Jobs, one can rely on the exception throwing process of Talend Open Studio, which is displayed in the Run View as a red stack trace.

- Each Subjob and component has to return a code which drives the additional processing. The Subjob Ok/Error and Component Ok/Error links can be used to direct the error towards an error handling routine.

- The basic way of handling an error is to define an error handling Subjob which executes whenever an error occurs.
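In plain Java terms, routing through Ok/Error links resembles a try/catch that decides which branch runs next. The sketch below is only an analogy: the class name and the returned messages are invented, and a `Runnable` stands in for a Subjob.

```java
// Analogy only: a Runnable stands in for a Subjob, and the returned text
// names the branch that would run next. Messages are invented.
class ErrorHandlingSketch {

    static String runSubjob(Runnable subjob) {
        try {
            subjob.run();
            return "OnSubjobOk: continue with next Subjob";
        } catch (RuntimeException e) {
            // The error is routed to a dedicated error handling branch.
            return "OnSubjobError: handler received '" + e.getMessage() + "'";
        }
    }

    public static void main(String[] args) {
        System.out.println(runSubjob(() -> { /* succeeds */ }));
        System.out.println(runSubjob(() -> {
            throw new IllegalStateException("input file missing");
        }));
    }
}
```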

Differentiate between the usage of tJava, tJavaRow, and tJavaFlex components.

| Functions | tJava | tJavaRow | tJavaFlex |
| --- | --- | --- | --- |
| 1. Can be used to integrate custom Java code | Yes | Yes | Yes |
| 2. Will be executed only once at the beginning of the Subjob | Yes | No | No |
| 3. Needs input flow | No | Yes | No |
| 4. Needs output flow | No | Only if output schema is defined | Only if output schema is defined |
| 5. Can be used as the first component of a Job | Yes | No | Yes |
| 6. Can be used as a different Subjob | Yes | No | Yes |
| 7. Allows Main Flow or Iterator Flow | Both | Only Main | Both |
| 8. Has three parts of Java code | No | No | Yes |
| 9. Can auto propagate data | No | No | Yes |

How can you execute a Talend Job remotely?

You can execute a Talend Job remotely from the command line. All you need to do is export the Job along with its dependencies and then access its instruction files from the terminal.

Can you exclude headers and footers from the input files before loading the data?

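Yes, the headers and footers can be excluded easily before loading the data from the input files. For example, the Header and Footer fields of tFileInputDelimited specify how many rows to skip at the beginning and at the end of the file.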

Explain the process of resolving ‘Heap Space Issue’.

‘Heap Space Issue’ occurs when the JVM tries to add more data into the heap space area than the space available. To resolve this issue, you need to increase the memory allocated to Talend Studio by modifying the relevant Studio .ini configuration file according to your system and needs.

What is the purpose of ‘tXMLMap’ component?

This component transforms and routes the data from single or multiple sources to single or multiple destinations. It is an advanced component which is designed for transforming and routing XML data flows, especially when numerous XML data sources need to be processed.

Big Data – Talend Interview Questions

Differentiate between TOS for Data Integration and TOS for Big Data.

Talend Open Studio for Big Data is a superset of Talend Open Studio for Data Integration. It contains all the functionalities provided by TOS for DI along with some additional functionalities, such as support for Big Data technologies. That is, TOS for DI generates only Java code, whereas TOS for BD generates MapReduce code along with Java code.

What are the various Big data technologies supported by Talend?

In TOS for BD, the Big Data family is very large. A few of the most used technologies are:

- Cassandra

- CouchDB

- Google Storage

- HBase

- HDFS

- Hive

- MapRDB

- MongoDB

- Pig

- Sqoop etc.

How can you run multiple Jobs in parallel within Talend?

As Talend is a Java code generator, various Jobs and Subjobs can be executed in multiple threads to reduce the runtime of a Job. Basically, there are three ways to achieve parallel execution in Talend Data Integration:

- Multithreading

- tParallelize component

- Automatic parallelization
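At the Java level, the underlying idea is ordinary multithreading: independent pieces of work run at the same time, and a dependent piece waits for them. The sketch below uses the standard `CompletableFuture` API to run two stand-in "Jobs" in parallel and a third one afterwards. It is a conceptual illustration, not what Talend generates, and the job names are placeholders.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.concurrent.CompletableFuture;

// Conceptual sketch: job1 and job2 run in parallel, job3 runs after both finish.
class ParallelJobsSketch {

    static List<String> run() {
        List<String> log = Collections.synchronizedList(new ArrayList<>());

        // Start the two independent "Jobs" on background threads.
        CompletableFuture<Void> job1 = CompletableFuture.runAsync(() -> log.add("job1 done"));
        CompletableFuture<Void> job2 = CompletableFuture.runAsync(() -> log.add("job2 done"));

        // Wait until both have completed before starting the dependent "Job".
        CompletableFuture.allOf(job1, job2).join();
        log.add("job3 done");
        return log;
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```

The order of the first two log entries can differ between runs, while the third entry always comes last.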

What are the mandatory configurations needed in order to connect to HDFS?

In order to connect to HDFS you must provide the following details:

- Distribution

- NameNode URI

- User name

Which service is mandatory for coordinating transactions between Talend Studio and HBase?

The ZooKeeper service is mandatory for coordinating the transactions between TOS and HBase.

What is the name of the language used for Pig scripting?

Pig Latin is used for scripting in Pig.

When do you use tKafkaCreateTopic component?

This component creates a Kafka topic which the other Kafka components can use as well. It allows you to visually generate the command to create a topic with various properties at topic-level.

Explain the purpose of tPigLoad component.

Once the data is validated, this component helps in loading the original input data to an output stream in just one single transaction. It sets up a connection to the data source for the current transaction.

What component do you need to use to automatically close a Hive connection as soon as the main Job finishes execution?

Using the tPostJob and tHiveClose components, you can close a Hive connection automatically.

MCQ – Talend Interview Questions

In Talend Studio, where can you find the components needed to create a job?

- Repository

- Run view

- Designer Workspace

- Palette [Ans]

In the component view, where can you change the name of a component from?

- Basic settings

- Advanced settings

- Documentation

- View [Ans]

The HDFS components can only be used with Big Data batch or Big Data streaming Jobs.

- True

- False [Ans]

An analysis on Hive table content can be executed in which perspective of Talend Studio?

- Profiling [Ans]

- Integration

- Big Data

- Mediation

What does an asterisk next to the Job name signify in the design workspace?

- It is an active Job

- The Job contains unsaved changes [Ans]

- The job is currently running

- The Job contains errors

Suppose you have designed a Big Data batch using the MapReduce framework. Now you want to execute it on a cluster using Map Reduce. Which configurations are mandatory in the Hadoop Configuration tab of the Run view?

- Name Node [Ans]

- Data Node

- Resource Manager

- Job Tracker [Ans]

How do you find the configuration error message for a component?

- Right-click the component and select “Show Problems”

- Hover over the error symbol within the Designer view [Ans]

- Open the Errors view

- Open the Jobs view

What is the process of joining two input columns in the tMap configuration window?

- Dragging a column from the main input table to a column in another input table [Ans]

- Right-clicking one column in the input table and selecting “Join”

- Selecting two columns in two distinct input tables, right-clicking, and selecting “Join”

- Selecting two columns in two distinct input tables and dragging them to the output table

To import a file from FTP, which of the following are the mandatory components?

- tFTPConnection, tFTPPut

- tFTPConnection, tFTPFileList, tFTPGet

- tFTPConnection, tFTPGet [Ans]

- tFTPConnection, tFTPExists, tFTPGet

Suppose you have three Jobs, of which Jobs 1 and 2 are executed in parallel. Job 3 executes only after Jobs 1 and 2 complete their execution. Which of the following components can be used to set this up?

- tUnite

- tPostJob [Ans]

- tRunJob

- tParallelize [Ans]

For a tFileInputDelimited component, what is the default field separator parameter?

- Semicolon [Ans]

- Pipe

- Comma

- Colon

While saving the changes to a tMap configuration, sometimes Talend asks you for confirmation to propagate changes. Why?

- Because your changes affect the output schema and the source component should have a matching schema

- Because your changes affect the output schema and the target component should have a matching schema [Ans]

- Because your changes affect an input schema and the related source component should have a matching schema

- Because your changes have not been saved yet

In Talend, how do you add a Shape to a Business Model?

- Click and place it from the palette

- Drag it from the repository

- Click in the quick access toolbar

- Drag and drop it from the palette [Ans]

How do you create a row link between two components?

- Drag the target component onto the source component

- Right-click the source component and then click on the target component

- Drag the source component onto the target component

- Right-click the source component, click the row followed by the row type and then the target component [Ans]

Talend Open Studio generates the Job documentation in which of the following formats?

- HTML [Ans]

- TEXT

- CSV

- XML

We can directly change the generated code in Talend.

- True

- False [Ans]

What is the default date pattern in Talend Open Studio?

- MM-DD-YY

- DD-MM-YY [Ans]

- DD-MM-YYYY

- YY-MM-DD

MDM stands for

- Meta Data Management

- Mobile Device Management

- Master Data Management [Ans]

- Mock Data Management

In order to encapsulate and pass the collected log data to the output, which components must be used along with tLogCatcher?

- tWarn [Ans]

- tDie [Ans]

- tStatCatcher

- tAssertCatcher

Which component do you need to use in order to read data line by line from an input flow and store the data entries into iterative global variables?

- tIterateToFlow

- tFileList

- tFlowToIterate [Ans]

- tLoop

tMemorizeRows belongs to which component family in Talend?

- Misc [Ans]

- Orchestration

- Internet

- File

_________ is a powerful input component which holds the ability to replace a number of other components of the File family.

- tFileInputLDIF

- tFileInputRegex [Ans]

- tFileInputExcel

- tFileInputJSON

Which component do you need in order to prevent an unwanted commit in MySQL database?

- tMysqlRollback [Ans]

- tMysqlCommit

- tMysqlLookupInput

- tMysqlRow

A database connection defined in Repository can be reused by any Job within the project.

- True [Ans]

- False

Using which component can you integrate personalized Pig code with a Talend program?

- tPigCross

- tPigMap

- tPigDistinct

- tPigCode [Ans]

tKafkaOutput component receives messages serialized into which data type?

- byte

- byte[] [Ans]

- String[]

- Integer

To which two component families does the tHDFSProperties component belong?

- Big Data and Misc

- Orchestration and Big Data

- File and Big Data [Ans]

- Big Data and Internet

This component is used to read data from cache memory for high-speed data access

- tHashInput [Ans]

- tFileInputLDIF

- tHDFSInput

- tFileInputXML

Using which component can you calculate the processing time of one or more Subjobs in the main Job?

- tFlowMeter

- tChronometerStart [Ans]

- tFlowMeterCatcher

- tStatCatcher

The tUnite component belongs to which of the following two families?

- File and Processing

- Misc and Messaging

- Orchestration and Messaging

- Orchestration and Processing [Ans]

Using tJavaFlex, how many parts of Java code can you add in your Job?

- One

- Two

- Three [Ans]

- Four

Do Talend components always have a main connection as output?

Thanks for providing these interview questions. They are very helpful when I attend job interviews.

I found a few points that could be enhanced.

OnComponent vs OnSubjob

When using OnSubjob, the previous function call is complete, and the garbage collector can free up memory. Also, when using OnComponent, the data may not be committed, depending on where you put the link. These are 2 good reasons to use OnSubjobOK every time.

Since some answers mention pieces which are only available in the Enterprise studio (for example tParallelize), I feel that the following questions could be enhanced.

Can you define schema at runtime in Talend?

Talend Enterprise has a dynamic schema feature, so you are not limited to defining the schema at design time. However, it makes it hard to modify the data at runtime. (But one can write custom code to do it.)

Scheduling

Talend Enterprise has a web interface that lets you build and schedule jobs.

Hey Balazs, thank you for pointing this out. We hope that you liked our blogs and found it useful :)