In a very short span of time Apache Hadoop has moved from an emerging technology to an established solution for Big Data problems faced by today’s enterprises.

Since its launch in 2006, Amazon Web Services (AWS) has become synonymous with Cloud Computing. The CIA’s recent contract with Amazon to build a private cloud service inside the CIA’s data centers is proof of the growing popularity and reliability of AWS as a Cloud Computing vendor.

If you are a Big Data, Hadoop, and Cloud Computing enthusiast, you can start your journey by creating an Apache Hadoop Cluster on Amazon EC2 without spending a single penny from your pocket! This exercise will not only help you understand the nitty-gritty of an Apache Hadoop Cluster but also make you familiar with the AWS Cloud Computing ecosystem. This Big Data Certification blog can guide you through the important Hadoop certifications.

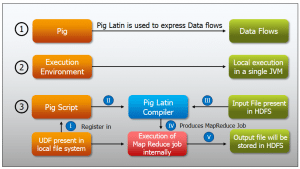

Amazon also provides a hosted solution for Apache Hadoop, named Amazon Elastic MapReduce (EMR). As of now, there is no free tier service available for EMR, and only Pig and Hive are available for use. This Big Data Course gives you a better understanding of Pig and Hive.

Apache Hadoop Cluster on Amazon EC2

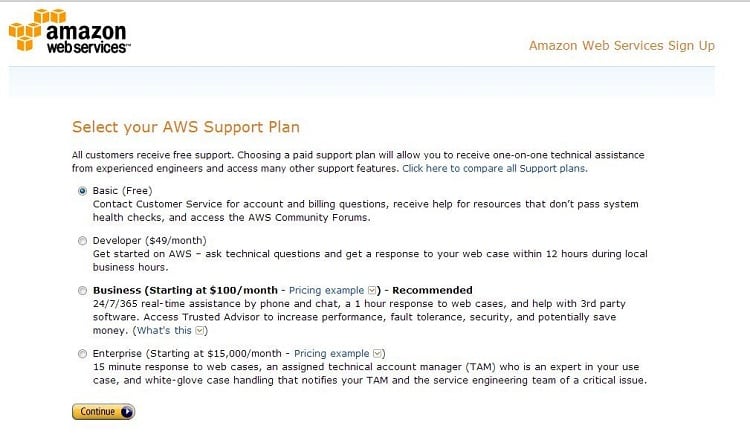

The first step towards your first Apache Hadoop Cluster is to create an account on Amazon.

The next step is to launch Amazon EC2 servers and configure them for the Apache Hadoop installation.

The complete process can be summarized in three simple steps:

Step 1:

Create your own AWS account. It’s free, and its intuitive design makes it a good place to start your Cloud Computing journey. Launch ‘t1.micro’ servers (free tier eligible) for your cluster.

Step 2:

Prepare these AWS EC2 servers for the Hadoop installation: upgrade the OS packages, install JDK 1.6, set up the hosts file, and configure password-less SSH from the Master to the Slaves.
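To make the hosts and password-less SSH parts of this step more concrete, here is a minimal Python sketch that only prints the hosts-file lines and the shell commands one might run on the Master. The host names, private IP addresses, and the `ubuntu` login user are assumptions for illustration and do not come from the guide. `ssh-copy-id` also assumes the Master can already log in to each Slave, which on EC2 typically means using the key pair chosen at launch.

```python
# Hypothetical cluster layout: host names and private IPs are placeholders.
NODES = {
    "master": "10.0.0.10",
    "slave1": "10.0.0.11",
    "slave2": "10.0.0.12",
}


def hosts_entries(nodes):
    """Return /etc/hosts lines mapping each private IP to its host name."""
    return [f"{ip}\t{name}" for name, ip in nodes.items()]


def ssh_setup_commands(nodes, user="ubuntu", master="master"):
    """Return shell commands to run on the master for password-less SSH."""
    # Generate an RSA key pair with an empty passphrase.
    commands = ["ssh-keygen -t rsa -N '' -f ~/.ssh/id_rsa"]
    # Copy the public key to every node except the master itself.
    for name in nodes:
        if name != master:
            commands.append(f"ssh-copy-id -i ~/.ssh/id_rsa.pub {user}@{name}")
    return commands


if __name__ == "__main__":
    print("\n".join(hosts_entries(NODES)))
    print("\n".join(ssh_setup_commands(NODES)))
```

The printed host entries would be appended to `/etc/hosts` on every node, so that the Master and Slaves can reach each other by name.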

Step 3:

Edit the following core Hadoop configuration files to set up the cluster.

- hadoop-env.sh

- core-site.xml

- hdfs-site.xml

- mapred-site.xml

- masters

- slaves

Copy these configuration files to Secondary Name Node and Slave nodes. Start the HDFS and MapReduce services.
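As a rough illustration of what the edited files contain, the Python sketch below renders minimal versions of the three `*-site.xml` files and of the `masters` and `slaves` files. It assumes Hadoop 1.x property names (`fs.default.name`, `dfs.replication`, `mapred.job.tracker`). The host names, port numbers, and replication factor are placeholders, not values taken from the guide.

```python
import xml.etree.ElementTree as ET


def hadoop_site_xml(properties):
    """Render a Hadoop *-site.xml document from a dict of property names to values."""
    root = ET.Element("configuration")
    for name, value in properties.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = str(value)
    return ET.tostring(root, encoding="unicode")


def plain_list(hosts):
    """Render a masters or slaves file: one host name per line."""
    return "\n".join(hosts) + "\n"


# Hypothetical values: host names, ports, and replication factor are placeholders.
SITE_FILES = {
    "core-site.xml": {"fs.default.name": "hdfs://master:8020"},
    "hdfs-site.xml": {"dfs.replication": 2},
    "mapred-site.xml": {"mapred.job.tracker": "master:8021"},
}

if __name__ == "__main__":
    for filename, props in SITE_FILES.items():
        print(filename)
        print(hadoop_site_xml(props))
    # In Hadoop 1.x, masters lists the Secondary Name Node host
    # and slaves lists the worker nodes.
    print(plain_list(["secondarynn"]))
    print(plain_list(["slave1", "slave2"]))
```

Each rendered string would be written to the file of the same name in Hadoop's configuration directory on the Master before the files are copied to the other nodes.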

So, isn’t it easy to install an Apache Hadoop Cluster on Amazon EC2 free tier Ubuntu servers in just 30 minutes?

This free Cloud Computing PDF guide provides step-by-step details, with corresponding screenshots, for setting up a Multi-Node Apache Hadoop Cluster on AWS EC2.


Where is the attached PDF doc?

Is this updated?

Is this an updated guide?

Hey Gaurav, thanks for checking out our blog. Yes, a few things have changed since this blog was posted.

1) The steps for installation are the same but you will find that the UI has changed since then.

2) This guide uses the t1.micro instance type. It has since been replaced by t2.micro, which is eligible for the free tier as well.

3) The Hadoop version which has been used in this blog is 1.7, but now 2.7 is used. Here’s a related blog on the newer version that you might find relevant: https://www.edureka.co/blog/setting-up-a-multi-node-cluster-in-hadoop-2.X/ .

Also, here’s a blog that will give you a better idea about EC2 AWS: https://www.edureka.co/blog/ec2-aws-tutorial-elastic-compute-cloud/. Hope this helps. Cheers!

Hi ,

I am having trouble setting up password-less SSH between the master and the data nodes. Can anyone please help?

Hey Tathagat, thanks for checking out our blog. Please refer to these blogs that will help you with the setup:

Hadoop Architecture – HA – https://goo.gl/hzouuA

Hadoop Installation – Single Node – https://goo.gl/t6XbaU

Hadoop Installation – Multi Node – https://goo.gl/YdTn1B

Hope this helps. Cheers!

Do we have the latest guide?

Hi, we will update as soon as we have the latest information.

Can’t try this now as it’s outdated. Can we have an installation guide for the latest version of Hadoop?

Hi Aalap, we are in the process of updating it with the latest version of Hadoop. Please stay tuned for the updated post. Thanks!

Nice post, will surely try this.