In today’s post, let’s discuss the HBase architecture. Let’s brush up on the basics of HBase before we delve deeper into the architecture.

HBase – The Basics:

HBase is an open-source, distributed, non-relational (NoSQL), versioned, multi-dimensional, column-oriented store that is modeled after Google BigTable and runs on top of HDFS. “NoSQL” is a broad term meaning that the database isn’t an RDBMS that supports SQL as its primary access language. There are many types of NoSQL databases: Berkeley DB is a good example of a local NoSQL database, whereas HBase is very much a distributed database.

HBase provides BigTable-like capabilities. It began as a project by Powerset to process massive amounts of data for natural language search, and it was developed as part of Apache’s Hadoop project on top of HDFS (Hadoop Distributed File System). It provides a fault-tolerant way of storing large quantities of sparse data. HBase is really more a “data store” than a “database” because it lacks many of the features available in an RDBMS, such as typed columns, secondary indexes, triggers, and advanced query languages.

In column-oriented databases, a data table is stored as sections of columns of data rather than as rows of data. The data model of a column-oriented database consists of the table name, row key, column family, columns, and timestamp. In an HBase table, each row is uniquely identified by its row key, and each version of a cell value is identified by its timestamp. In this data model, the column families are static (they are defined with the table), whereas the columns are dynamic (they can differ from row to row). Before we look into the HBase architecture, let us see when HBase is a good fit.
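To make the data model concrete, here is a minimal sketch in plain Python. It is not the HBase client API: the `table` structure and the `put` and `get` helpers are hypothetical names used only to show how a value is addressed by row key, column family, column, and timestamp.

```python
from collections import defaultdict

# Toy model of one table (illustrative only, not the HBase API):
# row key -> "family:column" -> {timestamp: value}
table = defaultdict(lambda: defaultdict(dict))

def put(row_key, family, column, value, timestamp):
    """Store one versioned cell."""
    table[row_key][f"{family}:{column}"][timestamp] = value

def get(row_key, family, column, timestamp=None):
    """Return the newest version at or before `timestamp` (latest if None)."""
    versions = table[row_key][f"{family}:{column}"]
    candidates = [ts for ts in versions if timestamp is None or ts <= timestamp]
    return versions[max(candidates)] if candidates else None

# Two versions of the same cell, told apart by timestamp.
put("user1", "info", "email", "a@old.example", timestamp=1)
put("user1", "info", "email", "a@new.example", timestamp=2)
# A different row uses a different column in the same (static) family.
put("user2", "info", "phone", "555-0100", timestamp=1)

latest = get("user1", "info", "email")                 # newest version
older = get("user1", "info", "email", timestamp=1)     # version as of ts=1
```

Notice that `user2` never declares an `email` column: only the family `info` is shared, which is what “static column families, dynamic columns” means in practice.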

When to go for HBase?

HBase is a good option only when there are hundreds of millions or billions of rows. Moving from an RDBMS to HBase should be treated as a complete redesign as opposed to a port. In other words, HBase is not optimized for classic transactional applications or even relational analytics. It is also not a complete substitute for HDFS when doing large batch MapReduce. Then why should you go for HBase? If your application has a variable schema where each row is slightly different, then you should look at HBase.

HBase Architecture:

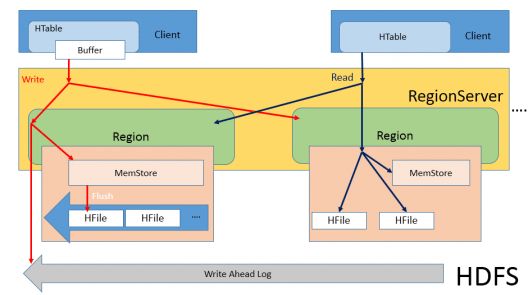

The figure above illustrates the HBase architecture.

In HBase, there are three main components: the Master (HMaster), the Region Servers, and ZooKeeper. The other components are the Memstore, HFile, and WAL.

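Like HDFS, on which it runs, HBase uses a master-slave architecture in which the HMaster is the master node and the Region Servers are the slave nodes. The HMaster assigns regions to Region Servers and handles administrative operations such as creating tables. It is not on the data path, though: when a client sends a write request, the client first finds out which Region Server hosts the region for that row key (through ZooKeeper and the hbase:meta table) and then sends the request directly to that Region Server.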

Region Server:

A Region Server is a worker process that usually runs alongside an HDFS data node. When a Region Server (RS) receives a write request, it directs the request to the specific Region. Each Region stores a set of rows. Row data can be split across multiple column families (CFs). The data of a particular CF is stored in an HStore, which consists of a Memstore and a set of HFiles.
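How a request finds its Region can be sketched as a lookup over sorted key ranges. This is a simplified illustration, assuming each Region covers a contiguous range of row keys; the start keys and region names below are made up, and `locate_region` is a hypothetical helper rather than an HBase function.

```python
import bisect

# Hypothetical layout: each region covers [start_key, next_start_key).
# The first region starts at the empty key, so every row key maps somewhere.
region_start_keys = ["", "g", "n", "t"]
region_names = ["region-1", "region-2", "region-3", "region-4"]

def locate_region(row_key):
    """Return the name of the region whose key range contains row_key."""
    # bisect_right finds the first start key greater than row_key;
    # the region just before that position owns the key.
    index = bisect.bisect_right(region_start_keys, row_key) - 1
    return region_names[index]
```

For example, `locate_region("apple")` falls before `"g"` and lands in `region-1`, while `locate_region("zebra")` sorts after `"t"` and lands in `region-4`.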

What does Memstore do?

Memstore is an in-memory write buffer: it holds the most recent writes for one column family of a region until they are persisted to disk. It does not store logs; that is the job of the WAL, which we will see below. Because the Memstore lives in the memory of the Region Server, its contents are volatile. When certain thresholds are met, Memstore data gets flushed into an HFile.

The key purpose of the Memstore is to let HBase store data on HDFS ordered by row key. As HDFS is designed for sequential reads/writes, with no file modifications allowed, HBase cannot efficiently write data to disk as it is being received: the written data would not be sorted (when the input is not sorted), which means it would not be optimized for future retrieval. To solve this problem, HBase buffers the most recently received data in memory (in the Memstore), “sorts” it before flushing, and then writes it to HDFS using fast sequential writes. Hence, an HFile contains a list of sorted rows.
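The buffer-sort-flush idea can be sketched in a few lines of Python. This is a toy model, not HBase code: the `ToyMemstore` class and its cell-count threshold are assumptions made for illustration (real HBase flushes based on configurable size thresholds and keeps the buffer ordered as writes arrive, whereas this sketch sorts once at flush time).

```python
class ToyMemstore:
    """Toy in-memory write buffer for one column family (not the HBase API)."""

    def __init__(self, flush_threshold=3):
        self.flush_threshold = flush_threshold  # hypothetical: number of rows
        self.cells = {}   # row key -> value, in arrival order while buffering
        self.hfiles = []  # each flush appends one immutable, sorted "HFile"

    def put(self, row_key, value):
        self.cells[row_key] = value
        if len(self.cells) >= self.flush_threshold:
            self.flush()

    def flush(self):
        # Sort by row key once, then write the whole batch in one go,
        # which stands in for a single sequential write to HDFS.
        hfile = tuple(sorted(self.cells.items()))
        self.hfiles.append(hfile)
        self.cells = {}

store = ToyMemstore(flush_threshold=3)
store.put("row30", "c")   # writes arrive out of order
store.put("row10", "a")
store.put("row20", "b")   # third write reaches the threshold and triggers a flush
```

After the third `put`, `store.hfiles[0]` holds the three rows sorted by row key, and the buffer is empty again.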

Every time a Memstore flush happens, one HFile is created for each CF, and frequent flushes may create tons of HFiles. Since HBase has to look at many HFiles during reads, the read speed can suffer. To avoid opening too many HFiles and the read performance deterioration that comes with it, HBase uses an HFile compaction process: periodically (when certain configurable thresholds are met), it compacts multiple smaller HFiles into a big one. Obviously, the more files created by Memstore flushes, the more work (extra load) for the system. On top of that, although compaction is usually performed in parallel with serving other requests, when HBase cannot keep up with compacting HFiles (yes, there are configured thresholds for that too), it will block writes on the RS, which is highly undesirable.
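Compaction is essentially a merge of several sorted files into one. The sketch below shows that idea only, assuming each HFile is a list of `(row_key, value)` pairs sorted by row key with unique keys per file; `compact` is a hypothetical helper and leaves out everything real compaction also deals with, such as versions, deletes, and thresholds.

```python
import heapq

def compact(hfiles):
    """Merge sorted HFiles (oldest first) into one sorted HFile.

    When the same row key appears in several files, the newest file wins.
    """
    # Tag each cell with its file's age so that ties on row key are
    # resolved by age and the values themselves are never compared.
    tagged = [
        [(row_key, age, value) for row_key, value in hfile]
        for age, hfile in enumerate(hfiles)
    ]
    merged = {}
    for row_key, _age, value in heapq.merge(*tagged):
        merged[row_key] = value  # cells from newer files overwrite older ones
    return list(merged.items())

older_file = [("row10", "a"), ("row30", "c")]
newer_file = [("row10", "A"), ("row20", "b")]
big_file = compact([older_file, newer_file])
```

Here `big_file` contains `row10`, `row20`, and `row30` in order, with `row10` taking the value from the newer file, so a later read has one file to check instead of two.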

We cannot be sure that data held only in the Memstore is safe. Assume that a particular Region Server goes down. Then the data that resides in that server’s memory will be lost.

To overcome this problem, every write that arrives at a Region Server is written to the WAL as well. WAL stands for Write-Ahead Log, and it resides on HDFS, which is permanent storage. This way we can make sure that even if the Region Server goes down, the data will not be lost, i.e. the WAL holds a copy of every edit that has not yet been persisted to an HFile. During recovery, the edits in the WAL are replayed to rebuild what was in the Memstore. Once the Memstore has been flushed to an HFile, the corresponding WAL entries are no longer needed and can be discarded, which makes sure that we are not running out of memory and that the log does not grow forever.

Let us take a simple example: I want to add row 10. When that write request comes in, the edit is recorded in both the WAL and the Memstore. Once that particular row is written into an HFile, it is cleared from the Memstore and its WAL entries can be discarded.
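The row 10 example can be played through in a small simulation. This is only a sketch under simplifying assumptions: a Python list stands in for the durable WAL on HDFS, dictionaries stand in for the Memstore and the HFiles, and `ToyRegionServer` with its methods is a hypothetical name, not part of HBase.

```python
class ToyRegionServer:
    """Toy write path: WAL first, then Memstore (not the HBase API)."""

    def __init__(self, wal, hfile=None):
        self.wal = wal                                  # stands in for a durable log on HDFS
        self.memstore = {}                              # volatile: lost if the process dies
        self.hfile = hfile if hfile is not None else {}  # stands in for flushed HFiles

    def put(self, row_key, value):
        self.wal.append((row_key, value))  # 1. record the edit durably
        self.memstore[row_key] = value     # 2. buffer it in memory

    def flush(self):
        self.hfile.update(self.memstore)   # persist the buffered edits
        self.memstore.clear()
        self.wal.clear()                   # flushed edits no longer need the log

    @classmethod
    def recover(cls, wal, hfile):
        """Rebuild the Memstore after a crash by replaying the surviving WAL."""
        server = cls(wal, hfile)
        for row_key, value in wal:
            server.memstore[row_key] = value
        return server

wal = []
server = ToyRegionServer(wal)
server.put("row10", "hello")

# Simulate a crash before any flush: the Memstore is gone, the WAL survives.
surviving_hfile = server.hfile
del server
recovered = ToyRegionServer.recover(wal, surviving_hfile)
```

After recovery, `recovered.memstore` contains `row10` again even though it was never flushed; calling `recovered.flush()` then moves it into the HFile and empties both the Memstore and the WAL.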

ZooKeeper:

HBase comes integrated with ZooKeeper. By default, when I start HBase, a ZooKeeper instance is also started. The reason is that ZooKeeper helps us keep track of all the region servers that are there for HBase: how many region servers there are and which of them are currently alive. It holds small pieces of coordination data that Hadoop itself does not track, which reduces the overhead on Hadoop, which already keeps track of most of your metadata. Hence, the HMaster gets the details of the region servers by contacting ZooKeeper.
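The tracking described above can be pictured with a small simulation. This is not the ZooKeeper API: `ToyCoordinator` and its methods are hypothetical, and the sketch assumes the usual pattern in which each region server's registration lives only as long as its session, so a crashed server disappears from the list on its own.

```python
class ToyCoordinator:
    """Toy stand-in for ZooKeeper's liveness tracking (not the ZooKeeper API)."""

    def __init__(self):
        self._sessions = {}  # session id -> region server name

    def register(self, session_id, server_name):
        # A region server announces itself; the entry lasts as long as its session.
        self._sessions[session_id] = server_name

    def expire(self, session_id):
        # Session lost (crash or network failure): the entry disappears.
        self._sessions.pop(session_id, None)

    def live_region_servers(self):
        # What a master would read to learn which servers are available.
        return sorted(self._sessions.values())

zk = ToyCoordinator()
zk.register(1, "rs-a")
zk.register(2, "rs-b")
before = zk.live_region_servers()
zk.expire(1)                      # rs-a goes down
after = zk.live_region_servers()
```

In this run, `before` lists both servers and `after` lists only `rs-b`, which is the kind of change the HMaster reacts to when it reassigns regions.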


