Hive is a data warehouse system for Hadoop that facilitates easy data summarization, ad-hoc queries, and the analysis of large datasets stored in Hadoop-compatible file systems. Hive structures data into well-understood database concepts such as tables, rows, columns and partitions. It supports primitive types such as integers, floats, doubles, and strings, as well as complex types: maps (associative arrays), arrays (lists), and structs. A Serializer/Deserializer (SerDe) API is used to move data in and out of tables.
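As a rough illustration of the primitive and complex types mentioned above, here is a minimal HiveQL sketch. The table name `user_events`, its columns, and the delimiters are hypothetical and chosen only for this example; `ROW FORMAT DELIMITED` relies on Hive's built-in text SerDe.

```sql
-- Hypothetical table used only to illustrate Hive column types.
CREATE TABLE IF NOT EXISTS user_events (
  user_id    INT,                              -- primitive types
  score      DOUBLE,
  name       STRING,
  tags       ARRAY<STRING>,                    -- list
  properties MAP<STRING, STRING>,              -- associative array
  address    STRUCT<city:STRING, zip:STRING>   -- struct
)
ROW FORMAT DELIMITED
  FIELDS TERMINATED BY ','
  COLLECTION ITEMS TERMINATED BY '|'
  MAP KEYS TERMINATED BY ':'
STORED AS TEXTFILE;

-- Complex types are accessed by index, by key, and by field name.
SELECT name, tags[0], properties['browser'], address.city
FROM user_events;
```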

Let’s look at the Hive data models in detail:

Hive Data Models:

The Hive data models contain the following components:

- Databases

- Tables

- Partitions

- Buckets or clusters

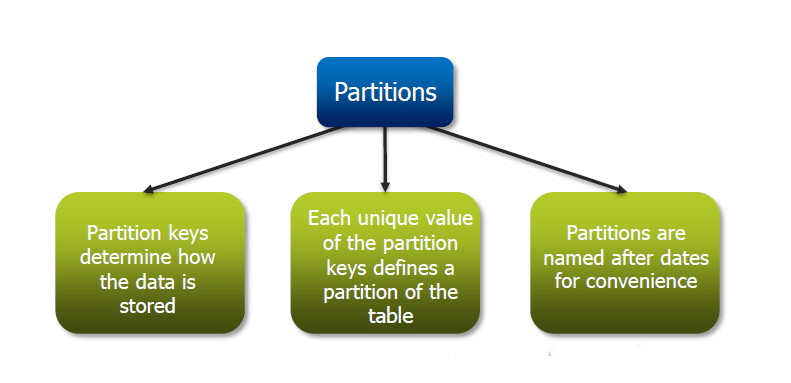

Partitions:

Partitioning means dividing a table into coarse-grained parts based on the value of a partition column such as ‘date’. This makes queries on slices of the data faster.

So, what is the function of a partition? The partition keys determine how the data is stored: each unique value of the partition key defines one partition of the table, and Hive keeps each partition in its own subdirectory under the table's directory. Partitions are often named after dates for convenience. The idea is loosely similar to ‘Block Splitting’ in HDFS.

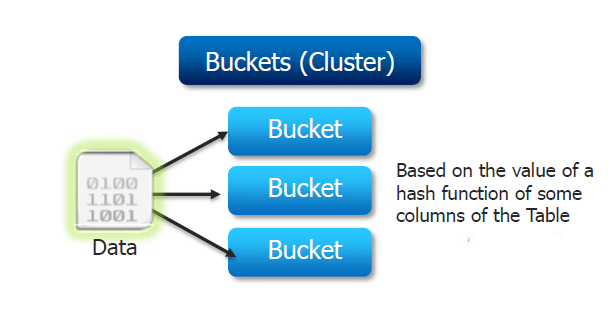
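A date-partitioned table could be sketched as follows. The table `page_views`, its columns, and the date value are hypothetical; they are not taken from the article.

```sql
-- Hypothetical table partitioned by a date string.
CREATE TABLE IF NOT EXISTS page_views (
  user_id INT,
  url     STRING
)
PARTITIONED BY (dt STRING)
ROW FORMAT DELIMITED FIELDS TERMINATED BY ','
STORED AS TEXTFILE;

-- Each partition value gets its own subdirectory under the table
-- directory, for example .../page_views/dt=2000-01-01/
ALTER TABLE page_views ADD IF NOT EXISTS PARTITION (dt = '2000-01-01');

-- List the partitions that currently exist.
SHOW PARTITIONS page_views;

-- Filtering on the partition column lets Hive read only the matching
-- partition directory instead of scanning the whole table.
SELECT COUNT(*) FROM page_views WHERE dt = '2000-01-01';
```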

Buckets:

Buckets give extra structure to the data that can be used for more efficient queries. Rows are assigned to buckets by hashing the bucketing column. A join of two tables that are bucketed on the same columns, including the join column, can be implemented as a map-side join. Bucketing by user ID means we can quickly evaluate a user-based query by running it on a randomized sample of the total set of users.
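Bucketing by user ID and sampling one bucket might look like the sketch below. The tables `users_bucketed` and `users_raw` and the bucket count of 32 are assumptions made for illustration only.

```sql
-- Hypothetical table bucketed by user ID into 32 buckets.
CREATE TABLE IF NOT EXISTS users_bucketed (
  user_id INT,
  name    STRING
)
CLUSTERED BY (user_id) INTO 32 BUCKETS
STORED AS TEXTFILE;

-- On Hive releases before 2.0, bucketing has to be enforced first:
--   SET hive.enforce.bucketing = true;

-- Populate the buckets from a hypothetical unbucketed source table.
INSERT OVERWRITE TABLE users_bucketed
SELECT user_id, name FROM users_raw;

-- Run a query on roughly 1/32 of the users by reading a single bucket.
SELECT *
FROM users_bucketed TABLESAMPLE (BUCKET 1 OUT OF 32 ON user_id);
```

Because the sample is taken on the bucketing column, Hive can read just one bucket file rather than the full table.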


Got a question for us? Please mention them in the comments section and we will get back to you.


Consider data for the year 2000.

test.csv

country_code,product_code,rpt_period

us,crd,2000

us,pcl,2000

us,mtg,2000

in,crd,2000

in,pcl,2000

in,mtg,2000

Now I am appending newly generated 2001 records to test.csv. After appending the new data, my file looks like this:

append.csv

country_code,product_code,rpt_period

us,crd,2000

us,pcl,2000

us,mtg,2000

in,crd,2000

in,pcl,2000

in,mtg,2000

us,crd,2001

us,pcl,2001

us,mtg,2001

in,crd,2001

in,pcl,2001

in,mtg,2001

Are the scenarios below possible in Hive? If yes, please answer these questions.

1. How do I create a partitioned table schema for this data? I want country_code and product_code as the partition columns.

2. For instance, I want to load only the year-2000 records from test.csv into table Foo. How do I load them?

3. How do I load only the 2001 records from append.csv into table Foo?

Thanks.

Hey Hareesh, thanks for checking out our blog. Here’s the explanation to your query:

1. How to create a partitioned table schema for this data, with country_code and product_code as the partition columns?

Create a non-partitioned table:

create table Foo1(country_code STRING, product_code STRING, rpt_period INT)

row format delimited

fields terminated by ','

stored as textfile;

load data local inpath '/home/cloudera/filename' into table Foo1;

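Create a partitioned table. The partition columns are declared only in the partitioned by clause, not in the regular column list:

create table txnrecsByCatstate(rpt_period INT)

partitioned by (country_code STRING, product_code STRING)

row format delimited

fields terminated by ','

stored as textfile;

2. For instance, I want to load only the year-2000 records from test.csv into table Foo. How to load?

Now insert only the year-2000 rows from the Foo1 table into txnrecsByCatstate with a dynamic-partition insert. The partition columns must come last in the select list:

set hive.exec.dynamic.partition=true;

set hive.exec.dynamic.partition.mode=nonstrict;

INSERT OVERWRITE TABLE txnrecsByCatstate PARTITION (country_code, product_code) SELECT rpt_period, country_code, product_code FROM Foo1 WHERE rpt_period = 2000;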

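3. How to load append.csv (only 2001 records) to table Foo?

You can append data to a Hive table. INSERT INTO adds rows to the existing data, whereas INSERT OVERWRITE replaces it. Load append.csv into the non-partitioned table first (use overwrite into table so that the earlier file is replaced), then run the insert with INSERT INTO instead of INSERT OVERWRITE and filter on rpt_period = 2001.

Row-level UPDATE is a separate matter: Hive supports it only on transactional (ACID) tables.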
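The append step could be sketched end to end as below. This is a self-contained illustration under stated assumptions: the table names `foo_staging` and `foo_partitioned` are hypothetical, and the file path assumes append.csv sits in the same /home/cloudera directory used earlier.

```sql
-- Staging table that mirrors the CSV layout (hypothetical name).
CREATE TABLE IF NOT EXISTS foo_staging (
  country_code STRING,
  product_code STRING,
  rpt_period   INT
)
ROW FORMAT DELIMITED FIELDS TERMINATED BY ','
STORED AS TEXTFILE
TBLPROPERTIES ('skip.header.line.count' = '1');  -- ignore the CSV header row

-- Partitioned target table (hypothetical name); partition columns are
-- declared only in PARTITIONED BY.
CREATE TABLE IF NOT EXISTS foo_partitioned (
  rpt_period INT
)
PARTITIONED BY (country_code STRING, product_code STRING)
STORED AS TEXTFILE;

-- OVERWRITE replaces whatever the staging table held before.
-- The path is an assumption; adjust it to where append.csv actually lives.
LOAD DATA LOCAL INPATH '/home/cloudera/append.csv'
OVERWRITE INTO TABLE foo_staging;

-- Allow Hive to derive partition values from the query results.
SET hive.exec.dynamic.partition = true;
SET hive.exec.dynamic.partition.mode = nonstrict;

-- INSERT INTO appends to existing partitions; rows already loaded
-- for the year 2000 are left untouched.
INSERT INTO TABLE foo_partitioned PARTITION (country_code, product_code)
SELECT rpt_period, country_code, product_code
FROM foo_staging
WHERE rpt_period = 2001;
```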

Hope this helps. Cheers!