Advanced DevOps Certification Training with G ...

- 23k Enrolled Learners

- Weekend/Weekday

- Live Class

We often are ready to wait in order to obtain the best solution to our problem. But what if, you just don’t have the time? Hill Climbing is one such Algorithm is one that will find you the best possible solution to your problem in the most reasonable period of time!

Hill Climbing is a heuristic search used for mathematical optimisation problems in the field of Artificial Intelligence.

So, given a large set of inputs and a good heuristic function, the algorithm tries to find the best possible solution to the problem in the most reasonable time period. This solution may not be the absolute best(global optimal maximum) but it is sufficiently good considering the time allotted.

The definition above implies that hill-climbing solves the problems where we need to maximise or minimise a given real function by selecting values from the given inputs.

A great example of this is the Travelling Salesman Problem where we need to minimise the distance travelled by the salesman.

A heuristic function is one that ranks all the potential alternatives in a search algorithm based on the information available. It helps the algorithm to select the best route to its solution.

This basically means that this search algorithm may not find the optimal solution to the problem but it will give the best possible solution in a reasonable amount of time.

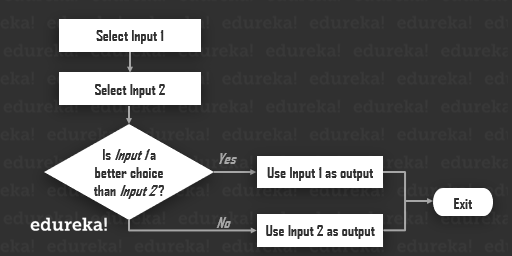

The algorithm is as follows :

Step1: Generate possible solutions.

Step2: Evaluate to see if this is the expected solution.

Step3: If the solution has been found quit else go back to step 1.

Hill climbing takes the feedback from the test procedure and the generator uses it in deciding the next move in the search space. Hence, we call it as a variant of the generate-and-test algorithm.

At any point in state space, the search moves in that direction only which optimises the cost of function with the hope of finding the most optimum solution at the end.

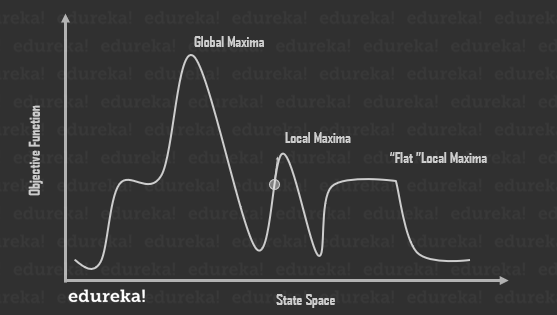

The State-space diagram is a graphical representation of the set of states(input) our search algorithm can reach vs the value of our objective function(function we intend to maximise/minimise). Here;

1. The X-axis denotes the state space ie states or configuration our algorithm may reach.

2. The Y-axis denotes the values of objective function corresponding to a particular state.

The best solution will be that state space where objective function has maximum value or global maxima.

Following are the different regions in the State Space Diagram;

Local maxima: It is a state which is better than its neighbouring state however there exists a state which is better than it (global maximum). This state is better because here the value of the objective function is higher than its neighbours.

Global maxima: It is the best possible state in the state space diagram. This because at this state, objective function has the highest value.

Plateau/flat local maxima: It is a flat region of state space where neighbouring states have the same value.

Ridge: It is a region which is higher than its neighbour’s but itself has a slope. It is a special kind of local maximum.

Current state: The region of state space diagram where we are currently present during the search. (Denoted by the highlighted circle in the given image.)

Hill Climbing is the simplest implementation of a Genetic Algorithm. Instead of focusing on the ease of implementation, it completely rids itself of concepts like population and crossover. It has faster iterations compared to more traditional genetic algorithms, but in return, it is less thorough than the traditional ones.

Hill Climbing works in a very simple manner. So, we’ll begin by trying to print “Hello World”.

Even though it is not a challenging problem, it is still a pretty good introduction.

So, here’s a basic skeleton of the solution.

best = generate_random_solution()

best_score = evaluate_solution(best)

while True:

print('Best score so far', best_score, 'Solution', best)

new_solution = copy_solution(best)

mutate_solution(new_solution)

score = evaluate(new_solution)

if evaluate(new_solution) < best_score:

best = new_solution

best_score = score

def generate_random_solution(length=11): return [random.choice(string.printable) for _ in range(length)]

This function needs to return a random solution. In a hill-climbing algorithm, making this a separate function might be too much abstraction, but if you want to change the structure of your code to a population-based genetic algorithm it will be helpful.

def evaluate(solution):

target = "Hello, World!".split("")

diff = 0

for i in range(len(target)):

s = solution[i]

t = target[i]

diff += abs(ord(s) - ord(t))

return diffSo our evaluation function is going to return a distance metric between two strings.

Step3: Make a copy of the solution and mutate it slightly.

def mutate_solution(solution): index = random.randint(0, len(solution) - 1) solution[index] = random.choice(string.printable)

Basically, to reach a solution to a problem, you’ll need to write three functions.

Let’s get the code in a state that is ready to run.

import random

import string

def generate_random_solution(length=13):

return [random.choice(string.printable) for _ in range(length)]

def evaluate(solution):

target = list("Hello, World!")

diff = 0

for i in range(len(target)):

s = solution[i]

t = target[i]

diff += abs(ord(s) - ord(t))

return diff

def mutate_solution(solution):

index = random.randint(0, len(solution) - 1)

solution[index] = random.choice(string.printable)

best = generate_random_solution()

best_score = evaluate(best)

while True:

print('Best score so far', best_score, 'Solution', "".join(best))

if best_score == 0:

break

new_solution = list(best)

mutate_solution(new_solution)

score = evaluate(new_solution)

if evaluate(new_solution) < best_score:

best = new_solution

best_score = scoreTesting the CodeBest score so far 392 Solution #KAKZ'yjrJo/5

Best score so far 392 Solution #KAKZ'yjrJo/5

Best score so far 390 Solution #KAKZ/yjrJo/5

Best score so far 347 Solution #KAKZ/yjrJon5

...

Best score so far 27 Solution Jojon,"osld

...

Best score so far 12 Solution H_mmn, Vosld

...

Best score so far 4 Solution Hemmo, Vorld

...

Best score so far 0 Solution Hello, World!Simple hill climbing is the simplest way to implement a hill-climbing algorithm. It only evaluates the neighbour node state at a time and selects the first one which optimizes current cost and set it as a current state. It only checks it’s one successor state, and if it finds better than the current state, then move else be in the same state. This algorithm has the following features:

Less time consuming

Less optimal solution

The solution is not guaranteed

Step 1: Evaluate the initial state, if it is goal state then return success and Stop.

Step 2: Loop Until a solution is found or there is no new operator left to apply.

Step 3: Select and apply an operator to the current state.

Step 4: Check new state:

If it is goal state, then return success and quit.

else if it is better than the current state then assign new state as a current state.

else if not better than the current state, then return to step 2.

Step 5: Exit.

The steepest-Ascent algorithm is a variation of the simple hill-climbing algorithm. This algorithm examines all the neighbouring nodes of the current state and selects one neighbour node which is closest to the goal state. This algorithm consumes more time as it searches for multiple neighbours.

Step 1: Evaluate the initial state, if it is goal state then return success and stop, else make the current state as your initial state.

Step 2: Loop until a solution is found or the current state does not change.

Let S be a state such that any successor of the current state will be better than it.

For each operator that applies to the current state;

Apply the new operator and generate a new state.

Evaluate the new state.

If it is goal state, then return it and quit, else compare it to the S.

If it is better than S, then set new state as S.

If the S is better than the current state, then set the current state to S.

Step 5: Exit.

3. Stochastic hill climbing

Stochastic hill climbing does not examine for all its neighbours before moving. Rather, this search algorithm selects one neighbour node at random and evaluate it as a current state or examine another state.

Hill climbing cannot reach the best possible state if it enters any of the following regions :

1. Local maximum: At a local maximum all neighbouring states have values which are worse than the current state. Since hill-climbing uses a greedy approach, it will not move to the worse state and terminate itself. The process will end even though a better solution may exist.

To overcome the local maximum problem: Utilise the backtracking technique. Maintain a list of visited states. If the search reaches an undesirable state, it can backtrack to the previous configuration and explore a new path.

2. Plateau: On the plateau, all neighbours have the same value. Hence, it is not possible to select the best direction.

To overcome plateaus: Make a big jump. Randomly select a state far away from the current state. Chances are that we will land at a non-plateau region

3. Ridge: Any point on a ridge can look like a peak because the movement in all possible directions is downward. Hence, the algorithm stops when it reaches such a state.

To overcome Ridge: You could use two or more rules before testing. It implies moving in several directions at once.

A hill-climbing algorithm which never makes a move towards a lower value guaranteed to be incomplete because it can get stuck on a local maximum. And if algorithm applies a random walk, by moving a successor, then it may complete but not efficient.

Simulated Annealing is an algorithm which yields both efficiency and completeness.

Mechanically, the term annealing is a process of hardening a metal or glass to a high temperature then cooling gradually, so this allows the metal to reach a low-energy crystalline state.

The same process is used in simulated annealing in which the algorithm picks a random move, instead of picking the best move. If the random move improves the state, then it follows the same path. Otherwise, the algorithm follows the path which has a probability of less than 1 or it moves downhill and chooses another path.

Hill Climbing is used in inductive learning methods too. This technique is also used in robotics for coordinating multiple robots in a team.

So with this, I hope this article has sparked your interest in hill climbing and other such interesting algorithms in Artificial Intelligence.

Edureka’s Data Science Masters Training is curated by industry professionals as per the industry requirements & demands. You will master the concepts such as Statistics, Data Science, Python, Apache Spark & Scala, Tensorflow and Tableau. The course has been specially curated by industry experts with real-time case studies .Advance your career in AI with specialized Agentic AI Training course, focusing on building and deploying autonomous AI solutions.