HDFS Commands

In my previous blogs, I discussed what HDFS is, its features, and its architecture. The first step in Big Data training is executing HDFS commands and exploring how HDFS works. In this blog, I will talk about the HDFS commands you can use to access the Hadoop File System.

So, let me walk you through the HDFS commands that are used most frequently when working with the Hadoop File System, and how they work.

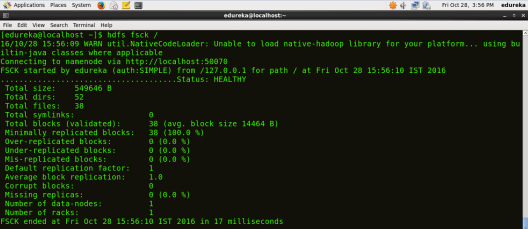

fsck

HDFS Command to check the health of the Hadoop file system.

Command: hdfs fsck /
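
If you want to use fsck from a script, a minimal sketch could look like the one below. The function name hdfs_is_healthy is hypothetical, and matching on the word HEALTHY in the report is an assumption about the output of your Hadoop version.

```shell
# Hypothetical helper (not part of Hadoop): succeeds when the fsck report
# for the given path mentions HEALTHY. Matching on the report text is an
# assumption, so check the wording your Hadoop version prints.
hdfs_is_healthy() {
  hdfs fsck "${1:-/}" 2>/dev/null | grep -q 'HEALTHY'
}
```

For example, you could run hdfs_is_healthy / && echo "HDFS looks healthy". For a more detailed report, fsck also accepts options such as -files, -blocks, and -locations.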

ls

HDFS Command to display the list of Files and Directories in HDFS.

Command: hdfs dfs -ls /
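
The ls command also accepts -R to list recursively and -h to print sizes in a human-readable form. As a small sketch, the hypothetical helper below prints only the file paths under a directory. It assumes the usual -ls layout, where lines for files start with "-" and the path is the last field.

```shell
# Hypothetical helper: print the paths of regular files under an HDFS directory.
# Assumes the usual -ls layout, where file lines start with "-" and the
# path is the last field (paths containing spaces are not handled).
hdfs_list_files() {
  hdfs dfs -ls -R "$1" | awk '/^-/ { print $NF }'
}
```

You could call it as hdfs_list_files /new_edureka.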

mkdir

HDFS Command to create a directory in HDFS.

Usage: hdfs dfs -mkdir /directory_name

Command: hdfs dfs -mkdir /new_edureka

Note: Here we are trying to create a directory named “new_edureka” in HDFS.
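
If the parent directories may not exist yet, mkdir accepts -p to create them along the way. Here is a minimal sketch that creates a directory and then confirms it exists using the -test -d option. The function name hdfs_make_dir is hypothetical.

```shell
# Hypothetical helper: create an HDFS directory (including missing parents)
# and then confirm that the path now exists as a directory.
hdfs_make_dir() {
  hdfs dfs -mkdir -p "$1" && hdfs dfs -test -d "$1"
}
```

For example: hdfs_make_dir /new_edureka/logs/2017 (the nested path here is only an illustration).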

touchz

HDFS Command to create a file in HDFS with file size 0 bytes.

Usage: hdfs dfs -touchz /directory/filename

Command: hdfs dfs -touchz /new_edureka/sample

Note: Here we are trying to create a file named “sample” in the directory “new_edureka” of HDFS with file size 0 bytes.

du

HDFS Command to check the file size.

Usage: hdfs dfs -du -s /directory/filename

Command: hdfs dfs -du -s /new_edureka/sample
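
To use the size in a script, you can pick the first column of the du output, which is the size in bytes. The helper below is a hypothetical sketch. It assumes the size is always the first field, which should hold whether or not your Hadoop version prints an extra column for the disk space consumed by all replicas.

```shell
# Hypothetical helper: print the size in bytes of an HDFS file or directory.
# Assumes the first field of "hdfs dfs -du -s" output is the size in bytes.
hdfs_size_bytes() {
  hdfs dfs -du -s "$1" | awk '{ print $1 }'
}
```

For the empty file created with touchz above, hdfs_size_bytes /new_edureka/sample should print 0. You can also add -h to du for human-readable sizes.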

cat

HDFS Command that reads a file on HDFS and prints the content of that file to the standard output.

Usage: hdfs dfs -cat /path/to/file_in_hdfs

Command: hdfs dfs -cat /new_edureka/test
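
Since cat writes to standard output, you can pipe it into ordinary shell tools. As a small sketch, the hypothetical helper below shows only the first few lines of a file, which is handy for large files.

```shell
# Hypothetical helper: print the first N lines (default 10) of an HDFS file
# by piping "hdfs dfs -cat" into the local head command.
hdfs_head() {
  hdfs dfs -cat "$1" | head -n "${2:-10}"
}
```

For example: hdfs_head /new_edureka/test 5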

text

HDFS Command that takes a source file and outputs the file in text format.

Usage: hdfs dfs -text /directory/filename

Command: hdfs dfs -text /new_edureka/test

copyFromLocal

HDFS Command to copy the file from a Local file system to HDFS.

Usage: hdfs dfs -copyFromLocal <localsrc> <hdfs destination>

Command: hdfs dfs -copyFromLocal /home/edureka/test /new_edureka

Note: Here test is a file present in the local directory /home/edureka. After the command is executed, the test file will be copied to the /new_edureka directory of HDFS.

copyToLocal

HDFS Command to copy the file from HDFS to Local File System.

Usage: hdfs dfs -copyToLocal <hdfs source> <localdst>

Command: hdfs dfs -copyToLocal /new_edureka/test /home/edureka

Note: Here test is a file present in the new_edureka directory of HDFS. After the command is executed, the test file will be copied to the local directory /home/edureka.
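
To see copyFromLocal and copyToLocal working together, here is a rough sketch that uploads a local file, downloads it again into a temporary directory, and compares the two copies. The function name hdfs_roundtrip and its variable names are hypothetical, and the sketch assumes the HDFS directory already exists and does not yet contain a file with the same name.

```shell
# Hypothetical helper: upload a local file, download it again, and compare.
# Usage: hdfs_roundtrip <local_file> <existing_hdfs_dir>
hdfs_roundtrip() {
  rt_src="$1"
  rt_dir="$2"
  rt_name="$(basename "$rt_src")"
  rt_tmp="$(mktemp -d)" || return 1

  # Upload, download into the temporary directory, then compare byte by byte.
  if hdfs dfs -copyFromLocal "$rt_src" "$rt_dir" &&
     hdfs dfs -copyToLocal "$rt_dir/$rt_name" "$rt_tmp" &&
     cmp -s "$rt_src" "$rt_tmp/$rt_name"; then
    rt_rc=0
  else
    rt_rc=1
  fi

  rm -rf "$rt_tmp"
  return "$rt_rc"
}
```

For example: hdfs_roundtrip /home/edureka/test /new_edureka && echo "copies match"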

put

HDFS Command to copy single source or multiple sources from local file system to the destination file system.

Usage: hdfs dfs -put <localsrc> <destination>

Command: hdfs dfs -put /home/edureka/test /user

Note: The command copyFromLocal is similar to put command, except that the source is restricted to a local file reference.
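
One more thing put can do is read from standard input when the source is given as "-". As a minimal sketch, the hypothetical helper below writes a line of text straight into an HDFS file without creating a local file first.

```shell
# Hypothetical helper: write one line of text to an HDFS file.
# Uses "-" as the source so that put reads from standard input.
# Usage: hdfs_write_text <hdfs_path> <text>
hdfs_write_text() {
  printf '%s\n' "$2" | hdfs dfs -put - "$1"
}
```

For example: hdfs_write_text /new_edureka/hello.txt "hello" (the file name is only an illustration).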

get

HDFS Command to copy files from HDFS to the local file system.

Usage: hdfs dfs -get <src> <localdst>

Command: hdfs dfs -get /user/test /home/edureka

Note: The command copyToLocal is similar to get command, except that the destination is restricted to a local file reference.

count

HDFS Command to count the number of directories, files, and bytes under the paths that match the specified file pattern.

Usage: hdfs dfs -count <path>

Command: hdfs dfs -count /user
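
The output of count has four columns: directory count, file count, content size in bytes, and the path name. As a small sketch, the hypothetical helper below labels those columns so the result is easier to read. It assumes the default output, without extra options such as -q.

```shell
# Hypothetical helper: label the columns of "hdfs dfs -count" output.
# Assumes the default four columns: DIR_COUNT FILE_COUNT CONTENT_SIZE PATHNAME.
hdfs_count_summary() {
  hdfs dfs -count "$1" |
    awk '{ printf "dirs=%s files=%s bytes=%s path=%s\n", $1, $2, $3, $4 }'
}
```

For example: hdfs_count_summary /user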

rm

HDFS Command to remove the file from HDFS.

Usage: hdfs dfs -rm <path>

Command: hdfs dfs -rm /new_edureka/test

rm -r

HDFS Command to remove the entire directory and all of its content from HDFS.

Usage: hdfs dfs -rm -r <path>

Command: hdfs dfs -rm -r /new_edureka
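
Because rm -r deletes everything under the path, I like to put a small guard around it in scripts. The sketch below is one hypothetical way to do that: it refuses an empty path or the root directory before calling the real command.

```shell
# Hypothetical helper: recursive delete with a basic safety check.
# Refuses to run when the path is empty or is the HDFS root.
hdfs_safe_rm_r() {
  case "$1" in
    ''|/)
      echo "refusing to remove '$1'" >&2
      return 1
      ;;
  esac
  hdfs dfs -rm -r "$1"
}
```

For example: hdfs_safe_rm_r /new_edureka. If trash is enabled on your cluster, deleted files are moved to the trash first; rm also accepts -skipTrash to delete them immediately.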

cp

HDFS Command to copy files from source to destination. This command allows multiple sources as well, in which case the destination must be a directory.

Usage: hdfs dfs -cp <src> <dest>

Command: hdfs dfs -cp /user/hadoop/file1 /user/hadoop/file2

Command: hdfs dfs -cp /user/hadoop/file1 /user/hadoop/file2 /user/hadoop/dir

mv

HDFS Command to move files from source to destination. This command allows multiple sources as well, in which case the destination needs to be a directory.

Usage: hdfs dfs -mv <src> <dest>

Command: hdfs dfs -mv /user/hadoop/file1 /user/hadoop/file2

expunge

HDFS Command to empty the trash.

Command: hdfs dfs -expunge

rmdir

HDFS Command to remove a directory. The directory must be empty; to remove a directory that still has content, use rm -r.

Usage: hdfs dfs -rmdir <path>

Command: hdfs dfs -rmdir /user/hadoop

usage

HDFS Command that returns the help for an individual command.

Usage: hdfs dfs -usage <command>

Command: hdfs dfs -usage mkdir

Note: By using the usage command, you can get information about any command.

help

HDFS Command that displays help for the given command, or for all commands if none is specified.

Command: hdfs dfs -help

This is the end of the HDFS Commands blog. I hope it was informative and that you were able to execute all the commands. For more HDFS commands, you may refer to the Apache Hadoop documentation.

Now that you have executed the above HDFS commands, check out the Hadoop training by Edureka, a trusted online learning company with a network of more than 250,000 satisfied learners spread across the globe. Edureka’s Big Data Masters Course helps learners become experts in HDFS, Yarn, MapReduce, Pig, Hive, HBase, Oozie, Flume, and Sqoop using real-time use cases from the Retail, Social Media, Aviation, Tourism, and Finance domains.

Got a question for us? Please mention it in the comments section and we will get back to you.

Hi Team,

Can you please share more real-world commands, with examples, that are used by admins and developers?

Under the cat command header, the explanation is given for copyFromLocal command. Please correct the same

Hey Shweta, thanks for checking out our blog.

You are right. We have corrected it. Cheers! :)

This blog was very useful. I was getting confused with local path and hdfs path. This blog gave me confidence over hdfs commands. Thank you

Hey Kuldeep, thanks for checking out our blog. We’re glad you found it useful.

You might also like our YouTube tutorials; check them out here: https://www.youtube.com/edurekaIN

Do subscribe to stay posted on upcoming blogs. Cheers!