Security is a major concern whenever confidential data is involved. Hadoop, despite its strength in data handling, faces the same issue: it did not originally have a dedicated security layer of its own. Let us understand how this issue was solved through this Hadoop Security article.


Why do we need Hadoop Security?

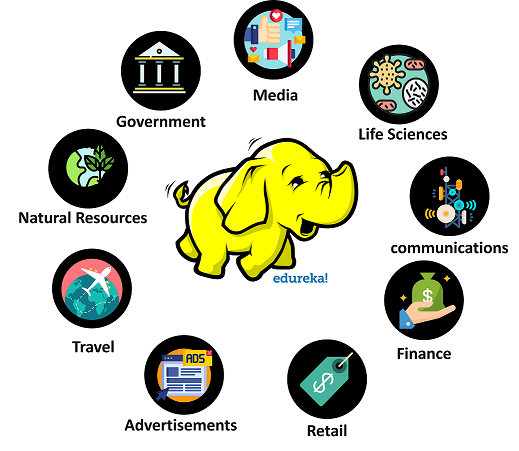

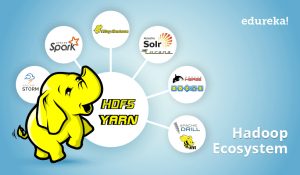

Apache Hadoop is a powerful, robust and highly scalable big data processing framework, capable of crunching petabytes of data. Because of these capabilities, organizations across business, healthcare, military and finance began adopting Hadoop.

As Hadoop gained popularity, its developers recognized a major gap: Hadoop had been built without a dedicated security layer. This affected many areas where Hadoop was in use, including:

Multiple business sectors

National Security

Health and Medical Departments

Social Media

Military

These are among the major users of Hadoop, and all of them handle sensitive data. Security was therefore the next major step Hadoop needed to take.

What is Hadoop Security?

Hadoop Security refers to the set of measures used to protect a Hadoop cluster and the data it stores against unauthorized access and other cyber threats. Hadoop achieves this by following the three-stage security protocol below.

Authentication

Authentication is the first stage, in which the user's credentials are verified. The credentials typically consist of the user's username and a secret password. The entered credentials are checked against the details stored in the security database, and if they are valid, the user is authenticated.
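To make the verification step concrete, here is a minimal Python sketch of checking credentials against a stored record. It assumes the security database keeps a salted PBKDF2 hash instead of the password itself; the `CredentialStore` class and the iteration count are illustrative assumptions, not Hadoop's implementation. In a Kerberized Hadoop cluster, this check is performed by the Kerberos KDC rather than by Hadoop itself.

```python
import hashlib
import hmac
import os

ITERATIONS = 100_000  # illustrative work factor (assumption)


def hash_password(password: str, salt: bytes) -> bytes:
    """Derive a hash from the password with PBKDF2-HMAC-SHA256."""
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)


class CredentialStore:
    """Hypothetical security database: username -> (salt, password hash)."""

    def __init__(self) -> None:
        self._records: dict[str, tuple[bytes, bytes]] = {}

    def register(self, username: str, password: str) -> None:
        salt = os.urandom(16)
        self._records[username] = (salt, hash_password(password, salt))

    def authenticate(self, username: str, password: str) -> bool:
        record = self._records.get(username)
        if record is None:
            return False  # unknown user
        salt, stored = record
        # Constant-time comparison avoids leaking how many bytes matched.
        return hmac.compare_digest(stored, hash_password(password, salt))


store = CredentialStore()
store.register("alice", "s3cret")
print(store.authenticate("alice", "s3cret"))  # True
print(store.authenticate("alice", "wrong"))   # False
```

Storing only a salted hash means that even someone who reads the database cannot directly recover the passwords.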

Authorization

Authorization is the second stage, in which the system decides whether the user is allowed to access the requested data. The decision is based on a predefined access control list (ACL), so confidential information stays secure and only authorized users can access it.
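An access control list can be pictured as a mapping from each resource and action to the users allowed to perform it. The Python sketch below assumes that simple model; the `AccessControlList` class, the resource path and the action names are hypothetical, and Hadoop's own mechanisms (such as HDFS ACLs) have their own formats.

```python
from dataclasses import dataclass, field


@dataclass
class AccessControlList:
    """Hypothetical ACL: resource -> action -> set of allowed users."""

    entries: dict[str, dict[str, set[str]]] = field(default_factory=dict)

    def grant(self, resource: str, action: str, user: str) -> None:
        self.entries.setdefault(resource, {}).setdefault(action, set()).add(user)

    def is_authorized(self, user: str, resource: str, action: str) -> bool:
        # Deny by default: anything not explicitly granted is refused.
        return user in self.entries.get(resource, {}).get(action, set())


acl = AccessControlList()
acl.grant("/finance/payroll", "read", "alice")

print(acl.is_authorized("alice", "/finance/payroll", "read"))   # True
print(acl.is_authorized("alice", "/finance/payroll", "write"))  # False
print(acl.is_authorized("bob", "/finance/payroll", "read"))     # False
```

The deny-by-default rule is the key design choice: a user only gets access that someone has explicitly granted.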

Auditing

Auditing is the last stage. It keeps track of the operations performed by an authenticated user while they are logged in to the cluster, so that this activity can be reviewed for security purposes.
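As a rough illustration of what auditing records, the Python sketch below keeps an append-only list of operations, including denied attempts. The `AuditLog` class and its field names are hypothetical; HDFS writes its own audit log, in its own format, through its logging configuration.

```python
import json
from datetime import datetime, timezone


class AuditLog:
    """Hypothetical append-only audit trail of user operations."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def record(self, user: str, command: str, path: str, allowed: bool) -> None:
        # Record every attempt, including denied ones, with a UTC timestamp.
        self._entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "cmd": command,
            "src": path,
            "allowed": allowed,
        })

    def by_user(self, user: str) -> list[dict]:
        return [e for e in self._entries if e["user"] == user]

    def dump(self) -> str:
        # One JSON object per line, a common format for shipping logs.
        return "\n".join(json.dumps(e) for e in self._entries)


log = AuditLog()
log.record("alice", "open", "/finance/payroll", allowed=True)
log.record("bob", "delete", "/finance/payroll", allowed=False)
print(len(log.by_user("alice")))  # 1
print(log.dump())
```

Logging denied attempts as well as successful ones is what makes the trail useful when investigating suspicious activity.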

Types of Hadoop Security

Kerberos Security

Kerberos is a leading network authentication protocol that provides strong authentication for both clients and servers using secret-key cryptography. It is considered highly secure because passwords are not sent over the network; instead, it relies on encrypted, time-limited tickets throughout the session.

HDFS Encryption

HDFS encryption is one of the most significant security features Hadoop has adopted. Data is encrypted by the client before it is written to HDFS and decrypted only by the client when it is read, so it stays encrypted all the way from source to destination. This does not require any changes to existing Hadoop applications, and only clients with access to the encryption keys can read the data.

Traffic Encryption


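Traffic encryption protects data while it travels over the network. For web traffic, it is provided by HTTPS (HyperText Transfer Protocol Secure), which encrypts the data sent to and received from a website using TLS and the server's security certificate. Many online banking gateways use this method to secure transactions. Hadoop can be configured to use HTTPS for its web interfaces and can also encrypt its internal RPC and data-transfer traffic.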
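On the client side, HTTPS comes down to a TLS context that verifies the server's certificate and host name. The sketch below uses Python's standard `ssl` module; it is a generic HTTPS client setup, not Hadoop-specific code, and the TLS 1.2 minimum is an illustrative policy choice.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a TLS client context suitable for HTTPS connections."""
    # create_default_context() loads the system's trusted CA certificates
    # and turns on certificate and host name verification.
    context = ssl.create_default_context()
    # Refuse legacy protocol versions (illustrative policy).
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


ctx = make_client_context()
print(ctx.verify_mode == ssl.CERT_REQUIRED)  # True
print(ctx.check_hostname)                    # True
```

To use it, pass it as `context=ctx` to `urllib.request.urlopen`, or wrap a socket with `ctx.wrap_socket(sock, server_hostname=host)`; the host name of any Hadoop web endpoint is specific to your deployment.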

HDFS File and Directory Permissions

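HDFS file and directory permissions follow a model similar to POSIX. Each file and directory has an owner and a group, and separate read (r), write (w) and execute (x) permissions are set for the owner, the group and all other users. For files, r is needed to read the file and w to write or append to it; for directories, r is needed to list the contents, w to create or delete entries, and x to access a child. Permission checks never fail for the HDFS superuser, so access to confidential files is controlled by how the owner, group and other permissions are set for ordinary users.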
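The POSIX-style check can be sketched in a few lines of Python: exactly one permission class applies (owner first, then group, then others), and the superuser bypasses the check. The `Inode` class, the `check` function and the default superuser name `hdfs` are assumptions made for illustration, not HDFS's actual code.

```python
from dataclasses import dataclass

READ, WRITE, EXECUTE = 4, 2, 1  # permission bits, as in r, w, x


@dataclass
class Inode:
    owner: str
    group: str
    mode: int  # e.g. 0o750 -> owner rwx, group r-x, others ---


def mode_string(mode: int) -> str:
    """Render a mode such as 0o750 as 'rwxr-x---'."""
    parts = []
    for shift in (6, 3, 0):  # owner, group, others
        bits = (mode >> shift) & 0o7
        parts.append(("r" if bits & READ else "-")
                     + ("w" if bits & WRITE else "-")
                     + ("x" if bits & EXECUTE else "-"))
    return "".join(parts)


def check(inode: Inode, user: str, groups: set[str], want: int,
          superuser: str = "hdfs") -> bool:
    """Return True if `user` may perform `want` (a bitmask) on `inode`."""
    if user == superuser:
        return True  # checks never fail for the superuser
    if user == inode.owner:
        bits = (inode.mode >> 6) & 0o7
    elif inode.group in groups:
        bits = (inode.mode >> 3) & 0o7
    else:
        bits = inode.mode & 0o7
    return (bits & want) == want


payroll = Inode(owner="alice", group="finance", mode=0o640)
print(mode_string(payroll.mode))                         # rw-r-----
print(check(payroll, "alice", {"staff"}, READ | WRITE))  # True
print(check(payroll, "bob", {"finance"}, WRITE))         # False
print(check(payroll, "carol", {"staff"}, READ))          # False
```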

Kerberos

Kerberos is the network authentication protocol that Hadoop uses for its data and network security. It was developed at MIT. The main objective of Kerberos is to eliminate the need to send passwords over the network and to protect the network from eavesdropping (sniffing).

To understand Kerberos terminology, we first need to learn about the components involved in Kerberos.

The KDC (Key Distribution Center) is the heart of Kerberos. It consists of three main components:

Database

The database stores each user's (principal's) name together with a secret key derived from their password, rather than the password itself. It also stores additional information such as principal attributes, encryption key types and ticket validity periods.

Authentication Server

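The Authentication Server (AS) verifies the user's credentials. If they are valid, it issues a TGT (Ticket Granting Ticket). The password itself is never sent over the network: the AS encrypts its reply with a key derived from the user's password, so only a user who knows the correct password can use the TGT.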

Ticket Granting Server

The next component is the TGS (Ticket Granting Server). It is a service within the KDC that accepts a valid TGT and issues a service ticket in return. The user needs a service ticket to interact with a Hadoop service, such as the NameNode, and perform operations on it.
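The AS and TGS exchanges can be simulated in Python to show who can open which message. This is a toy model under loudly stated assumptions: `seal`/`unseal` are a home-made keystream-plus-HMAC construction standing in for Kerberos encryption (not secure, not the real algorithms), PBKDF2 stands in for Kerberos's string-to-key function, and the principal names, lifetime and 300-second clock-skew limit are illustrative. The `krbtgt` key plays the role of the TGS's own secret key.

```python
import hashlib
import hmac
import json
import os
import time

# ---- Toy "sealing" helpers: NOT real Kerberos cryptography. ----


def _stream(key: bytes, nonce: bytes, n: int) -> bytes:
    """Toy keystream: HMAC-SHA256 over a counter."""
    blocks = (hmac.new(key, nonce + i.to_bytes(4, "big"), hashlib.sha256).digest()
              for i in range(n // 32 + 1))
    return b"".join(blocks)[:n]


def seal(key: bytes, data: dict) -> bytes:
    """Encrypt and authenticate a dict so only holders of `key` can read it."""
    nonce = os.urandom(16)
    plain = json.dumps(data).encode()
    body = bytes(p ^ s for p, s in zip(plain, _stream(key, nonce, len(plain))))
    tag = hmac.new(key, nonce + body, hashlib.sha256).digest()
    return nonce + tag + body


def unseal(key: bytes, blob: bytes) -> dict:
    """Reverse seal(); fails if the key is wrong or the blob was modified."""
    nonce, tag, body = blob[:16], blob[16:48], blob[48:]
    expected = hmac.new(key, nonce + body, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("wrong key or tampered message")
    return json.loads(bytes(b ^ s for b, s in zip(body, _stream(key, nonce, len(body)))))


def user_key(password: str, principal: str) -> bytes:
    """Stand-in for string-to-key: derive a user's key from the password."""
    return hashlib.pbkdf2_hmac("sha256", password.encode(), principal.encode(), 10_000)


# Hypothetical KDC database: principal -> long-term secret key.
KDC_DB = {
    "alice": user_key("s3cret", "alice"),
    "krbtgt": os.urandom(32),       # the TGS's own key, never given to users
    "nn/namenode": os.urandom(32),  # key shared by the KDC and the NameNode
}
TICKET_LIFETIME = 8 * 3600  # seconds (illustrative)
MAX_SKEW = 300              # allowed clock skew in seconds (assumed)


def authentication_server(user: str) -> tuple[bytes, bytes]:
    """AS: return a TGT plus a reply sealed with the user's own key."""
    session = os.urandom(32).hex()
    expires = time.time() + TICKET_LIFETIME
    tgt = seal(KDC_DB["krbtgt"], {"user": user, "session": session, "expires": expires})
    reply = seal(KDC_DB[user], {"session": session, "expires": expires})
    return tgt, reply


def ticket_granting_server(tgt: bytes, authenticator: bytes, service: str) -> tuple[bytes, bytes]:
    """TGS: check the TGT and authenticator, then issue a service ticket."""
    contents = unseal(KDC_DB["krbtgt"], tgt)
    session_key = bytes.fromhex(contents["session"])
    auth = unseal(session_key, authenticator)
    if contents["expires"] < time.time():
        raise PermissionError("TGT expired")
    if auth["user"] != contents["user"] or abs(time.time() - auth["time"]) > MAX_SKEW:
        raise PermissionError("invalid authenticator")
    svc_session = os.urandom(32).hex()
    ticket = seal(KDC_DB[service], {"user": contents["user"], "session": svc_session,
                                    "expires": contents["expires"]})
    return ticket, seal(session_key, {"session": svc_session})


# Client side: only the right password opens the AS reply.
tgt, as_reply = authentication_server("alice")
tgs_key = bytes.fromhex(unseal(user_key("s3cret", "alice"), as_reply)["session"])
authenticator = seal(tgs_key, {"user": "alice", "time": time.time()})
service_ticket, tgs_reply = ticket_granting_server(tgt, authenticator, "nn/namenode")
nn_session = unseal(tgs_key, tgs_reply)["session"]

# Only the NameNode's key opens the service ticket.
print(unseal(KDC_DB["nn/namenode"], service_ticket)["user"])  # alice
```

Calling `unseal(user_key("wrong", "alice"), as_reply)` raises `ValueError`, which is this sketch's counterpart of a failed login: a wrong password yields a wrong key, so the session key inside the reply stays unreadable.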

On Debian or Ubuntu, you can install the Kerberos KDC and admin server with the following command:

sudo apt-get install krb5-kdc krb5-admin-server

Now, suppose you want to access a Kerberos-secured Hadoop cluster. You need to go through the stages described below.

First, you need to authenticate yourself to the cluster. You can do this by running the kinit command on the Hadoop cluster:

kinit root/admin

kinit then prompts you for the password of the principal you specified (root/admin in this example).

kinit sends an authentication request to the Authentication Server.

If your credentials are valid, the Authentication Server responds with a Ticket Granting Ticket (TGT).

kinit stores the TGT in your credentials cache. The following command lists the credentials in the cache:


klist

You are now successfully authenticated with the KDC.
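The credentials cache that kinit fills can be pictured as a small store of tickets keyed by service, each with an expiry time. The Python sketch below is an in-memory model under that assumption; the `CachedTicket` and `CredentialsCache` names are hypothetical, and real Kerberos caches are managed by the Kerberos libraries, typically in a file or kernel keyring. The same idea applies later, when the Hadoop client caches its service ticket.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass
class CachedTicket:
    service: str    # principal the ticket is for, e.g. the TGS ("krbtgt")
    ticket: bytes   # opaque ticket blob issued by the KDC
    expires: float  # Unix timestamp after which the ticket is useless


class CredentialsCache:
    """Hypothetical in-memory credentials cache for one principal."""

    def __init__(self, principal: str) -> None:
        self.principal = principal
        self._tickets: dict[str, CachedTicket] = {}

    def store(self, entry: CachedTicket) -> None:
        # A newer ticket for the same service replaces the old one.
        self._tickets[entry.service] = entry

    def lookup(self, service: str, now: Optional[float] = None) -> Optional[CachedTicket]:
        """Return a still-valid ticket for `service`, or None."""
        now = time.time() if now is None else now
        entry = self._tickets.get(service)
        if entry is None or entry.expires <= now:
            return None
        return entry

    def valid_services(self, now: Optional[float] = None) -> list[str]:
        """Simplified listing of services that still hold a valid ticket."""
        now = time.time() if now is None else now
        return sorted(s for s, e in self._tickets.items() if e.expires > now)


cache = CredentialsCache("alice")
now = 1_000_000.0
cache.store(CachedTicket("krbtgt", b"<tgt>", expires=now + 8 * 3600))
cache.store(CachedTicket("nn/namenode", b"<ticket>", expires=now - 1))
print(cache.valid_services(now))         # ['krbtgt']
print(cache.lookup("nn/namenode", now))  # None
```

A `None` from `lookup` is the signal to go back to the TGS (or to run kinit again, if the TGT itself has expired).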

Before you access the Hadoop cluster, you need to set up the Kerberos client packages. To do so, use the following command:

sudo apt-get install krb5-user libpam-krb5 libpam-ccreds auth-client-config

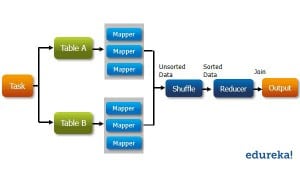

Now, run a Hadoop command, that is, use a Hadoop client.

The Hadoop client uses your TGT to request a service ticket from the TGS.

The TGS approves the request and issues a service ticket.

The Hadoop client caches this service ticket.

The Hadoop client then uses the service ticket to communicate with the Hadoop NameNode.

The NameNode, in turn, proves its own identity: only the genuine NameNode holds the key needed to open the service ticket, so only it can send back a valid response.

In this way, the client and the NameNode each verify the other.

As a result, both are sure that they are communicating with an authenticated party.

This is called mutual authentication.
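Mutual authentication can be simulated with the same kind of toy model. In the Python sketch below, the NameNode opens the service ticket with its own key, checks the client's authenticator, and then echoes the client's timestamp sealed with the session key, similar in spirit to Kerberos's reply message. The `seal`/`unseal` helpers are a home-made, insecure stand-in for Kerberos encryption, and `NN_KEY`, the principal names and the 300-second skew limit are assumptions; in a real cluster, this exchange is handled by the Kerberos libraries underneath Hadoop's RPC layer.

```python
import hashlib
import hmac
import json
import os
import time

# ---- Toy "sealing" helpers: NOT real Kerberos cryptography. ----


def _stream(key: bytes, nonce: bytes, n: int) -> bytes:
    blocks = (hmac.new(key, nonce + i.to_bytes(4, "big"), hashlib.sha256).digest()
              for i in range(n // 32 + 1))
    return b"".join(blocks)[:n]


def seal(key: bytes, data: dict) -> bytes:
    nonce = os.urandom(16)
    plain = json.dumps(data).encode()
    body = bytes(p ^ s for p, s in zip(plain, _stream(key, nonce, len(plain))))
    return nonce + hmac.new(key, nonce + body, hashlib.sha256).digest() + body


def unseal(key: bytes, blob: bytes) -> dict:
    nonce, tag, body = blob[:16], blob[16:48], blob[48:]
    if not hmac.compare_digest(tag, hmac.new(key, nonce + body, hashlib.sha256).digest()):
        raise ValueError("wrong key or tampered message")
    return json.loads(bytes(b ^ s for b, s in zip(body, _stream(key, nonce, len(body)))))


MAX_SKEW = 300           # allowed clock skew in seconds (assumed)
NN_KEY = os.urandom(32)  # long-term key shared by the KDC and the NameNode

# Assume the TGS has already issued this service ticket and session key.
session_key = os.urandom(32)
service_ticket = seal(NN_KEY, {"user": "alice", "session": session_key.hex()})


def namenode_accept(ticket: bytes, authenticator: bytes) -> tuple[str, bytes]:
    """NameNode side: verify the client, then prove its own identity."""
    contents = unseal(NN_KEY, ticket)  # only the genuine NameNode holds NN_KEY
    key = bytes.fromhex(contents["session"])
    auth = unseal(key, authenticator)  # client must know the session key
    if auth["user"] != contents["user"] or abs(time.time() - auth["time"]) > MAX_SKEW:
        raise PermissionError("client authentication failed")
    # Echo the client's timestamp, sealed with the session key.
    return contents["user"], seal(key, {"time": auth["time"]})


# Client side: send the ticket plus a fresh authenticator.
stamp = time.time()
authenticator = seal(session_key, {"user": "alice", "time": stamp})
user, reply = namenode_accept(service_ticket, authenticator)

# A correct echo proves the server could open the ticket.
server_verified = unseal(session_key, reply)["time"] == stamp
print(user, server_verified)  # alice True
```

An impostor without `NN_KEY` fails at the first `unseal`, so it can never produce a valid reply, while a client without the session key cannot build an acceptable authenticator.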

The next stage is authorization. The NameNode checks your permissions and allows only the operations you are authorized to perform.

Finally, the last stage is auditing. Here, your activity is logged for security purposes.

With this, we come to the end of this article. I hope it has shed some light on Hadoop Security.

Now that you have understood Hadoop and its security, check out the Hadoop training by Edureka, a trusted online learning company with a network of more than 250,000 satisfied learners spread across the globe. The Edureka Big Data Hadoop Certification Training course helps learners become experts in HDFS, YARN, MapReduce, Pig, Hive, HBase, Oozie, Flume and Sqoop using real-time use cases from the Retail, Social Media, Aviation, Tourism and Finance domains.

If you have any query related to this “Hadoop Security” article, then please write to us in the comment section below and we will respond to you as early as possible.