As Hadoop adoption grows in traditional enterprise IT and more Hadoop implementations reach production, the need for Hadoop operations and administration experts to look after large Hadoop clusters is becoming vital.

Hadoop Admin Responsibilities:

- Responsible for implementation and ongoing administration of Hadoop infrastructure.

- Aligning with the systems engineering team to propose and deploy new hardware and software environments required for Hadoop and to expand existing environments.

- Working with data delivery teams to set up new Hadoop users. This job includes setting up Linux users, setting up Kerberos principals and testing HDFS, Hive, Pig and MapReduce access for the new users.

- Cluster maintenance as well as creation and removal of nodes using tools like Ganglia, Nagios, Cloudera Manager Enterprise, Dell OpenManage and other tools.

- Performance tuning of Hadoop clusters and Hadoop MapReduce routines.

- Monitoring Hadoop cluster job performance and carrying out capacity planning.

- Monitoring Hadoop cluster connectivity and security.

- Manage and review Hadoop log files.

- File system management and monitoring.

- HDFS support and maintenance.

- Working closely with the infrastructure, network, database, application and business intelligence teams to guarantee high data quality and availability.

- Collaborating with application teams to install operating system and Hadoop updates, patches and version upgrades when required.

- Acting as the point of contact for vendor escalations.
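The user-onboarding responsibility above (Linux user, Kerberos principal, HDFS access test) can be sketched as a short checklist of commands. This is a minimal illustration, not a prescribed procedure: the user name `alice`, the realm `EXAMPLE.COM`, the group `hadoop` and the function name are all assumptions, and the function only builds the commands so they can be reviewed before anyone runs them.

```python
import shlex


def onboarding_commands(user, realm="EXAMPLE.COM", group="hadoop"):
    """Build (but do not run) the commands to onboard one new Hadoop user.

    The realm and group defaults are placeholders for illustration only.
    """
    home = f"/user/{user}"
    return [
        # 1. Linux account on the node the user will log in to.
        ["useradd", "-m", "-G", group, user],
        # 2. Kerberos principal; kadmin.local prompts for the password.
        ["kadmin.local", "-q", f"addprinc {user}@{realm}"],
        # 3. HDFS home directory, owned by the new user.
        ["hdfs", "dfs", "-mkdir", "-p", home],
        ["hdfs", "dfs", "-chown", f"{user}:{group}", home],
        # 4. Access check, meant to be run as the new user after kinit.
        ["hdfs", "dfs", "-ls", home],
    ]


if __name__ == "__main__":
    for cmd in onboarding_commands("alice"):
        print(shlex.join(cmd))
```

Printing the commands first keeps the sketch safe to try on any machine; on a real cluster the same list could be handed to a configuration management tool or run step by step by the administrator.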

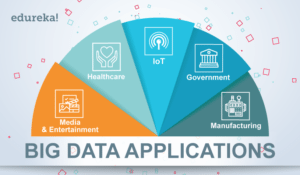

Hadoop Administration is a rewarding and lucrative career with plenty of growth opportunities. If the job responsibilities listed above interest you, then it’s time to up-skill with Hadoop Administration and get on the Hadoop Admin career path. Learn more about Big Data and its applications from the Azure Data Engineer Associate.

DBA Responsibilities Performed by a Hadoop Administrator:

- Data modelling, design & implementation based on recognized standards.

- Software installation and configuration.

- Database backup and recovery.

- Database connectivity and security.

- Performance monitoring and tuning.

- Disk space management.

- Software patches and upgrades.

- Automate manual tasks.
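Disk space management on a cluster is largely arithmetic, so a rough estimate is easy to script. The sketch below assumes the HDFS default replication factor of 3 and a 25% headroom for temporary and intermediate data; the headroom figure and the function name are illustrative assumptions, not recommendations from this post.

```python
def raw_capacity_needed(data_tb, replication=3, headroom=0.25):
    """Estimate raw disk (in TB) needed to hold `data_tb` of user data.

    replication: copies HDFS keeps of each block (3 is the HDFS default).
    headroom: fraction of raw disk kept free (assumed value, tune per cluster).
    """
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in the range [0, 1)")
    return data_tb * replication / (1 - headroom)


if __name__ == "__main__":
    # 100 TB of data, 3 replicas, 25% of raw disk kept free.
    print(raw_capacity_needed(100))  # 400.0
```

The same function can be rerun with projected data growth to see when new nodes will be needed.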

You can even check out the details of Big Data with the Azure Data Engineering Training in Mumbai.

DWH Development Responsibilities Performed by a Hadoop Administrator:

A DWH admin's job responsibilities include developing, testing and monitoring batch jobs for the following tasks:

- Ensure referential integrity.

- Perform primary key execution.

- Accomplish data restatements.

- Load large data volumes in a timely manner.
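As an illustration of the first task, a referential integrity check comes down to finding rows whose foreign key has no matching key in the table it refers to. The sketch below works on plain Python dictionaries; the table layout, field names and function name are hypothetical, and a real batch job would run the same logic against Hive or a warehouse table.

```python
def find_orphans(fact_rows, dimension_keys, fk_field):
    """Return the fact rows whose foreign key is missing from the dimension keys."""
    known = set(dimension_keys)
    return [row for row in fact_rows if row[fk_field] not in known]


if __name__ == "__main__":
    customers = [1, 2, 3]  # primary keys of a hypothetical customer table
    orders = [
        {"order_id": 10, "customer_id": 1},
        {"order_id": 11, "customer_id": 4},  # no such customer
    ]
    print(find_orphans(orders, customers, "customer_id"))
```

A batch job could fail or raise an alert whenever the returned list is not empty.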

Now that you know about the job responsibilities of a Hadoop administrator, check out the Hadoop Admin Training in Hyderabad.

Skills Required to become a Hadoop Administrator:

- General operational expertise such as good troubleshooting skills, an understanding of system capacity and bottlenecks, and the basics of memory, CPU, OS, storage and networks.

- Hadoop skills like HBase, Hive, Pig, Mahout, etc.

- Most essential of all is the ability to deploy a Hadoop cluster, add and remove nodes, keep track of jobs, monitor critical parts of the cluster, configure NameNode high availability, handle scheduling and configuration, and take backups.

- Good knowledge of Linux as Hadoop runs on Linux.

- Familiarity with open source configuration management and deployment tools such as Puppet or Chef and Linux scripting.

- Knowledge of troubleshooting core Java applications is a plus.
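Much of the troubleshooting and monitoring named in this list starts with reading log files. As a small sketch of the scripting involved, the function below counts log lines per severity level. It assumes lines begin with a timestamp followed by the level, as in the common log4j layout `2015-03-01 12:00:00,123 ERROR ...`; the sample lines are invented for illustration, and a cluster with a customised log pattern would need a different regular expression.

```python
import re
from collections import Counter

# Timestamp such as "2015-03-01 12:00:00,123" followed by the log level.
LOG_LINE = re.compile(
    r"^\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2},\d{3} (?P<level>[A-Z]+) "
)


def count_levels(lines):
    """Count log lines per level; lines that do not match are skipped."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match:
            counts[match.group("level")] += 1
    return counts


if __name__ == "__main__":
    sample = [  # invented lines, not real cluster output
        "2015-03-01 12:00:00,123 INFO example.Service: started",
        "2015-03-01 12:00:05,456 ERROR example.Service: disk failure",
        "    at example.Service.run(Service.java:42)",
    ]
    print(count_levels(sample))
```

Continuation lines such as stack traces do not match the pattern, so each error is counted once.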

Edureka has specially curated a course on Hadoop Administration. In this Big Data Hadoop Course, designed by a Big Data professional, you will get real-time project experience with Hadoop tools, commands and concepts, and learn how to become a Hadoop Administrator.

I am an Oracle database admin, so is switching to Hadoop admin a good choice?

Hi, I am Madhan. I have around 4 years of experience in Java, but I am interested in learning Hadoop Admin and switching to it. Can you please suggest which has better scope, Hadoop development or Hadoop Admin? I am planning to go for Hadoop Admin. If I learn Hadoop Admin, will I get calls?

I don't have any experience.

But I would love to take up Hadoop.

Which one should I take, the admin side or the developer side?

Waiting for your reply.

Hi,

I have a non-IT background and want to learn Hadoop Administration or become an Analyst. Can a non-technical person learn it and get a job as a Hadoop Administrator or Analyst? Please help me understand this. Any quick response would be appreciated.

Hi Prateek,

Thank you for reaching out to us.

The current job scenario has witnessed several experienced professionals switching to Big Data/Hadoop technologies in order to advance in their careers. Adding Hadoop as a skill will greatly benefit you in progressing in your career.

The prerequisite for learning Hadoop Administration is basic knowledge of Linux, as Hadoop runs on Linux. If you already know Linux, learning Hadoop Administration should be fairly easy for you. Edureka also provides a complimentary self-paced course called ‘Linux Fundamentals’, which will help you gain the necessary Linux knowledge before joining the Hadoop Admin sessions.

You can check out this link for more details about the course: https://www.edureka.co/hadoop-administration-training-certification

We recommend that you get in touch with our sales team for further clarification on +91-8880862004 (India) or 1800 275 9730 (US toll free). You can also mail us at sales@edureka.co.

Hi,

I’m in software testing, working as a Test Manager with about 10 years of experience. I would like to explore Hadoop Administration as a career change. What is your take on it, and what do you suggest?

I have a fair idea of Java and Unix/Linux.

Thanks,

Andre

Hi Andre, professionals from various backgrounds are taking up Hadoop, as it is one of the most in-demand skills. The prerequisite for learning Hadoop Administration is basic knowledge of Linux, as Hadoop runs on Linux. Since you already know Linux, learning Hadoop Administration should be fairly easy for you. You can check out this link for more details about the course: https://www.edureka.co/hadoop-administration-training-certification

In case of any clarifications feel free to call us at US: 1800 275 9730 (Toll Free) or India: +91 8880862004

Hi, I have 7 years of experience in Unix administration (Red Hat Linux and Solaris). I am thinking of moving to Hadoop. Can you tell me whether this is the right choice and, if so, which certification I need to complete to become a Hadoop Administrator?

Hi Srikanth, moving from an admin background to Hadoop Administration is a natural progression. You can take up the ‘Hadoop Administration’ course. Check out this link to know more about this course: https://www.edureka.co/hadoop-administration-training-certification

Here’s a post on Hadoop Admin job responsibilities that you might find interesting:

https://www.edureka.co/blog/hadoop-admin-responsibilities/

In case of any clarifications, you can call us at US: 1800 275 9730 (Toll Free) or India: +91 88808 62004.

I am working as a storage administrator and I would like to move to the Hadoop platform. Which course would be best, Admin or Development? What is the nature of work in both profiles, and how are the job openings for Hadoop in the industry?

Hi Lalit, based on your experience I would suggest you go for the ‘Hadoop Administration’ course. The prerequisite for this course is good knowledge of Linux. You can check out these posts to learn about the daily responsibilities of a Hadoop Developer and Administrator:

https://www.edureka.co/blog/hadoop-admin-responsibilities/

https://www.edureka.co/blog/hadoop-developer-job-responsibilities-skills/

As far as jobs are concerned, what we are seeing now is just a start, and there is a lot of potential for professionals who are early movers in the Big Data space. Hadoop is a relatively new field, and experienced professionals like you are adding Hadoop to their skills for better career opportunities.

Please refer to this link for more information: https://www.edureka.co/hadoop-administration-training-certification

You can call us at US: 1800 275 9730 (Toll Free) or India: +91 88808 62004 for further clarifications.

Hi, I have 2 years of Java experience. Should I go for Hadoop developer or Hadoop administrator?

Hi Team,

I am working on the VMware vSphere platform and I would like to move to the Hadoop platform. How can I proceed, and which course suits my skills? How are the job openings for Hadoop in the industry, and how will it help my career?

Thanks,

Sreeram.

Hi Sreeram, a lot of deployments are happening over the cloud, so your exposure to related technologies will be a good mix with the Hadoop Administration course. A lot of professionals are taking the Hadoop Administration course, as it is a natural progression. Do not worry, there is no prerequisite of any programming language, although exposure to basic Linux commands will be beneficial. Please visit this link for more information: https://www.edureka.co/hadoop-administration-training-certification You can also call us at US: 1800 275 9730 (Toll Free) or India: +91 88808 62004 to discuss in detail.

What are a Hadoop developer's responsibilities, and what skills are required for a Hadoop developer?

thanks

hareesh

Hi Hareesh, the following are some of the daily responsibilities of a Hadoop Developer: model development (build, test and validate the model); measuring technical environment setup time, data preparation time, and model build, test and validation times; troubleshooting and tuning Hadoop clusters; and planning and executing system upgrades for existing Hadoop clusters, etc.

Thank you :)