A very common question for anyone starting to learn Hadoop is: “Do I need Java to learn Hadoop?” This blog will help clear up your doubts.

Do You Need Java to Learn Hadoop?

A simple answer to this question is – NO, knowledge of Java is not mandatory to learn Hadoop.

You might be aware that Hadoop is written in Java. Even so, the Hadoop ecosystem is designed to cater to professionals coming from different backgrounds.

For professionals from a non-programming background, the Hadoop ecosystem provides various tools that they can use to process Big Data stored in Hadoop. Online Big Data courses are also accessible to learners without Java knowledge.

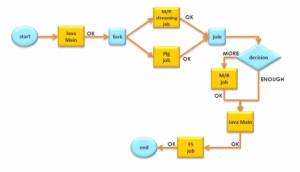

Two important Hadoop components show that you can work with Hadoop without working knowledge of Java: Pig and Hive.

Pig is a high-level data flow language and execution framework for parallel computation, while Hive is a data warehouse infrastructure that provides data summarization and ad-hoc querying. Pig is widely used by researchers and programmers, while Hive is a favorite among data analysts.

One interesting fact for you:

10 lines of Pig = approx. 200 lines of Java code. Check out this blog for a Pig demo.

So, without writing complex Java code, you can achieve the same results very easily using Pig. Similarly, because SQL was widely used by Facebook’s engineers and analysts, Facebook developed Hive to provide SQL-like queries on top of Hadoop.

These languages are easy to learn, and more than 80% of Hadoop projects revolve around them.

How to Align Yourself with Hadoop Jobs

To explore job roles related to Hadoop without having Java as a prerequisite, you just need to orient yourself to two critical aspects of Hadoop: storage and processing. For a job around Hadoop storage, you can learn how a Hadoop cluster functions and how Hadoop keeps its data secure and stable. For this, knowing the various nuances of the Hadoop Distributed File System (HDFS) and HBase, Hadoop’s distributed NoSQL database, will help tremendously.

If you choose to work on the processing side of Hadoop, you have Pig and Hive at your disposal, which automatically convert your code in the backend into jobs for the Java-based MapReduce cluster programming model.
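To make the idea concrete, here is a minimal sketch in plain Python (not Hadoop code) of the map, shuffle, and reduce steps behind a simple word count. It only illustrates the kind of work that tools like Pig and Hive generate for you; the function names and the sample data are hypothetical and chosen for this example. In Hive, the same computation would conceptually be a single SQL-like query such as `SELECT word, COUNT(*) FROM words GROUP BY word`, where the `words` table is assumed.

```python
from collections import defaultdict


def map_phase(lines):
    """Emit a (word, 1) pair for every word, as a mapper would."""
    for line in lines:
        for word in line.lower().split():
            yield word, 1


def shuffle_phase(pairs):
    """Group all values by key, as the framework does between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Sum the values collected for each key, as a reducer would."""
    return {key: sum(values) for key, values in grouped.items()}


def word_count(lines):
    """Run the three phases in order on an in-memory list of lines."""
    return reduce_phase(shuffle_phase(map_phase(lines)))


if __name__ == "__main__":
    # Hypothetical sample input standing in for files stored in HDFS.
    sample = ["big data on hadoop", "hadoop stores big data"]
    print(word_count(sample))
```

On a real cluster, these phases run in parallel across many machines and are typically written in Java. With Pig or Hive, you describe the result you want and the tool produces the equivalent jobs.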

So, without writing MapReduce code yourself, you can still control the entire life cycle of your project. As long as you master Pig and Hive, along with HDFS and HBase, Java can take a backseat.

I hope this image proves my points.

Rare Requirements for Java Coding

However, Java coding is needed if you wish to add user-defined functions to Pig, Hive, and other tools, or to create custom input/output formats. Fortunately, this requirement is rare.

Another rare scenario where basic Java coding might be necessary is for debugging. In the rare event of a Hadoop program crashing, you might need to debug the program using Java.

Still not convinced that you can learn Hadoop without knowing Java? Watch the webinar below and learn how Hadoop is relevant for a person from a non-programming background!

Hi Vishnu,

I have a total of 13 years of experience as an MS SQL DBA. Could you please let me know how I can start a career in Hadoop?

Hey Rammohan, thanks for checking out our blog.

There are no prerequisites as such to learn Hadoop. Knowledge of core Java and SQL is beneficial for learning Hadoop, but it’s not mandatory. So your SQL skills will come in handy. Also, we provide you with a complimentary self-paced course on Java essentials for Hadoop when you enroll in our course, so you don’t need to worry. You can start off by enrolling in our course here: https://www.edureka.co/big-data-hadoop-training-certification. This course will teach you Hadoop from scratch. Hope this helps. Cheers!

Thanks for the one more informative article :-) Vishnu!

I have read your whole post and I would love to say that Hadoop course skills are in demand and I totally agree with this line – “Hadoop is on top of every CIO’s to-do list, today. This had led to a burgeoning growth in career opportunities around Hadoop”

But do the course from the right institute like – Koenig Solutions

hi Vishnu,

I am a commerce graduate and I don’t have any coding knowledge of Java or other languages, but I have to learn Hadoop.

Please guide me.

Thanking you,

Sanjay Bahgwat

sanjaybhagwat143@gmail.com

Hey Sanjay, thanks for checking out our blog.

There are no prerequisites as such to learn Hadoop. Knowledge of core Java and SQL is beneficial, but it’s not mandatory. Also, we provide you with a complimentary self-paced course on Java essentials for Hadoop when you enroll in our course, so you don’t need to worry.

We have forwarded your email address to our team and they will get in touch with you for more details very soon. Hope this helps. Cheers!

Hi Vishnu,

I have 5 years of experience as a Mainframe developer. I know SQL and PL/SQL. So can I learn Hadoop?

Please suggest which technology will be better for me to boost my growth in IT services.

Hey Eti, thanks for checking out our blog.

While knowledge of core Java and SQL is beneficial for learning Hadoop, it’s not mandatory. Also, we provide you with a complimentary self-paced course on Java essentials for Hadoop when you enroll in our course, so you don’t need to worry.

We have examples of our learners from Mainframe backgrounds going on to learn Hadoop and get into a Big Data career path. You can check out our course details here: https://www.edureka.co/big-data-hadoop-training-certification. Hope this helps. Cheers!

Hi vishnu,

I know Oracle SQL, PL/SQL, and Unix, but I have no knowledge of Java. So can I learn Hadoop?

Hey Purvi, thanks for checking out our blog. There are no prerequisites as such to learn Hadoop. Knowledge of core Java and SQL helps, but it’s not mandatory. In fact, we also provide a complimentary self-paced course on Java essentials for Hadoop when you enroll in our Hadoop certification course. You can check out more details here: https://www.edureka.co/big-data-hadoop-training-certification. Please feel free to reach out to us if you have any questions or doubts. Alternatively, you can reach out to us at +91 88808 62004 24X7. Hope this helps. Cheers!

Hi Vishnu,

This was a nice article.

I have been working as an Associate Web Developer for the past 2 years. I have no experience working in Java and I want to switch to Hadoop. How are the career opportunities for a fresher in this field?

Thanks in advance!

Hey Darshan, thanks for checking out our blog. We’re glad you liked it.

Core Java knowledge helps while learning Hadoop, but it’s not mandatory.

However, considering your background in web development, you can go for AngularJS 2 Certification Training to boost your career growth. You can check out more details here: https://www.edureka.co/angular-js. Please feel free to reach out to us if you have any questions or doubts. Alternatively, you can reach out to us at +91 88808 62004 24X7. Hope this helps. Cheers!

Hi Vishnu,

I am a .NET developer with around 10 years of experience. I am trying to pursue a career in Big Data/Hadoop, but I want to understand the technical difficulty (out of 10) of switching from a Microsoft environment to a Hadoop environment.

Hey Shailesh, thanks for checking out our blog. Considering your background, you could use Hadoop in Microsoft Azure, which would be easier. But to work in the more widely used Hadoop environment, some experience working in a Linux environment would be beneficial. While there are no prerequisites as such to learn Hadoop, basic Java/Python knowledge would help you. If you do not have Java knowledge, you do not need to worry, as there are Hadoop ecosystem tools such as Pig and Hive, which are similar to SQL, that you can work on. Also, when you enroll in our Hadoop course, we even provide you with a complimentary self-paced course on Java essentials for Hadoop, so it won’t be a problem. You can check out more course details here: https://www.edureka.co/big-data-hadoop-training-certification. Hope this helps. Cheers!