Deep Learning is an important subset of Machine Learning, and the demand for Deep Learning Certification has risen sharply, especially among those interested in unlocking the possibilities of AI. Inspired by the growing popularity of Deep Learning, I thought of writing a series of blogs that will teach you about this trend in Artificial Intelligence and help you understand what it is all about. This is the first blog in the series, called Deep Learning Tutorial.

In this Deep Learning Tutorial blog, I will take you through the fundamentals that the upcoming blogs will build on.

You may go through this recording of the Deep Learning Tutorial, in which our instructor explains the topics in detail with examples that will help you understand these concepts better.

Now think about this: instead of doing all your work yourself, you could have a machine finish it for you, or even do something you thought was not possible at all. For instance:

- Predicting the Future: Deep Learning can help us predict earthquakes, tsunamis, etc. beforehand, so that preventive measures can be taken to save many lives from natural calamities.

- Chat-bots: All of you have probably heard of Siri, Apple’s voice-controlled virtual assistant. With the help of Deep Learning, virtual assistants like it are getting smarter day by day. In fact, Siri can adapt itself to its user and provide better personalized assistance.

- Self-driving Cars: Imagine how incredible it would be for physically disabled and elderly people who find it difficult to drive on their own. Apart from this, self-driving cars could save many of the lives lost in road accidents every year due to human error.

- Google AI Eye Doctor: This is an initiative in which Google is working with an Indian eye care chain to develop AI software that can examine retinal scans and identify diabetic retinopathy, a condition that can cause blindness.

- AI Music Composer: Who would have thought we could build an AI music composer using Deep Learning? I would not be surprised to hear that the next great piece of music was composed by a machine.

- A Dream Reading Machine: This is one of my favorites: a machine that can capture your dreams in the form of video or images. With so many seemingly unrealistic applications of AI and Deep Learning already realized, I was not surprised to find out that this was tried in Japan a few years back on three test subjects, and the researchers achieved close to 60% accuracy. That is quite unbelievable, yet true.

I am pretty sure that some of these real-life applications of AI and Deep Learning have given you goosebumps. Alright then, this sets the base, and we are now ready to proceed further in this Deep Learning Tutorial and understand what Artificial Intelligence is.

Artificial Intelligence is the capability of a machine to imitate intelligent human behavior. AI is often pursued by mimicking the human brain: understanding how it thinks, learns, decides, and works while trying to solve a problem.

For example: a machine playing chess, a voice-activated assistant that helps you with various tasks on your iPhone, or a number plate recognition system that captures the number plate of a speeding car and processes it to extract the registration number and identify the owner of the car. Many such systems were much harder to build well before Deep Learning. Now, let’s understand the various subsets of Artificial Intelligence.

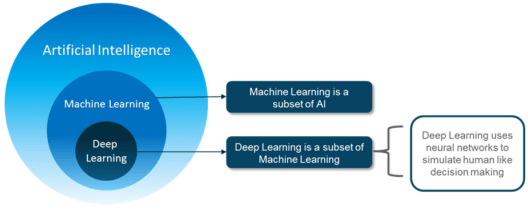

By now, you have probably heard a lot about Artificial Intelligence, Machine Learning, and Deep Learning. However, do you know how the three are related? Deep Learning is a sub-field of Machine Learning, and Machine Learning is a sub-field of Artificial Intelligence, as shown in the image above.

When we look at something like AlphaGo, it is often portrayed as a big success for Deep Learning, but it actually combines ideas from several different fields of AI and Machine Learning. In fact, you may be surprised to hear that the idea behind deep neural networks is not new but dates back to the 1950s. However, it only became practical to implement because of the high-end computing resources available nowadays.

So, moving ahead in this deep learning tutorial blog, let’s explore Machine Learning followed by its limitations.

Machine Learning is a subset of Artificial Intelligence that gives computers the ability to learn without being explicitly programmed. In Machine Learning, we do not have to explicitly define every step or condition, as we would in a conventional program. Instead, the machine is trained on a training dataset large enough to create a model, which helps the machine make decisions based on what it has learned.

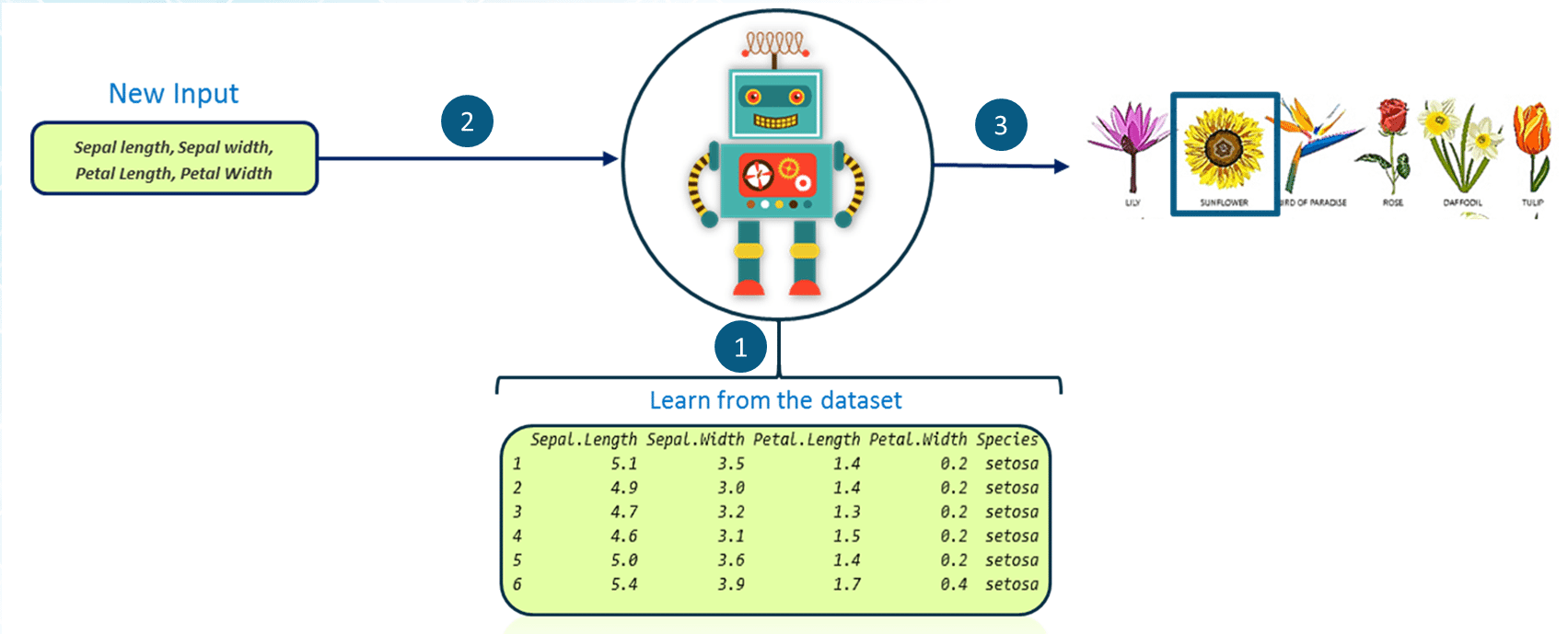

For example, suppose we want to use Machine Learning to determine the species of a flower based on the length of its petals and sepals (the leaf-like parts of a flower). How would we do it?

- First, we feed the flower data set, which contains various characteristics of different flowers along with their respective species, into our machine, as you can see in the above image. Using this input data set, the machine creates and trains a model that can be used to classify flowers into different categories.

- Once our model has been trained, we pass a new set of characteristics as input to the model.

- Finally, our model outputs the species of the flower described by the new input data. This process of training a machine to create a model and using it for decision making is called Machine Learning. However, this process has some limitations.
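The train-then-predict flow above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the measurements are a handful of hand-typed, illustrative rows modelled on the classic Iris data set, and the "model" is a nearest-centroid classifier (one of the simplest learning methods, swapped in here for illustration). A real project would use the full data set and a library such as scikit-learn.

```python
import math

# Illustrative rows modelled on the classic Iris data set (assumption, not the full data):
# (sepal length, sepal width, petal length, petal width) in cm, plus the species label.
TRAINING_DATA = [
    ((5.1, 3.5, 1.4, 0.2), "setosa"),
    ((4.9, 3.0, 1.4, 0.2), "setosa"),
    ((4.7, 3.2, 1.3, 0.2), "setosa"),
    ((7.0, 3.2, 4.7, 1.4), "versicolor"),
    ((6.4, 3.2, 4.5, 1.5), "versicolor"),
    ((6.9, 3.1, 4.9, 1.5), "versicolor"),
    ((6.3, 3.3, 6.0, 2.5), "virginica"),
    ((5.8, 2.7, 5.1, 1.9), "virginica"),
    ((7.1, 3.0, 5.9, 2.1), "virginica"),
]


def train(rows):
    """Nearest-centroid 'training': average the measurements of each species."""
    sums, counts = {}, {}
    for features, species in rows:
        totals = sums.setdefault(species, [0.0] * len(features))
        for i, value in enumerate(features):
            totals[i] += value
        counts[species] = counts.get(species, 0) + 1
    return {species: tuple(t / counts[species] for t in totals)
            for species, totals in sums.items()}


def predict(model, features):
    """Classify a new flower as the species whose centroid is closest (Euclidean distance)."""
    return min(model, key=lambda species: math.dist(model[species], features))


model = train(TRAINING_DATA)
print(predict(model, (5.0, 3.4, 1.5, 0.2)))  # setosa
```

The split mirrors the three steps in the text: `train` builds the model from labelled data once, and `predict` is then called on each new set of characteristics.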

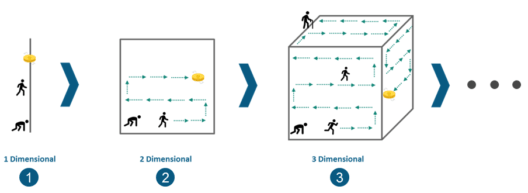

Traditional Machine Learning struggles with high-dimensional data, that is, data where the input and output are very large. Handling and processing such data becomes very complex and resource-intensive. This is termed the Curse of Dimensionality. To understand this in simpler terms, let’s consider the image above:

- Consider a line 100 yards long, and you have dropped a coin somewhere on it. It is quite easy to find the coin by simply walking along the line. This line is a one-dimensional entity.

- Next, consider a square with sides of 100 yards, as shown in the above image, and again you have dropped a coin somewhere inside it. It is quite evident that finding the coin will take more time than in the previous scenario. This square is a two-dimensional entity.

- Let’s take it a step further and consider a cube with sides of 100 yards, in which you have dropped a coin somewhere. Finding the coin is even more difficult this time. This cube is a three-dimensional entity.

As you can see, the complexity increases as the number of dimensions increases. In real life, the high-dimensional data we were talking about can have thousands of dimensions, which makes it very complex to handle and process. High-dimensional data is common in use cases like image processing, NLP, and image translation.
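The coin example can be made concrete with a little arithmetic. Assuming (for illustration only) that you search the space in 1-yard cells, a 100-yard line has 100 cells, a 100-yard square has 100² cells, and a 100-yard cube has 100³ cells, so the work grows as 100^d with the number of dimensions d:

```python
def cells_to_search(side_yards, dimensions, cell_yards=1):
    """Count the cell_yards-sized cells needed to cover a line, square, cube, ... of the given side.

    Assumes side_yards is a whole multiple of cell_yards.
    """
    cells_per_axis = side_yards // cell_yards
    return cells_per_axis ** dimensions


for d in (1, 2, 3, 10):
    print(f"{d}-D: {cells_to_search(100, d):,} cells")
```

The first three lines print 100, 10,000, and 1,000,000 cells; by 10 dimensions the count is already 10^20, which is why exhaustively covering high-dimensional spaces quickly becomes impractical.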

Traditional Machine Learning struggled with these use cases, and this is where Deep Learning came to the rescue. Deep Learning can handle high-dimensional data and is also efficient at focusing on the right features on its own. This process is called feature extraction. Now, let’s move ahead in this Deep Learning Tutorial and understand how Deep Learning works.

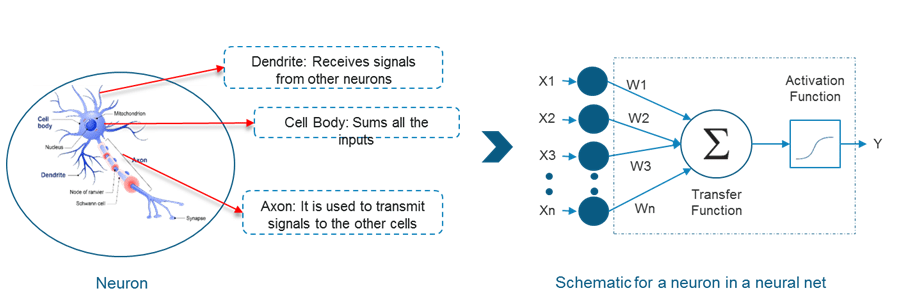

In an attempt to re-engineer the human brain, Deep Learning studies the basic unit of the brain: the brain cell, or neuron. Inspired by the neuron, the artificial neuron, or perceptron, was developed. Now, let us understand how biological neurons function and how we mimic this functionality in the perceptron, or artificial neuron:

If we focus on the structure of a biological neuron, it has dendrites, which receive inputs. These inputs are summed in the cell body, and the result is passed on through the axon to the next biological neuron, as shown in the above image.

Similarly, a perceptron receives multiple inputs, multiplies each one by a weight, sums the results (usually adding a bias), applies an activation function to that sum, and provides an output.
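In symbols, a classic perceptron computes y = step(w₁x₁ + w₂x₂ + … + wₙxₙ + b). The sketch below implements exactly that in plain Python. The weights and bias are hand-picked assumptions (not learned) that happen to make the perceptron behave like a logical AND gate; in practice, they would be learned from training data.

```python
def step(z):
    """Step activation: fire (1) if the weighted sum reaches the threshold of 0, else output 0."""
    return 1 if z >= 0 else 0


def perceptron(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through the step activation."""
    if len(inputs) != len(weights):
        raise ValueError("need exactly one weight per input")
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return step(z)


# Hand-picked (hypothetical) parameters that make the perceptron act as a logical AND gate.
AND_WEIGHTS = [1.0, 1.0]
AND_BIAS = -1.5

for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", perceptron([a, b], AND_WEIGHTS, AND_BIAS))
```

Only the input (1, 1) gives a weighted sum (1 + 1 − 1.5 = 0.5) that reaches the threshold, so the loop prints 1 for that row and 0 for the other three.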

Just as our brain consists of many connected neurons that form a neural network, we can connect many artificial neurons, or perceptrons, to form a deep neural network. So, let’s move ahead in this Deep Learning Tutorial to see what a deep neural network looks like.

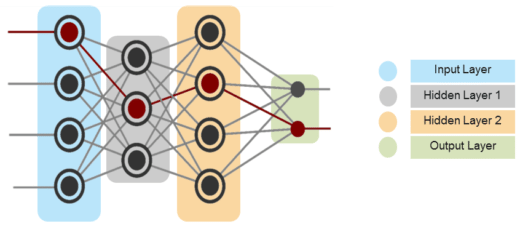

- In the above diagram, the first layer is the input layer, which receives all the inputs, and the last layer is the output layer, which provides the desired output.

- All the layers in between are called hidden layers. A network can have many hidden layers, which is practical thanks to the high-end computing resources available these days.

- The number of hidden layers and the number of perceptrons in each layer depend entirely on the use case you are trying to solve.
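The layered structure above can be sketched as a forward pass in plain Python. This is a minimal, untrained sketch under stated assumptions: the layer sizes (4 inputs, two hidden layers of 8 neurons, 3 outputs), the random weights, the ReLU activation in the hidden layers, and the softmax at the output are all illustrative choices, not something the diagram specifies. As is common in code, the input layer is represented simply by the input vector itself.

```python
import math
import random


def dense(inputs, weights, biases, activation):
    """Fully connected layer: each neuron applies `activation` to a weighted sum of all inputs plus its bias."""
    return [
        activation(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]


def relu(z):
    return max(0.0, z)


def softmax(logits):
    """Turn raw output scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def random_layer(n_in, n_out, rng):
    """Hypothetical untrained layer: random weights in [-1, 1], zero biases."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def forward(x, layers):
    """Pass x through every hidden layer (ReLU), then through the output layer (softmax)."""
    *hidden, (out_w, out_b) = layers
    for w, b in hidden:
        x = dense(x, w, b, relu)
    return softmax(dense(x, out_w, out_b, lambda z: z))


rng = random.Random(0)
sizes = [4, 8, 8, 3]  # inputs, hidden layer 1, hidden layer 2, output classes (assumed)
layers = [random_layer(n_in, n_out, rng) for n_in, n_out in zip(sizes, sizes[1:])]
probs = forward([0.5, -1.2, 3.3, 0.0], layers)
print([round(p, 3) for p in probs])
```

Changing `sizes` is how you would vary the number of hidden layers and perceptrons per layer for a different use case; training (adjusting the weights from data) is a separate step not shown here.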

Now that you have a picture of a deep neural network, let’s move ahead in this Deep Learning Tutorial to get a high-level view of how deep neural networks solve an image recognition problem.

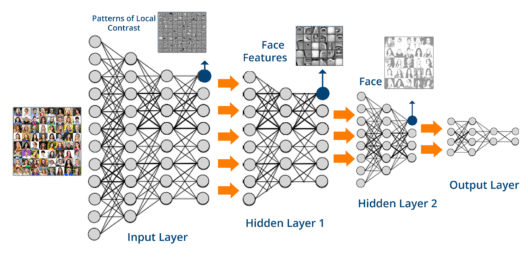

Suppose we want to perform image recognition using a deep network:

- First, we pass the high-dimensional data to the input layer. To match the dimensionality of the input data, the input layer contains enough perceptrons to consume the entire input.

- The output of the input layer captures simple patterns: it can only identify the edges in the image, based on contrast levels.

- This output is fed to hidden layer 1, which can identify facial features such as eyes, nose, and ears.

- This is then fed to hidden layer 2, which can combine these features into entire faces. The output of hidden layer 2 is then sent to the output layer.

- Finally, the output layer performs classification based on the result obtained from the previous layer and predicts the name of the person.
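To make "identifying edges based on contrast levels" concrete, here is a hand-written contrast filter applied to a tiny hypothetical grayscale image. This is only an illustration: in a real deep network, the first-layer filters are learned from data rather than hand-coded, although they often end up resembling simple contrast detectors like this one.

```python
def horizontal_contrast(image):
    """Difference between each pixel and its right-hand neighbour.

    Large absolute values mark places where brightness changes sharply along a row,
    i.e. vertical edges; zeros mark flat regions.
    """
    return [[row[j + 1] - row[j] for j in range(len(row) - 1)] for row in image]


# Hypothetical 3x6 grayscale image: dark on the left half, bright on the right half.
image = [
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
]

for row in horizontal_contrast(image):
    print(row)  # [0, 0, 9, 0, 0]: the only edge sits between columns 2 and 3
```

Deeper layers then combine many such edge responses into larger shapes, which is the idea behind the eyes, nose, and faces progression described above.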

Let me ask you a question: what will happen if any of these layers is missing, or if the neural network is not deep enough? Simply put, we will not be able to identify the images accurately. This is a key reason why these use cases lacked good solutions for so many years before Deep Learning. To take this further, let’s apply deep networks to the MNIST data set.

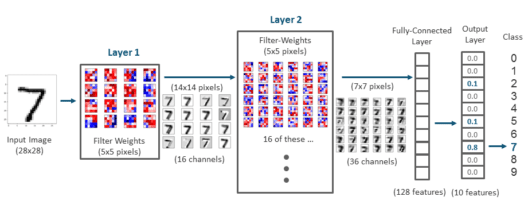

The MNIST data set consists of 60,000 training samples and 10,000 test samples of handwritten digit images. The task here is to train a model that can accurately identify the digit in each image.

For each input image, the output layer produces an array of 10 probabilities, where the value at index i represents the probability that the digit on the image is i. For example, index 2, which has a value of 0.1, represents the probability of 2 being the digit on the input image. The highest probability in this array is 0.8, at index 7, so the model predicts that the digit on the image is 7.
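Reading the prediction off the output layer is an argmax: pick the index with the highest probability. In the sketch below, the probability array is hypothetical; it only reuses the two values quoted in the text (0.1 at index 2 and 0.8 at index 7) and spreads the remaining 0.1 over the other digits so the array sums to 1.

```python
def predict_digit(probabilities):
    """Return the digit (index) whose probability is highest."""
    if len(probabilities) != 10:
        raise ValueError("expected one probability per digit 0-9")
    return max(range(len(probabilities)), key=lambda i: probabilities[i])


# Hypothetical output-layer probabilities for one image; index i = P(digit on the image is i).
output = [0.0, 0.02, 0.1, 0.02, 0.0, 0.02, 0.02, 0.8, 0.02, 0.0]
print(predict_digit(output))  # 7
```

Deep Learning libraries provide the same operation directly (for example, NumPy's `argmax`), but the plain-Python version shows that no extra machinery is involved.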

So guys, this was all about Deep Learning in a nutshell. In this Deep Learning Tutorial, we saw various applications of Deep Learning and understood its relationship with AI and Machine Learning. Then, we saw how perceptrons, or artificial neurons, serve as the basic building blocks of deep neural networks that can perform complex tasks. Finally, we went through one use case of Deep Learning, image recognition with deep neural networks, and understood the steps that happen behind the scenes. If you want to get certified in Deep Learning and gain an edge over your peers in data science, check out the Artificial Intelligence Course and get certified!

Now, in the next blog of this Deep Learning Tutorial series, we will learn how to implement a perceptron using TensorFlow, which is a Python-based library for Deep Learning.

Got a question for us? Please mention it in the comments section and we will get back to you.
