In this age, the transition to e-commerce is happening at a very fast pace. E-commerce companies, both small and big, want their websites up and running 24 hours a day, 365 days a year, so as not to lose customers and business. For instance, think about the impact of amazon.com going down for a couple of hours.

This is where Cloud Computing comes into the picture. The Cloud provides the agility to build Highly Available (HA) and fault-tolerant applications, which is hard and expensive to achieve with on-premise data centers. Cloud vendors have Data Centers in multiple geographical locations with built-in redundancy to help us build an HA website. The same applies to any business-critical application that needs to run all the time. At the time of writing, the AWS Global Infrastructure spans 21 geographical Regions with 66 Availability Zones (AZs), with more Regions and AZs planned. In this article, we will focus on building HA applications in the Cloud.

AWS Global Infrastructure

AWS Global Infrastructure has:

- Regions and Availability Zones (AZ)

- Data Centers in AZs

- Edge Locations

Let’s discuss each of these in detail.

Regions & Availability Zones

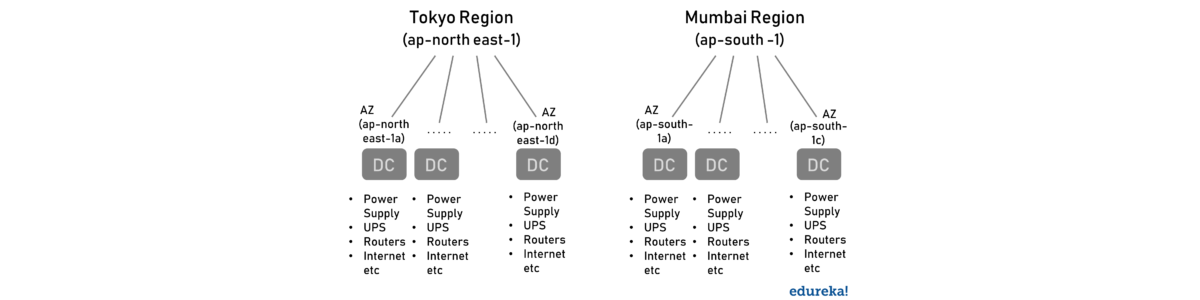

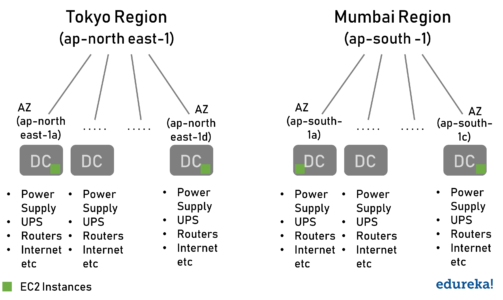

Each Region is a geographical area with more than one isolated location called an Availability Zone (AZ). Each AZ consists of one or more Data Centers. For example, North Virginia is a Region with the code us-east-1, and it has 6 AZs. You can learn more about Regions in the AWS documentation.

As shown in the diagrams above, there are multiple AWS Regions. Each Region has a minimum of two AZs, and each AZ has one or more Data Centers.
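To make the hierarchy concrete, here is a minimal Python sketch that models a Region as a list of named AZs. It assumes only what is stated above (a Region code such as us-east-1 and at least two AZs per Region) plus the AWS naming pattern in which an AZ name is the Region code followed by a letter. The class and function names are hypothetical, and Data Centers are deliberately left out because AWS does not let us see or choose them.

```python
from dataclasses import dataclass, field


@dataclass
class AvailabilityZone:
    name: str  # Region code plus a letter suffix, e.g. "us-east-1a"


@dataclass
class Region:
    code: str          # e.g. "us-east-1"
    display_name: str  # e.g. "N. Virginia"
    zones: list[AvailabilityZone] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Invariant from the text: every Region has at least two AZs.
        if len(self.zones) < 2:
            raise ValueError(f"{self.code} must have at least two AZs")


def make_region(code: str, display_name: str, zone_count: int) -> Region:
    """Hypothetical helper: build a Region whose AZs follow <region-code><letter>."""
    letters = "abcdefghijklmnopqrstuvwxyz"
    zones = [AvailabilityZone(f"{code}{letters[i]}") for i in range(zone_count)]
    return Region(code, display_name, zones)


us_east_1 = make_region("us-east-1", "N. Virginia", 6)
print([az.name for az in us_east_1.zones])
# ['us-east-1a', 'us-east-1b', 'us-east-1c', 'us-east-1d', 'us-east-1e', 'us-east-1f']
```

Keep in mind that AZ letters are mapped to physical zones independently for each AWS account, so us-east-1a in one account is not necessarily the same physical AZ as us-east-1a in another.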

Note: Some AWS resources are global, while others are Region-specific. An IAM User, for example, is global, whereas an EC2 Instance is regional. While creating an EC2 Instance, we get the option to pick the Region and the AZ; there is no such option for an IAM User because it is global.
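The global/regional distinction can be expressed as a simple validation rule. The sketch below is hypothetical: `RESOURCE_SCOPE` covers only the two resource types mentioned in the note, and `validate` is an illustrative helper, not part of any AWS SDK. It treats the AZ as optional for an EC2 Instance, since AWS typically picks one for us when we don't.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical lookup table covering only the two examples from the note above.
RESOURCE_SCOPE = {
    "iam_user": "global",
    "ec2_instance": "regional",
}


@dataclass
class ResourceRequest:
    resource_type: str
    region: Optional[str] = None             # e.g. "us-east-1"
    availability_zone: Optional[str] = None  # e.g. "us-east-1a"


def validate(req: ResourceRequest) -> None:
    """Raise ValueError if the placement doesn't match the resource's scope."""
    scope = RESOURCE_SCOPE[req.resource_type]
    if scope == "global":
        if req.region or req.availability_zone:
            raise ValueError(f"{req.resource_type} is global: no Region or AZ applies")
        return
    if not req.region:
        raise ValueError(f"{req.resource_type} is regional: a Region is required")
    # AZ names are the Region code plus a letter, e.g. us-east-1 -> us-east-1a.
    if req.availability_zone and req.availability_zone[:-1] != req.region:
        raise ValueError(f"{req.availability_zone} is not in {req.region}")


validate(ResourceRequest("iam_user"))                                   # OK
validate(ResourceRequest("ec2_instance", "ap-south-1", "ap-south-1a"))  # OK
```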


Data Centers in Availability Zones

Amazon is very secretive about the locations of its Data Centers (DCs), but AZs are visible to us as separate, named locations.

For example, the Mumbai AZ ap-south-1a might be in the east of Mumbai and ap-south-1c in the west. This way, if there is a natural catastrophe like a flood or fire around one of the AZs, it doesn't affect the other AZs. Also, each DC has its own redundant power supply, UPS, routers, Internet connectivity, etc. to avoid any Single Point of Failure. There is no common infrastructure between two DCs, and the DCs are connected by high-speed, low-latency network links.

While creating a resource, AWS lets us pick the Region and the AZ, but not the DC within it. The following factors help decide which AWS Region to pick:

- Pricing (North Virginia is the oldest Region and one of the cheapest)

- Security and compliance requirements

- User/customer location

- Service availability

- Latency
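One way to weigh these factors is to treat security and compliance as a hard filter (for example, a data-residency rule that rules out some Regions) and rank the remaining Regions by a weighted score. The sketch below does that; the weights and 0 to 10 scores are made-up placeholders, not real AWS pricing or latency figures, and the scenario assumes users who are mostly in India.

```python
# Hypothetical weights for the "soft" factors; they sum to 1.
WEIGHTS = {"pricing": 0.3, "user_proximity": 0.3, "service_availability": 0.2, "latency": 0.2}

# Hypothetical 0-10 scores, higher is better (a cheaper Region gets a higher
# pricing score). Assumed scenario: users are mostly in India.
CANDIDATES = {
    "us-east-1": {"pricing": 9, "user_proximity": 3, "service_availability": 10, "latency": 4},
    "ap-south-1": {"pricing": 7, "user_proximity": 9, "service_availability": 7, "latency": 9},
    "eu-west-1": {"pricing": 8, "user_proximity": 4, "service_availability": 9, "latency": 6},
}


def weighted_score(scores: dict[str, float]) -> float:
    return sum(weight * scores[factor] for factor, weight in WEIGHTS.items())


def pick_region(candidates: dict[str, dict[str, float]], compliant: set[str]) -> str:
    """Drop non-compliant Regions, then return the best-scoring remaining one."""
    eligible = [code for code in candidates if code in compliant]
    if not eligible:
        raise ValueError("no candidate Region meets the security/compliance requirements")
    return max(eligible, key=lambda code: weighted_score(candidates[code]))


print(pick_region(CANDIDATES, {"us-east-1", "ap-south-1", "eu-west-1"}))  # ap-south-1
print(pick_region(CANDIDATES, {"us-east-1", "eu-west-1"}))                # eu-west-1
```

With all three Regions allowed, ap-south-1 wins on proximity and latency; if compliance ruled it out, the same scores would pick eu-west-1. The weights are the part to tune to your own priorities.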

Note: For learning AWS, North Virginia is a good choice, as it is one of the cheapest AWS Regions and is usually among the first to get new AWS features for us to try out.

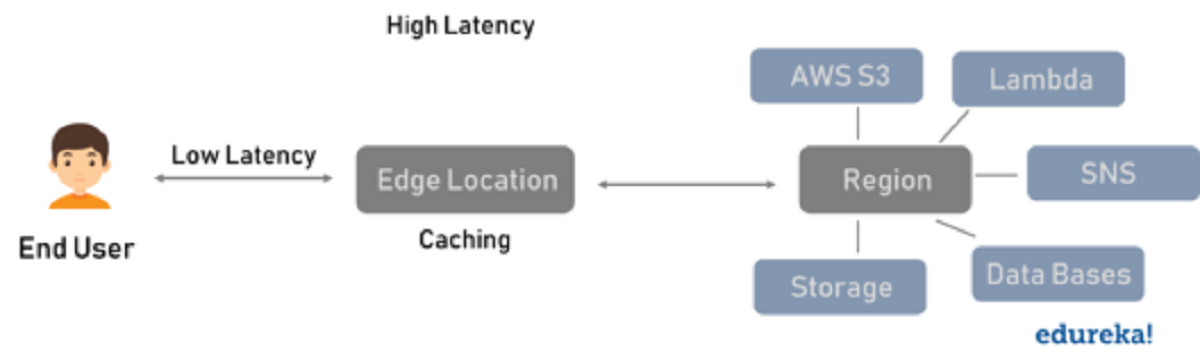

Edge Locations

In the AWS Global Infrastructure, Edge Locations are used for caching static and streaming content. At the time of writing, there is a global network of 187 Points of Presence (176 Edge Locations and 11 Regional Edge Caches) in 69 cities across 30 countries. There are almost three times as many Edge Locations as AZs, and they sit closer to end users. This makes Edge Locations a natural fit for caching data, while the Regions are for hosting web servers, databases, and so on.

Edge Locations therefore provide lower latency to end users than the Regions. When a user makes a request, the Edge Location checks whether it has the data locally; if not, it fetches the data from the appropriate Region, stores it locally, and then passes it on to the user.
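The lookup described above is a read-through cache. Here is a minimal Python sketch of it; `EdgeLocation` and the `origin` function are hypothetical stand-ins for an edge cache and a web server in a Region. Real CDNs such as CloudFront also expire cached objects (for example, based on TTLs), which this sketch leaves out.

```python
from typing import Callable


class EdgeLocation:
    """Read-through cache: serve from local storage, else fetch from the origin Region."""

    def __init__(self, name: str, fetch_from_origin: Callable[[str], bytes]) -> None:
        self.name = name
        self._fetch_from_origin = fetch_from_origin
        self._cache: dict[str, bytes] = {}
        self.hits = 0
        self.misses = 0

    def get(self, path: str) -> bytes:
        if path in self._cache:               # data is already here: low latency
            self.hits += 1
            return self._cache[path]
        self.misses += 1
        body = self._fetch_from_origin(path)  # round trip to the Region
        self._cache[path] = body              # keep a copy for the next user
        return body


origin_calls: list[str] = []


def origin(path: str) -> bytes:
    """Hypothetical web server running in a Region."""
    origin_calls.append(path)
    return f"content of {path}".encode()


edge = EdgeLocation("hypothetical-edge-1", origin)
edge.get("/video/intro.mp4")  # miss: fetched from the Region
edge.get("/video/intro.mp4")  # hit: served from the edge
print(edge.hits, edge.misses, len(origin_calls))  # 1 1 1
```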

Edge Location is an AWS term, and Edge Locations are used by the Amazon CloudFront service. In general terms, an AWS Edge Location is called a PoP (Point of Presence). Content Distribution Network (CDN) providers like Akamai and Cloudflare offer a similar service: they cache data, such as streaming video during a live match, to give end users a better experience. The table below compares the AWS and the general terminology.

| AWS | General |
| --- | --- |
| Edge Location | PoP (Point of Presence) |
| CloudFront | CDN (Content Distribution Network) |
| Provided by AWS | Provided by Akamai, Cloudflare, etc. |

Creating a Highly Available Application using the AWS Global Infrastructure

AWS Global Infrastructure provides a set of Regions, AZs and Edge Locations to create a Highly Available and Fault Tolerant application.

Example

If a web server is hosted in a single AZ, any problem with that AZ will make the website unavailable. To get around this, the web server can be deployed in multiple AZs within the same Region. Similarly, a problem with the whole Region will also make the website unavailable. To make the website even more available, we can run the web server across multiple Regions; this way, the availability of the website does not depend on the availability of a single Region.
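A back-of-the-envelope calculation shows why spreading across AZs and Regions helps. If each copy of the web server is independently available with probability a, then n copies are all down with probability (1 - a)^n, so at least one is up with probability 1 - (1 - a)^n. The sketch below applies this with hypothetical availability figures (not AWS SLA numbers) and assumes failures are independent, which real-world correlated failures (a bad deployment, a shared dependency) can violate.

```python
def parallel_availability(component: float, copies: int) -> float:
    """Chance that at least one of `copies` independent replicas is up."""
    return 1 - (1 - component) ** copies


def downtime_hours_per_year(availability: float) -> float:
    """Expected hours of downtime in a 365-day year."""
    return (1 - availability) * 365 * 24


AZ_AVAILABILITY = 0.99       # hypothetical, not an AWS SLA figure
REGION_AVAILABILITY = 0.999  # hypothetical chance of no Region-wide problem

# A Region-wide problem takes down every AZ in it, hence the extra factor.
single_az = REGION_AVAILABILITY * AZ_AVAILABILITY
two_azs = REGION_AVAILABILITY * parallel_availability(AZ_AVAILABILITY, 2)
# Each Region (with its two-AZ deployment) is treated as one independent copy.
two_regions = parallel_availability(two_azs, 2)

for label, a in [("1 AZ", single_az), ("2 AZs, 1 Region", two_azs), ("2 Regions", two_regions)]:
    print(f"{label:<16} {a:.6%}  ~{downtime_hours_per_year(a):.2f} h/year down")
```

Because correlated failures shrink these gains, treat the output as an optimistic estimate rather than a guarantee.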

HA websites and applications are built using redundancy, but redundancy comes at a cost: we need to run the same website in multiple locations. The same web server can also be deployed in another Cloud like GCP or Azure. Running the same web server in different Clouds is called a Multi-Cloud configuration. Google Anthos helps in building hybrid and Multi-Cloud applications using Kubernetes.

When the web server runs in multiple locations, how is traffic distributed among them? We can't simply leave some of them sitting idle. This is where AWS Elastic Load Balancers (ELB) come into play. An ELB takes requests from end users and distributes them across multiple web servers.
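To illustrate the idea, here is a minimal round-robin balancer in Python that skips targets marked unhealthy. It is a toy model, not how ELB is implemented: the target names are hypothetical web servers in three AZs, and real load balancers run their own health checks and may use other routing algorithms.

```python
class RoundRobinBalancer:
    """Toy load balancer: rotate through targets, skipping unhealthy ones."""

    def __init__(self, targets: list[str]) -> None:
        if not targets:
            raise ValueError("at least one target is required")
        self.targets = list(targets)
        self.healthy = set(self.targets)
        self._next = 0  # index of the next target to try

    def mark_unhealthy(self, target: str) -> None:
        self.healthy.discard(target)

    def mark_healthy(self, target: str) -> None:
        if target in self.targets:
            self.healthy.add(target)

    def route(self) -> str:
        """Return the next healthy target in round-robin order."""
        for _ in range(len(self.targets)):
            target = self.targets[self._next]
            self._next = (self._next + 1) % len(self.targets)
            if target in self.healthy:
                return target
        raise RuntimeError("no healthy targets available")


# Hypothetical web servers, one per AZ.
lb = RoundRobinBalancer(["web-us-east-1a", "web-us-east-1b", "web-us-east-1c"])
print([lb.route() for _ in range(4)])
# ['web-us-east-1a', 'web-us-east-1b', 'web-us-east-1c', 'web-us-east-1a']
lb.mark_unhealthy("web-us-east-1b")  # e.g. its AZ is having problems
print([lb.route() for _ in range(3)])
# ['web-us-east-1c', 'web-us-east-1a', 'web-us-east-1c']
```

Skipping unhealthy targets is what lets a multi-AZ deployment keep serving traffic when one AZ has a problem.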


Conclusion

While setting up our own DC gives us the flexibility to design our own hardware and software, it comes at a cost in time and money. By leveraging AWS, with a few clicks or API calls, we can create a highly available, secure, fault-tolerant, reliable, and performant application. Cloud vendors like Google, Amazon, and Microsoft have been spending billions to set up new DCs in different geographical locations.