In this article on How To Create Hadoop Cluster With Amazon EMR we would see how to easily Run and Scale Hadoop and Big Data applications. Following pointers will be covered in this article,

Moving on with this How To Create Hadoop Cluster With Amazon EMR?

How To Create Hadoop Cluster With Amazon EMR?

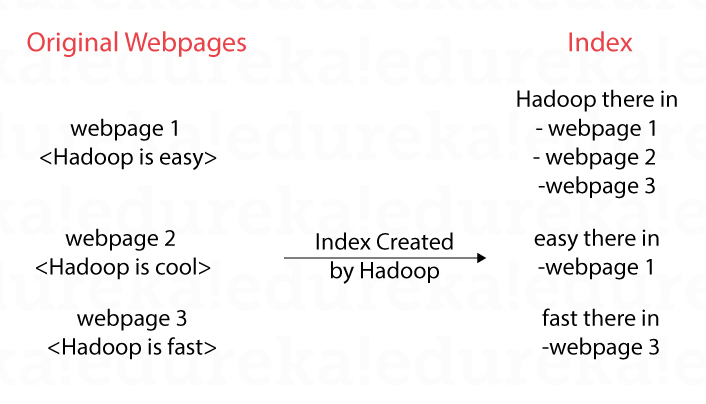

When we search for something in Google or Yahoo, we do get the response in a fraction of second. How is it possible that Google, Yahoo and other search engines return the results so fast from the ever growing web? The search engines crawl through the internet, download the webpages and create an index as shown below. For any query from us, they use the index to figure out what are all the web pages containing the text we were searching for. By looking at the below index on the right side, we can clearly know that Hadoop is there is web page 1, 2 and 3.

With the explosion of the web pages these search engines were finding challenges to create index and do the PageRanking calculations. This is where the birth of Hadoop took place in Yahoo and later became FOSS (Free and Open Source Software) under the ASF (Apache Software Foundation). Once under the ASF a lot of companies started taking interest in Hadoop and started contributing to improve it. Hadoop was the one to start the Big Data revolution, but a lot of other softwares like Spark, Hive, Pig, Sqoop, Zookeeper, HBase, Cassandra, Flume started evolving to address the limitations and gaps in Hadoop.

With the explosion of the web pages these search engines were finding challenges to create index and do the PageRanking calculations. This is where the birth of Hadoop took place in Yahoo and later became FOSS (Free and Open Source Software) under the ASF (Apache Software Foundation). Once under the ASF a lot of companies started taking interest in Hadoop and started contributing to improve it. Hadoop was the one to start the Big Data revolution, but a lot of other softwares like Spark, Hive, Pig, Sqoop, Zookeeper, HBase, Cassandra, Flume started evolving to address the limitations and gaps in Hadoop.

Web search engines were the first ones to use Hadoop, but later a lot of use-cases started to evolve as more and more data was generated. Let’s take the example of an eCommerce application used for recommending books to user. As per the below diagram, user1 bought book1, book2 and book3, user2 bought some books and so on. Looking closely, we can observe that user1 and user2 have similar taste as they have bought book1 and book2. So, book3 can be recommended to user2 and book4 can be recommended to user1. This is called Collaborative Filtering, a type of Machine Learning algorithm. We can flip the below diagram and get similar books.

In the above case we have created index, PageRanked and recommended to the user, the size of the data was small and so we were able to visualize the data and infer some results out of it. As the size of data gets bigger day-by-day and out of control, this is where Big Data tools like Hadoop come into picture.

In the above case we have created index, PageRanked and recommended to the user, the size of the data was small and so we were able to visualize the data and infer some results out of it. As the size of data gets bigger day-by-day and out of control, this is where Big Data tools like Hadoop come into picture.

Hadoop solves a lot of problems, but installing Hadoop and other Big Data software had never been an easy task. There are a lot of configuration parameters to tweak, like integration, installation and configuration issues to work with. This is where companies like Cloudera, MapR and Databricks help. They make the installing Big Data software easier and do provide commercial support, for example let’s say something happens in the production. AWS EMR (Elastic MapReduce) takes the ease of using Hadoop etc much easier. The name Elastic MapReduce is a bit of misnomer as EMR also supports other distributed computing models like Resilient Distributed Datasets and not just MapReduce.

In this tutorial, we will explore how to setup an EMR cluster on the AWS Cloud and in the upcoming tutorial, we will explore how to run Spark, Hive and other programs on top it.

For a detailed, You can even check out the details of Migrating to AWS with the AWS Cloud Migration Course.

Moving on with this How To Create Hadoop Cluster With Amazon EMR?

Demo: Creating an EMR Cluster in AWS

Step 1: Go to the EMR Management Console and click on “Create cluster”. In the console, the metadata for the terminated cluster is also saved for two months for free. This allows for the terminated cluster to be cloned and created again.

Step 2: From the quick options screen, click on “Go to advanced options” to specify much more details about the cluster.

Step 3: In the Advanced Options tab, we can select different software to be installed on the EMR cluster. For an SQL interface, Hive can be selected. For a data flow language interface, Pig can be selected. For distributed application coordination ZooKeeper can be selected and so on. This tab also allows us to add steps, which is an optional task. Steps are Big Data processing jobs using MapReduce, Pig, Hive etc. They can be added in this tab or later once the cluster has been created. Click on “Next” to select the Hardware required for the EMR cluster.

Step 4: Hadoop follows the master-worker architecture where the master does all the coordination like scheduling and assigning the work and checking their progress, while the workers do the actual work of processing and storing the data. A single master is a Single-Point-Of-Failure (SPOF). Amazon EMR supports multi-master for High Availability (HA). The previous step allows to setup a multi-master cluster in EMR.

EMR allows two types of nodes, Core and Task. The core node is used for both processing and storing the data, the task node is used for just processing of the data. For this tutorial, we can select only one Core and no Task nodes as it involves less cost for us. Also, choose Spot instances over On-Demand as the Spot instances are cheaper. The catch with the Spot instances is that they can be terminated by AWS automatically with a two minute notice. This is fine for the sake of practice and in some actual scenarios also. Spot instances are terminated automatically as they have low priority over other instance types. Click on “Next”.

Step 5: Specify the Cluster name. and click on “Next”. Notice that “Termination protection” is turned on by default, this makes sure that the EMR cluster is not deleted accidently by introducing a few steps while terminating the cluster.

Step 6: In the tab, the different security options for the EMR cluster are specified. The KeyPair needs to be selected for logging into the EC2 instance. EMR will automatically create the appropriate roles and Security Groups and attach them to the master and the worker EC2 nodes. Click on “Create cluster”.

The creation of the cluster takes a few minutes as the EC2 instances must be bought up and the different Big Data softwares must be installed and configured. Initially the cluster status would be in the “Starting” state and move on to “Waiting” state. In the “Waiting” state the EMR cluster is simply waiting for us to submit different Big Data processing jobs like MR, Spark, Hive etc.

Also, notice from the EC2 Management Console and note that the master and the worker EC2 instances should be in a running state. These are the Spot instances which have been created as part the EMR cluster creation. The same EC2 can be observed from the Hardware tab in the EMR Management Console also. Note that in the Hardware tab the price for the Spot EC2 instances is mentioned as 0.032$/hour. The price of the Spot instances keep on changing with time and is much lower than on the On-Demand EC2 pricing.

Step 7: Now that the EMR cluster has been added successfully, Steps or Big Data processing jobs can be added. Go to the Steps tab and click on “Add Step” and select the type of Step (MR, Hive, Spark etc). We will explore the same in the upcoming tutorial. For now, click on Cancel.

Step 8: Now that we have seen how to start the EMR, lets see how to stop the same.

Step 8.1: Click on Terminate.

Step 8.2: As mentioned in the previous steps, “Termination protection” is On for the EMR cluster and the Terminate button has been disabled. Click on Change.

Step 8.3: Select the “Off” radio button and click on the tick mark. Now the Terminate button should be enabled. This is the additional step EMR has introduced, just to make sure that we don’t accidently delete the EMR cluster.

Notice that the EMR cluster will be in the Terminating status and the EC2s will be terminated. Finally, the EMR cluster will be moved to the Terminated status, from here our billing with AWS stops. Make sure to terminate the cluster, so as not to incur additional AWS costs.

Conclusion

In this tutorial we have seen how to start the EMR cluster within a few minutes from the web console (browser), the same can be automated using the AWS CLI, AWS SDK or by using AWS CloudFormation. As noticed setting up an EMR cluster can be done is a matter of minutes and the Big Data processing can be started immediately, once the processing is done the output can be stored in S3 or DynamoDB and so the cluster shutdown to stop the billing. Because of this pricing model and the ease of use, EMR is a big hit with those who are doing the Big Data processing. No need to buy server in huge numbers, get licenses for the Big Data software and maintain them.’

So this is it guys, this brings us to the end of this article on How To Create Hadoop Cluster With Amazon EMR? In case if you wish to gain expertise in this subject, Edureka has come up with a curriculum that covers exactly, what you would need to crack the Solution Architect Exam! You can have a look at the course details for AWS Online Certification Training.

In case of any queries related to this blog, please feel free to put questions in the comments section below and we would be more than happy to reply to you the earliest.