Big Data has been heavily hyped in recent years, and so have the skilled professionals who know it. Setting aside your primary skills and starting from scratch is never easy. But, to borrow from cricket, playing your trusted square cuts while adapting to the bouncers can do wonders for you. That is exactly what learning Big Data through ETL technology lets you do.

ETL developers who design data transformation workflows can use ETL tools to translate those workflows into Hadoop jobs. Hadoop is an open source framework used extensively to process Big Data with MapReduce, its programming model for processing large amounts of data in parallel across a cluster. Finding skilled Big Data professionals, however, is often challenging.

Suppose an ETL developer has to find the IP addresses that have made more than a million requests to a bank’s website. Traditionally, the developer would need to write a MapReduce job that processes the web-log data stored in Hadoop. With advances in ETL technology, however, the same developer can use standard ETL design tools to create a flow that reads data from multiple sources in Hadoop (files, Hive, HBase) and then joins, aggregates, filters and transforms the data to answer the query on IP addresses.
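To make the hand-written approach concrete, here is a minimal sketch in plain Python of the map and reduce steps such a job would perform. It runs locally rather than on Hadoop, and it is not code generated by any ETL tool. The log layout (client IP as the first whitespace-separated field), the function names, and the `threshold` parameter are all assumptions made for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, Tuple


def map_requests(lines: Iterable[str]) -> Iterator[Tuple[str, int]]:
    """Map step: emit (ip, 1) for every non-empty log line.

    Assumes the client IP is the first whitespace-separated field.
    """
    for line in lines:
        fields = line.split()
        if fields:
            yield fields[0], 1


def reduce_counts(pairs: Iterable[Tuple[str, int]]) -> Dict[str, int]:
    """Reduce step: sum the emitted counts per IP address."""
    totals: Dict[str, int] = defaultdict(int)
    for ip, count in pairs:
        totals[ip] += count
    return dict(totals)


def heavy_hitters(lines: Iterable[str], threshold: int) -> Dict[str, int]:
    """Return the IPs whose request count is strictly greater than threshold."""
    totals = reduce_counts(map_requests(lines))
    return {ip: n for ip, n in totals.items() if n > threshold}


if __name__ == "__main__":
    # Hypothetical sample lines; a real web log would be far larger.
    sample_log = [
        '10.0.0.1 - - [01/Jan/2015:10:00:00] "GET /login HTTP/1.1" 200',
        '10.0.0.2 - - [01/Jan/2015:10:00:01] "GET /home HTTP/1.1" 200',
        '10.0.0.1 - - [01/Jan/2015:10:00:02] "GET /balance HTTP/1.1" 200',
    ]
    # A threshold of 1 stands in for the "more than a million" of the real query.
    print(heavy_hitters(sample_log, threshold=1))
```

On a real cluster the map and reduce steps would run in parallel across many nodes; the graphical ETL flow described above aims to spare the developer from writing and maintaining this kind of code by hand.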

Talend provides a graphical user interface tool that can “translate” an ETL job into a MapReduce job. The Talend ETL job is thus executed as a MapReduce job on Hadoop and gets the big data work done in minutes. This is a key innovation: it lowers the entry barrier to Big Data technology and allows ETL job developers, beginners and advanced alike, to offload more Data Warehouse work to Hadoop. You can even check out the details of Big Data with the Data Engineering Course.

Life in Big Data city is much easier with Talend around

A Graphical Abstraction Layer on Top of Hadoop Applications – this makes life so much easier in the Big Data world.

What Talend has to say: “In keeping with our history as an innovator and leader in open source data integration, Talend is the first provider to offer a pure open source solution to enable big data integration. Talend Open Studio for Big Data, by layering an easy to use graphical development environment on top of powerful Hadoop applications, makes big data management accessible to more companies and more developers than ever before.”

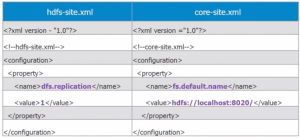

With its Eclipse-based graphical workspace, Talend Open Studio for Big Data enables developers and data scientists to leverage Hadoop loading and processing technologies like HDFS, HBase, Hive, and Pig without having to write Hadoop application code. By simply selecting graphical components from a palette, then arranging and configuring them, you can create Hadoop jobs. For example:

- Load data into HDFS (Hadoop Distributed File System)

- Use Hadoop Pig to transform data in HDFS

- Load data into a Hadoop Hive based data warehouse

- Perform ELT (extract, load, transform) aggregations in Hive

- Leverage Sqoop to integrate relational databases and Hadoop

Hadoop Applications, Seamlessly Integrated within minutes using Talend.

For Hadoop applications to be truly accessible to your organization, they need to be smoothly integrated into your overall data flows. Talend Open Studio for Big Data is the ideal tool for integrating Hadoop applications into your broader data architecture. Talend provides more built-in connector components than any other data integration solution available, with more than 800 connectors that make it easy to read from or write to any major file format, database, or packaged enterprise application. For example, in Talend Open Studio for Big Data, you can use drag ‘n drop configurable components to create data integration flows that move data from delimited log files into Hadoop Hive, perform operations in Hive, and extract data from Hive into a MySQL database (or Oracle, Sybase, SQL Server, and so on).
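The flow in that example (delimited log file, load, aggregate inside the warehouse, extract to a relational target) can be sketched in plain Python. This only illustrates the shape of the data flow, not Talend or Hive themselves: two in-memory SQLite databases stand in for Hive and MySQL, and the table names, column names, and sample rows are all hypothetical.

```python
import csv
import io
import sqlite3
from typing import List, Tuple

# Hypothetical pipe-delimited log extract with fields: ip|url|status
SAMPLE_LOG = """10.0.0.1|/login|200
10.0.0.2|/home|200
10.0.0.1|/balance|500
"""


def load_logs(warehouse: sqlite3.Connection, delimited_text: str) -> int:
    """Extract and load: parse the delimited text and insert the rows as-is."""
    warehouse.execute(
        "CREATE TABLE IF NOT EXISTS web_logs (ip TEXT, url TEXT, status INTEGER)"
    )
    reader = csv.reader(io.StringIO(delimited_text), delimiter="|")
    # Skip blank lines, which csv.reader yields as empty lists.
    rows = [(row[0], row[1], int(row[2])) for row in reader if row]
    warehouse.executemany("INSERT INTO web_logs VALUES (?, ?, ?)", rows)
    return len(rows)


def aggregate_in_warehouse(warehouse: sqlite3.Connection) -> List[Tuple[str, int]]:
    """Transform: aggregate inside the warehouse, ELT style, using SQL."""
    cursor = warehouse.execute(
        "SELECT ip, COUNT(*) FROM web_logs GROUP BY ip ORDER BY ip"
    )
    return cursor.fetchall()


def export_to_target(target: sqlite3.Connection, rows: List[Tuple[str, int]]) -> None:
    """Write the aggregated result into the relational target database."""
    target.execute(
        "CREATE TABLE IF NOT EXISTS requests_per_ip "
        "(ip TEXT PRIMARY KEY, requests INTEGER)"
    )
    target.executemany("INSERT OR REPLACE INTO requests_per_ip VALUES (?, ?)", rows)
    target.commit()


if __name__ == "__main__":
    hive_stand_in = sqlite3.connect(":memory:")   # stand-in for Hive
    mysql_stand_in = sqlite3.connect(":memory:")  # stand-in for MySQL
    load_logs(hive_stand_in, SAMPLE_LOG)
    export_to_target(mysql_stand_in, aggregate_in_warehouse(hive_stand_in))
    print(mysql_stand_in.execute("SELECT * FROM requests_per_ip").fetchall())
```

In a graphical tool, each of these three functions would roughly correspond to a component placed on the canvas and wired to the next, with connection details supplied through configuration rather than code.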

Want to see how easy it can be to work with cutting-edge Hadoop applications?

No need to wait: Talend Open Studio for Big Data is open source software, free to download and use under an Apache license.

Talk in Town

Talend has been a Visionary in the Gartner Magic Quadrant for Data Integration Tools since 2009. Recently, it has also emerged as a pioneer in the Data Quality and MDM areas: all the ingredients needed to cook a fantastic Big Data dish.

Talend claims that “Big Data Integration increases the performance and scalability by 45 percent in your organization”.

Talend 5.5 (and higher) allows developers to generate high-performance Hadoop code without being experts in MapReduce or Pig.

A few months back, an article from Talend said: “Adoption of Hadoop is skyrocketing and companies large and small are struggling to find enough knowledgeable Hadoop developers to meet this growing demand”. According to Talend, version 5.5 allows any data integration developer to use a visual development environment to generate native, high-performance and highly scalable Hadoop code. This unlocks a large pool of development resources that can now contribute to big data projects. In addition, Talend is staying on the cutting edge of new developments in Hadoop that allow big data analytics projects to power real-time customer interactions.

Talend for Big Data can help organizations understand their customers by collecting datasets from heterogeneous source systems, such as third parties, APIs, and social networking feeds, and transforming that data into a visual picture of the end-to-end customer journey.

Be it banking, pharmaceuticals, e-commerce or insurance, Talend can integrate data at any scale, and its easy blend with Hadoop makes it a cutting-edge technology for meeting present and future demand.

Use Cases Around the World

From marketing campaigns to customer service in the banking industry to fraud detection, big data is everywhere.

With more than 800 connectors in its open-source edition alone, Talend claims to be one of the largest and most widely supported platforms, able to connect to almost any data source.

With the industry shifting towards NoSQL, open source and Hadoop, learning Big Data the ETL way with Talend is a logical choice for anyone who works with data in any form.

In summary, ETL tools are far from being passé. They are central to the Big Data ecosystem and play a crucial role in enabling data analytics.

That’s why Talend’s pitch stands out: “Zero to Big Data without Coding, in under 10 minutes”.

If you also want to learn Big Data and build a career in it, I would suggest taking up the Big Data Architect Certification.

I am an Informatica (ETL) developer with 3 years of experience and basic knowledge of DataStage. I know SQL and Unix.

Please advise me, as I am confused about whether to choose Big Data or Artificial Intelligence.

Thanks for sharing this article !

+Luke Lonergan, thanks for checking out our blog. Do subscribe to stay posted on upcoming blogs. Cheers!

I am a DataStage (ETL) developer with 3+ years of experience and basic knowledge of Informatica. I know the basics of SQL and Java. I am confused about whether to choose Hadoop or Talend. Please suggest.

Hey Jagadeesh Kumar, thanks for checking out our blog. Since you have 3+ years of DataStage experience and knowledge of Informatica, Talend could be a good option for you if you wish to continue in the ETL domain. But if you wish to move away from ETL and want a career change, then Hadoop can be a great option. Your knowledge of SQL and Java will also be beneficial. You can read up about the career opportunities available in Hadoop here: https://www.edureka.co/blog/hadoop-career/. Hope this helps. Cheers!

It’s really a great pleasure to share an opinion about ETL tools. They are important and useful to people all over the world, helping anyone transform data and load it into a database quickly, easily and comfortably.

Thanks for your comment, Wilbert.

Hi, I have 8 years of experience in various ETL technologies. As I am not from a Java background, would it be advisable to move to Hadoop? What are the job prospects for a Talend developer?

Hi Hariom,

Thank you for reaching out to us.

The current job market has seen many experienced professionals switch to Big Data/Hadoop technologies in order to advance their careers. Since you have a solid ETL background, it can be beneficial for you to take Edureka’s Talend for Big Data course, which can help you leverage the power of HDFS, Pig and Hive using Talend for ETL and Data Warehousing without getting into the programming depth required for Hadoop. The basic prerequisite for this course is familiarity with database/SQL concepts. You can go through this link for more details: https://www.edureka.co/talend-for-big-data

In case you wish to pursue Hadoop, you can opt for the Big Data and Hadoop course at Edureka, which has the basic prerequisite of Core Java understanding. Edureka also provides a self-paced course called ‘Java essentials for Hadoop’, which will help you gain the necessary Java knowledge before joining the Hadoop sessions. You can go through this link for further information: https://www.edureka.co/big-data-hadoop-training-certification

You can get in touch with us for further clarification by contacting our sales team on +91-8880862004 (India) or 1800 275 9730 (US toll free). You can also mail us on sales@edureka.co.