AWS Data Pipeline Tutorial

With advances in technology & ease of connectivity, the amount of data being generated is skyrocketing. Buried deep within this mountain of data is the “captive intelligence” that companies can use to expand and improve their business. To derive value from it, companies need to move, sort, filter, reformat, analyze, and report on data. They may have to do this repeatedly and at a rapid pace to remain competitive in the market. The AWS Data Pipeline service by Amazon is designed for this kind of recurring data movement and processing. To learn more about Amazon Web Services, you can refer to the AWS Online Training.

Need for AWS Data Pipeline

Data is growing exponentially. Companies of all sizes are realizing that managing, processing, storing & migrating data has become more complicated & time-consuming than in the past. Listed below are some of the issues that companies face with ever-increasing data:

Bulk amount of data: There is a lot of raw & unprocessed data, including log files, demographic data, data collected from sensors, transaction histories & a lot more.

Variety of formats: Data is available in multiple formats. Converting unstructured data to a compatible format is a complex & time-consuming task.

Different data stores: There are a variety of data storage options. Companies have their own data warehouses, cloud-based storage like Amazon S3, Amazon Relational Database Service (RDS) & database servers running on EC2 instances.

Time-consuming & costly: Managing bulk data is time-consuming & very expensive. A lot of money has to be spent to transform, store & process data.

All these factors make it more complex & challenging for companies to manage data on their own. This is where AWS Data Pipeline can be useful. It makes it easier for users to integrate data that is spread across multiple AWS services and analyze it from a single location. So, through this AWS Data Pipeline Tutorial, let’s explore Data Pipeline and its components.


What is AWS Data Pipeline?

With AWS Data Pipeline you can easily access data from the location where it is stored, transform & process it at scale, and efficiently transfer the results to AWS services such as Amazon S3, Amazon RDS, Amazon DynamoDB, and Amazon EMR. It allows you to create complex data processing workloads that are fault tolerant, repeatable, and highly available.

Now why choose AWS Data Pipeline?

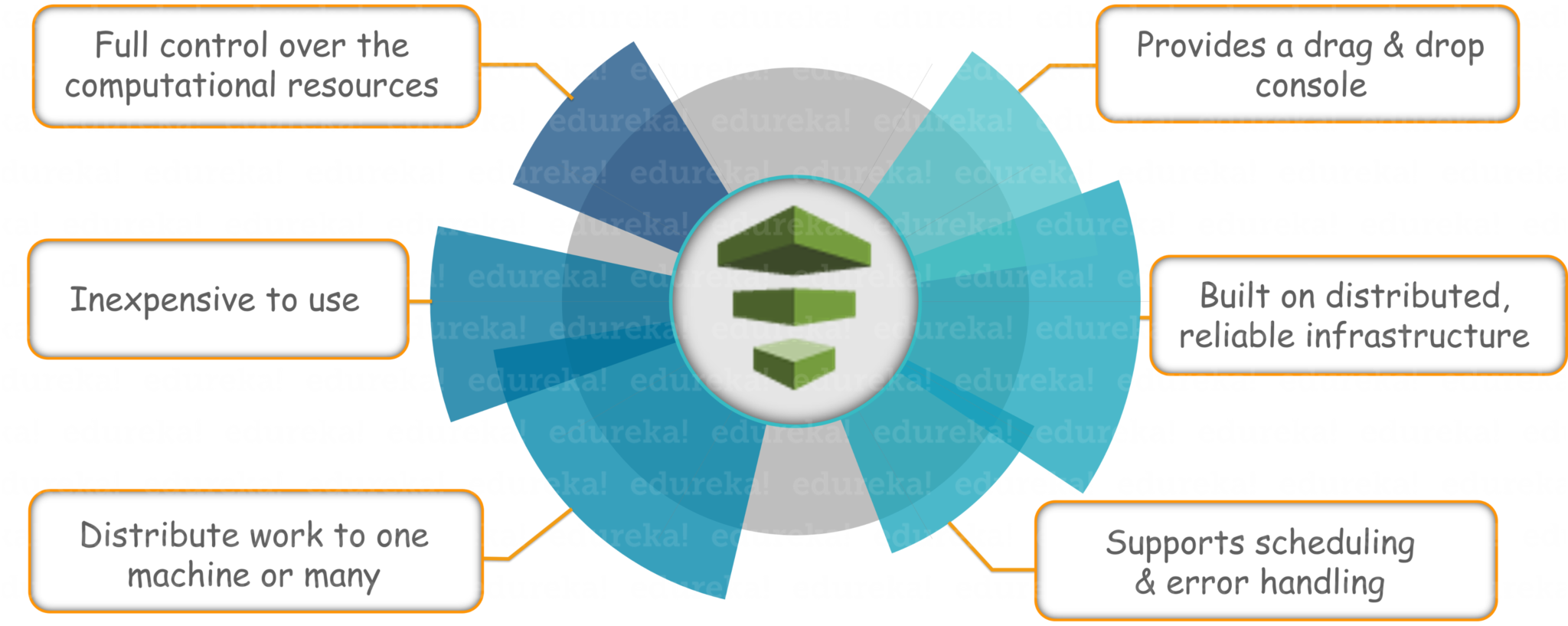

Benefits of AWS Data Pipeline

- Provides a drag-and-drop console within the AWS interface

- AWS Data Pipeline is built on a distributed, highly available infrastructure designed for fault tolerant execution of your activities

- It provides a variety of features such as scheduling, dependency tracking, and error handling

- AWS Data Pipeline makes it equally easy to dispatch work to one machine or many, in serial or parallel

- AWS Data Pipeline is inexpensive to use and is billed at a low monthly rate

- Offers full control over the computational resources that execute your data pipeline logic

So, with benefits out of the way, let’s take a look at different components of AWS Data Pipeline & how they work together to manage your data.

Components of AWS Data Pipeline

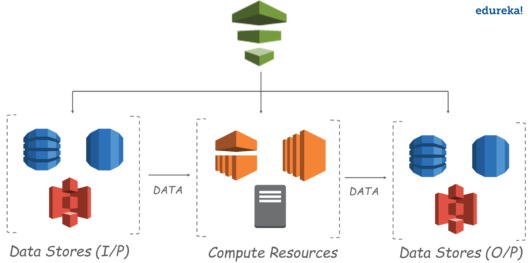

AWS Data Pipeline is a web service that you can use to automate the movement and transformation of data. You can define data-driven workflows so that tasks can be dependent on the successful completion of previous tasks. You define the parameters of your data transformations and AWS Data Pipeline enforces the logic that you’ve set up.

You begin designing a pipeline by selecting the data nodes. AWS Data Pipeline then works with compute services to transform the data. This step often generates a lot of additional data, so you can optionally add output data nodes where the results of the transformation are stored & accessed.

Data Nodes: In AWS Data Pipeline, a data node defines the location and type of data that a pipeline activity uses as input or output. It supports data nodes like:

- DynamoDBDataNode

- SqlDataNode

- RedshiftDataNode

- S3DataNode
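
To make this concrete, here is a minimal Python sketch of how two of these data nodes might be written as objects in a pipeline definition. The ids, the bucket path, and the table name are placeholders invented for illustration, and the field names (`directoryPath`, `tableName`) should be checked against the AWS Data Pipeline object reference.

```python
import json

# Placeholder values: "example-bucket" and "ExampleTable" are not real resources.
source_table = {
    "id": "SourceTable",
    "name": "SourceTable",
    "type": "DynamoDBDataNode",
    "tableName": "ExampleTable",
}
export_folder = {
    "id": "ExportFolder",
    "name": "ExportFolder",
    "type": "S3DataNode",
    "directoryPath": "s3://example-bucket/exports/",
}

# A pipeline definition file groups its objects under an "objects" list.
definition = {"objects": [source_table, export_folder]}
print(json.dumps(definition, indent=2))
```

Each data node only describes where data lives; it does nothing until an activity uses it as input or output.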

Now, let’s consider a real-world example to understand the other components.

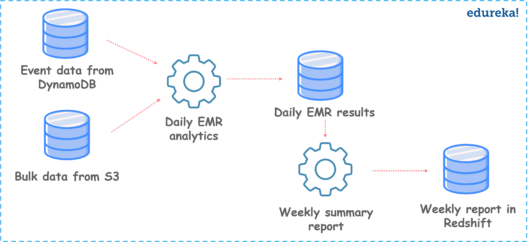

Use Case: Collect data from different data sources, perform Amazon Elastic MapReduce (EMR) analysis & generate weekly reports.

In this use case, we are designing a pipeline to extract data from data sources like Amazon S3 & DynamoDB, perform EMR analysis daily & generate weekly reports on the data.

The operations in this use case (extracting the data, performing the EMR analysis, and generating the reports) are called activities. Optionally, we can add preconditions that must be met before these activities run.

Activities: An activity is a pipeline component that defines the work to perform on schedule using a computational resource and typically input and output data nodes. Examples of activities are:

- Moving data from one location to another

- Running Hive queries

- Generating Amazon EMR reports
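
As a rough sketch of how an activity ties the pieces together, the Python snippet below writes a `CopyActivity` in the shape used by pipeline definition files, where one object points at another through a `{"ref": ...}` value. All ids, S3 paths, and the instance type are placeholders, and `unresolved_refs` is a hypothetical helper written for this example, not part of any AWS library.

```python
# Illustrative pipeline objects; ids, paths, and instance type are placeholders.
objects = [
    {"id": "InputData", "type": "S3DataNode",
     "filePath": "s3://example-bucket/input/data.csv"},
    {"id": "OutputData", "type": "S3DataNode",
     "filePath": "s3://example-bucket/output/data.csv"},
    {"id": "WorkerInstance", "type": "Ec2Resource",
     "instanceType": "t1.micro", "terminateAfter": "1 Hour"},
    {"id": "CopyData", "type": "CopyActivity",
     "input": {"ref": "InputData"},
     "output": {"ref": "OutputData"},
     "runsOn": {"ref": "WorkerInstance"}},
]


def unresolved_refs(pipeline_objects):
    """Return (object id, missing id) pairs for references that point nowhere."""
    known_ids = {obj["id"] for obj in pipeline_objects}
    missing = []
    for obj in pipeline_objects:
        for value in obj.values():
            if isinstance(value, dict) and "ref" in value:
                if value["ref"] not in known_ids:
                    missing.append((obj["id"], value["ref"]))
    return missing


print(unresolved_refs(objects))  # prints []
```

Checking references locally like this is only a convenience; the service performs its own validation when a definition is uploaded.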

Preconditions: A precondition is a pipeline component containing conditional statements that must be true before an activity can run.

- Check whether source data is present before a pipeline activity attempts to copy it

- Check whether a given database table exists
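
The two checks above could be expressed roughly as follows. This is a sketch that assumes the `S3KeyExists` and `DynamoDBTableExists` precondition types; the ids, the S3 key, and the table name are placeholders, and a real definition may need further fields (such as an IAM role) that are omitted here.

```python
# Placeholder ids, key, and table name; not real resources.
source_file_ready = {
    "id": "SourceFileReady",
    "type": "S3KeyExists",
    "s3Key": "s3://example-bucket/input/data.csv",
}
table_ready = {
    "id": "TableReady",
    "type": "DynamoDBTableExists",
    "tableName": "ExampleTable",
}

# The activity names the precondition it waits for through a reference.
copy_activity = {
    "id": "CopyData",
    "type": "CopyActivity",
    "precondition": {"ref": source_file_ready["id"]},
}
```

With this wiring, the copy is not attempted until the source key is found.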

Resources: A resource is a computational resource that performs the work that a pipeline activity specifies.

- An EC2 instance that performs the work defined by a pipeline activity

- An Amazon EMR cluster that performs the work defined by a pipeline activity

Finally, we have a component called actions.

Actions: Actions are steps that a pipeline component takes when certain events occur, such as success, failure, or late activities.

- Send an SNS notification to a topic based on success, failure, or late activities

- Trigger the cancellation of a pending or unfinished activity, resource, or data node
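
A notification action might be attached to an activity along these lines. The sketch assumes the `SnsAlarm` object type and the `onSuccess`, `onFail`, and `onLateAction` fields; the topic ARN, the ids, and the `attach_action` helper are hypothetical and exist only for this example.

```python
# The events an action can be attached to, as described above.
ALLOWED_EVENTS = {"onSuccess", "onFail", "onLateAction"}

# Placeholder ARN and text; the role name is the service's default role.
failure_alarm = {
    "id": "FailureAlarm",
    "type": "SnsAlarm",
    "topicArn": "arn:aws:sns:us-east-1:123456789012:example-topic",
    "subject": "Pipeline activity failed",
    "message": "The copy activity did not complete.",
    "role": "DataPipelineDefaultRole",
}


def attach_action(activity, event, action_id):
    """Return a copy of the activity with an action wired to the given event."""
    if event not in ALLOWED_EVENTS:
        raise ValueError(f"unknown event: {event}")
    updated = dict(activity)
    updated[event] = {"ref": action_id}
    return updated


activity = attach_action(
    {"id": "CopyData", "type": "CopyActivity"}, "onFail", failure_alarm["id"]
)
print(activity["onFail"])  # prints {'ref': 'FailureAlarm'}
```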

Now that you have the basic idea of AWS Data Pipeline & its components, let’s see how it works.

Demo on AWS Data Pipeline

In this demo part of the AWS Data Pipeline Tutorial, we are going to see how to copy the contents of a DynamoDB table to an S3 bucket. AWS Data Pipeline triggers an action to launch an EMR cluster with multiple EC2 instances (make sure to terminate them after you are done to avoid charges). The EMR cluster picks up the data from DynamoDB and writes it to the S3 bucket.

Creating an AWS Data Pipeline

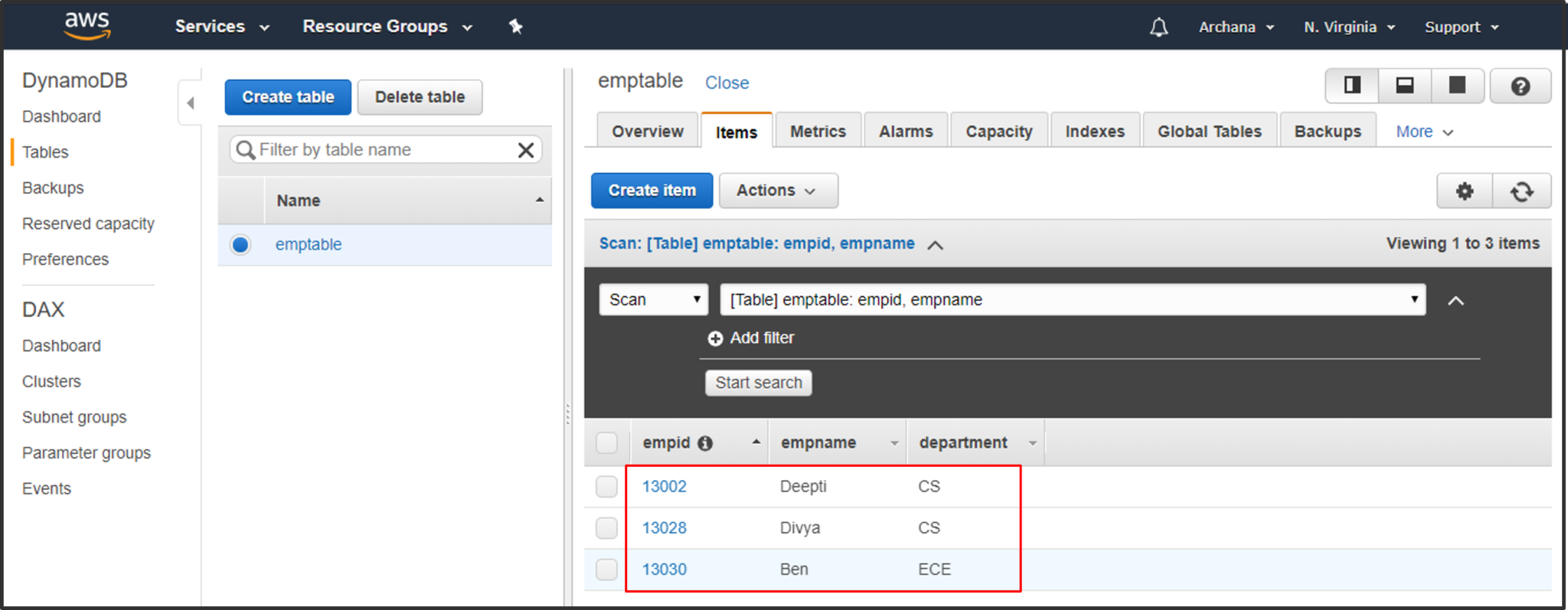

Step1: Create a DynamoDB table with sample test data.

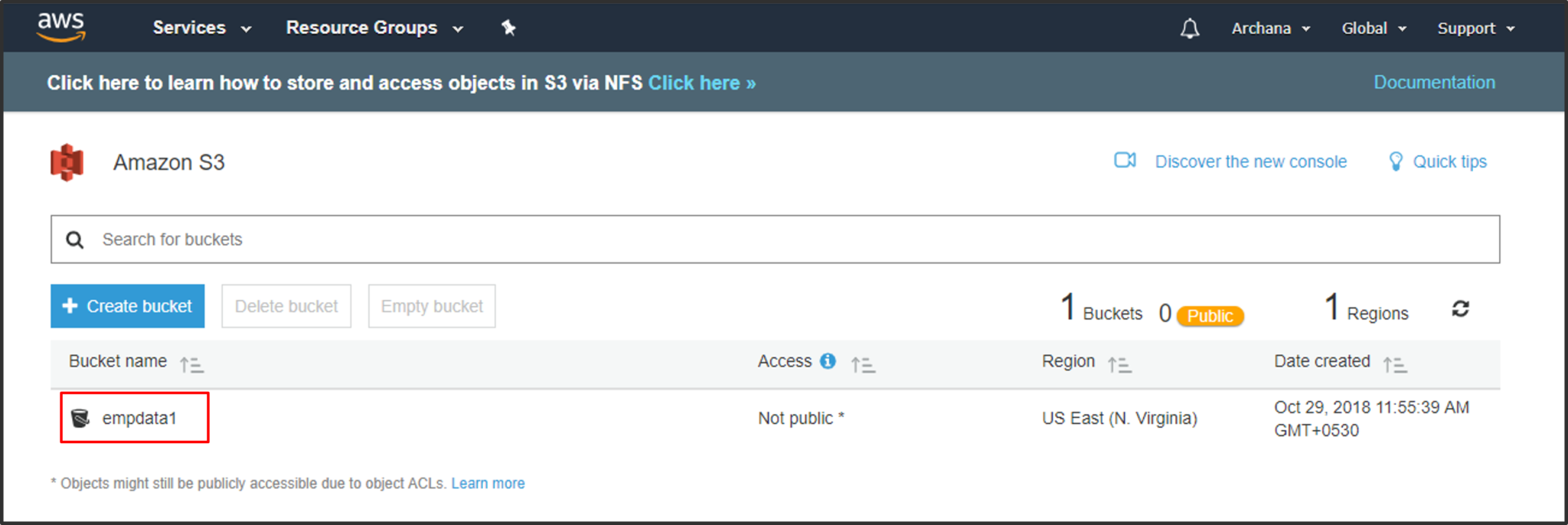

Step2: Create an S3 bucket for the DynamoDB table’s data to be copied to.

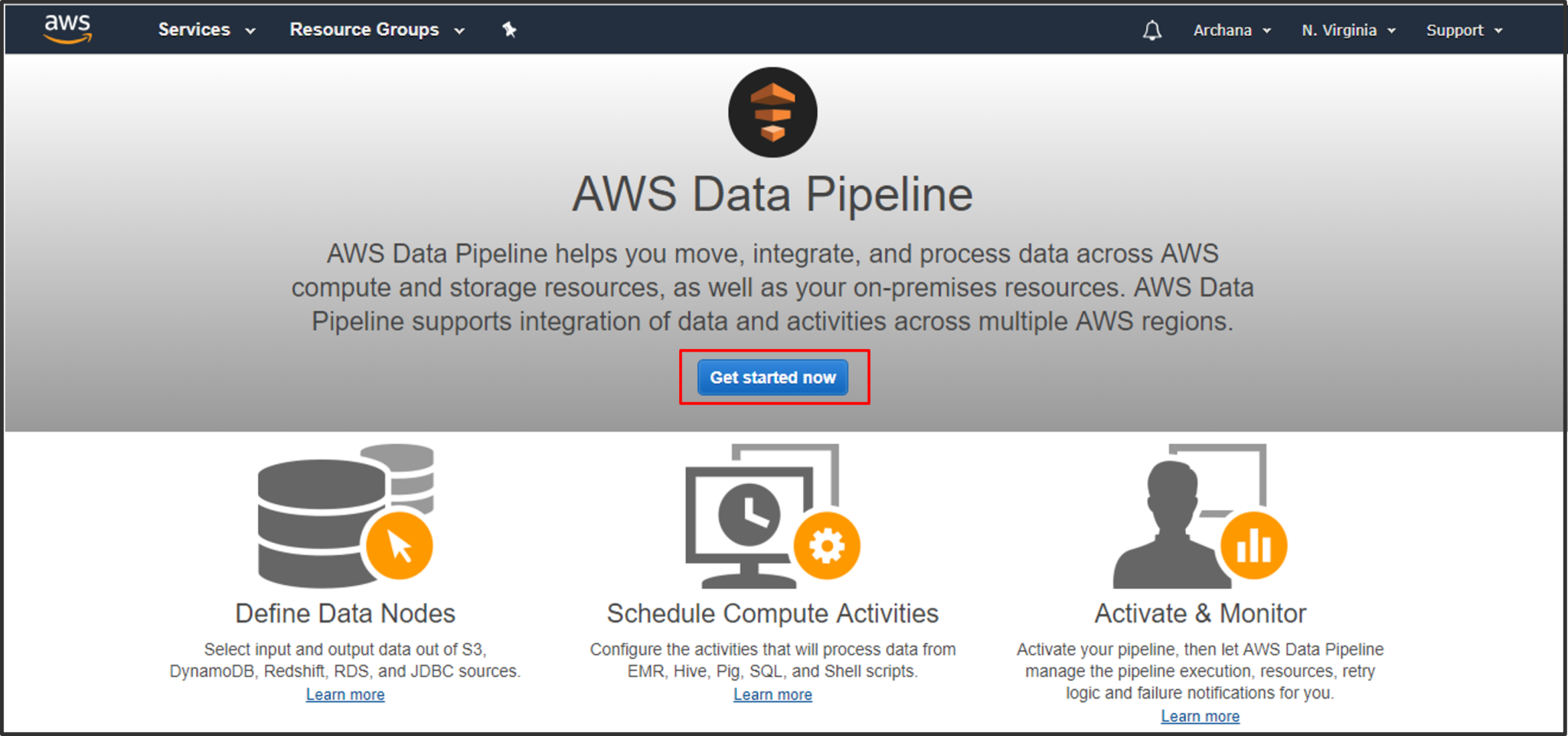

Step3: Access the AWS Data Pipeline console from your AWS Management Console & click on Get Started to create a data pipeline.

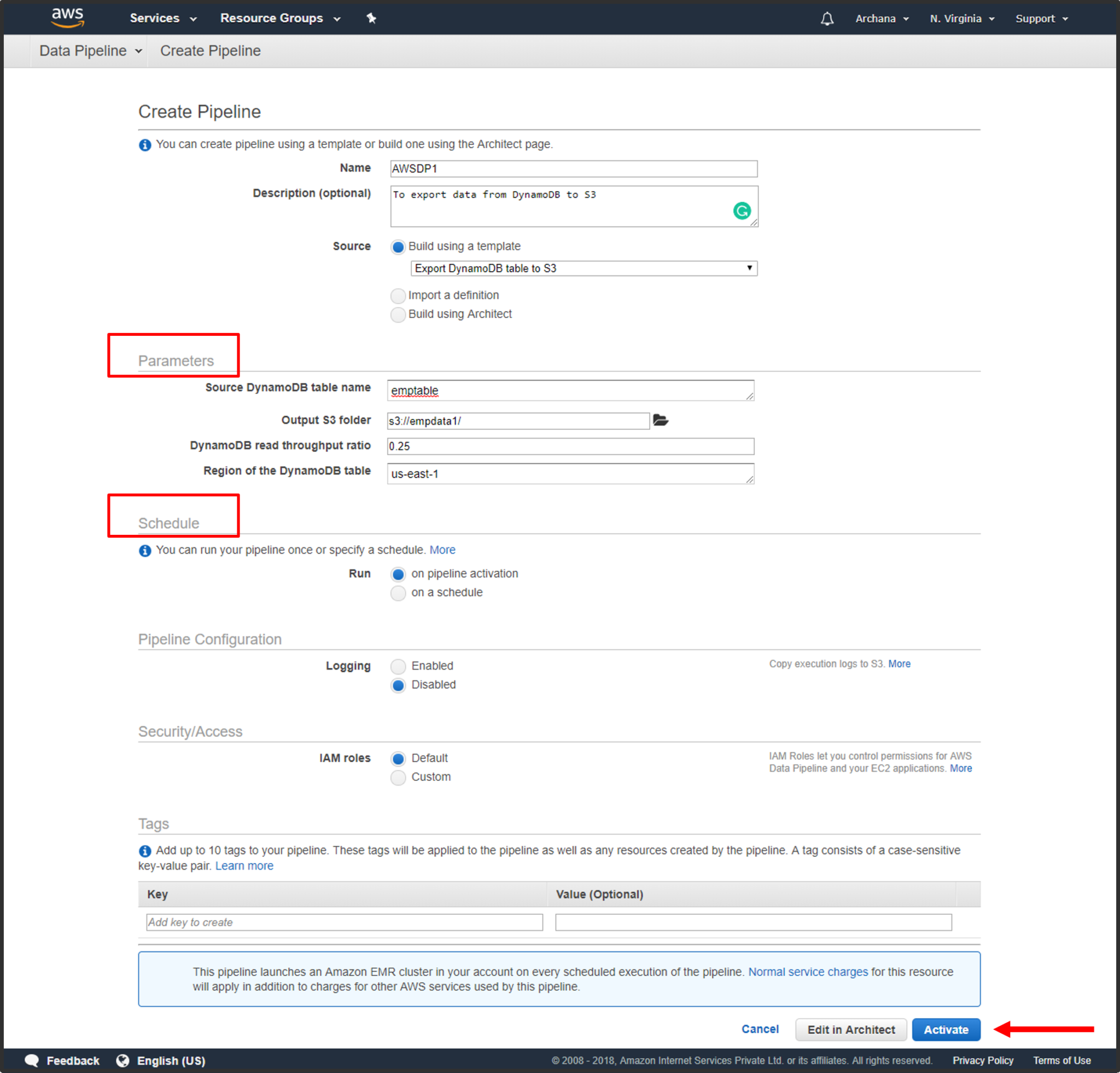

Step4: Create a data pipeline. Give your pipeline a suitable name & an appropriate description. Specify the source & destination data node paths. Schedule your data pipeline & click on Activate.
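
The same create, define, and activate sequence can also be scripted. The following is a minimal sketch using the boto3 `datapipeline` client, not the exact pipeline the console template builds. The `to_pipeline_objects` and `create_and_activate` helpers, the default region, and the object contents passed in are assumptions made for illustration.

```python
def to_pipeline_objects(definition_objects):
    """Convert definition-file style dicts into the API's pipelineObjects shape."""
    api_objects = []
    for obj in definition_objects:
        fields = []
        for key, value in obj.items():
            if key in ("id", "name"):
                continue
            if isinstance(value, dict) and "ref" in value:
                # References to other objects use refValue.
                fields.append({"key": key, "refValue": value["ref"]})
            else:
                fields.append({"key": key, "stringValue": str(value)})
        api_objects.append(
            {"id": obj["id"], "name": obj.get("name", obj["id"]), "fields": fields}
        )
    return api_objects


def create_and_activate(name, unique_id, definition_objects, region="us-east-1"):
    """Create a pipeline, upload its definition, and activate it."""
    import boto3  # imported here so the converter above works without boto3

    client = boto3.client("datapipeline", region_name=region)
    pipeline_id = client.create_pipeline(name=name, uniqueId=unique_id)["pipelineId"]
    result = client.put_pipeline_definition(
        pipelineId=pipeline_id,
        pipelineObjects=to_pipeline_objects(definition_objects),
    )
    if result["errored"]:
        raise RuntimeError(result["validationErrors"])
    client.activate_pipeline(pipelineId=pipeline_id)
    return pipeline_id
```

Calling `create_and_activate` needs AWS credentials and launches billable resources, so it is best tried against a throwaway table and bucket.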

Monitoring & Testing

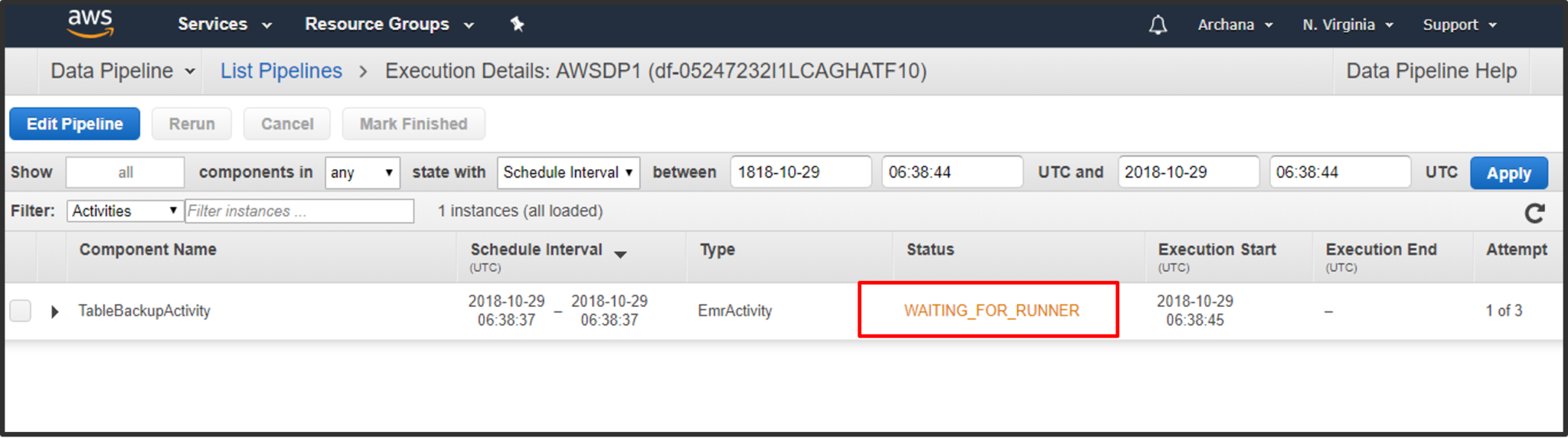

Step5: On the List Pipelines page, you can see the status as “WAITING FOR RUNNER”.

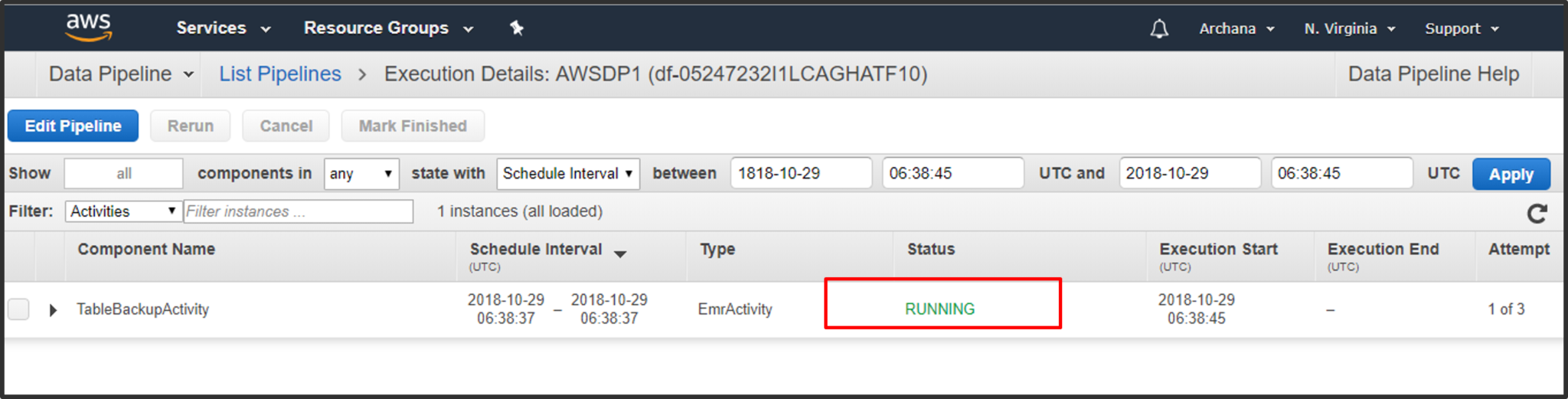

Step6: After a few minutes, you can see that the status has changed to “RUNNING”. At this point, if you go to the EC2 console, you can see two new instances created automatically. They belong to the EMR cluster launched by the pipeline.
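
Instead of refreshing the console, the state can be polled from a script. This sketch assumes the pipeline-level state is reported under the `@pipelineState` field of `describe_pipelines`; the helper names and the polling interval are hypothetical, and the state names returned by the API may differ from the labels shown in the console.

```python
import time


def pipeline_state(description):
    """Pull the state string out of one pipeline description, if present."""
    for field in description.get("fields", []):
        if field.get("key") == "@pipelineState":
            return field.get("stringValue")
    return None


def wait_until(fetch_state, target_states, interval_seconds=30, max_polls=40,
               sleep=time.sleep):
    """Call fetch_state repeatedly until it returns one of target_states."""
    state = None
    for _ in range(max_polls):
        state = fetch_state()
        if state in target_states:
            return state
        sleep(interval_seconds)
    raise TimeoutError(f"last state seen: {state}")


def boto3_fetcher(pipeline_id, region="us-east-1"):
    """Build a fetch_state callable backed by the boto3 datapipeline client."""
    import boto3  # imported here so the helpers above work without boto3

    client = boto3.client("datapipeline", region_name=region)

    def fetch():
        response = client.describe_pipelines(pipelineIds=[pipeline_id])
        return pipeline_state(response["pipelineDescriptionList"][0])

    return fetch
```

A call such as `wait_until(boto3_fetcher(pipeline_id), {"FINISHED"})` would then block until the pipeline reports that state or the poll limit is reached.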

Step7: After the pipeline finishes, open the S3 bucket and check that the .txt file has been created. It contains the DynamoDB table’s contents. Download it and open it in a text editor.

So, now you know how to use AWS Data Pipeline to export data from DynamoDB. Similarly, by reversing source & destination you can import data to DynamoDB from S3.

Go ahead and explore!

