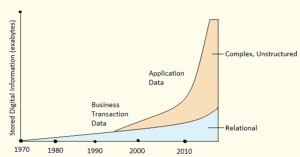

Apache Hadoop is quickly becoming the technology of choice for organizations investing in big data, powering their next generation data architecture. With Hadoop serving as both a scalable data platform and computational engine, data science is re-emerging as a centerpiece of enterprise innovation, with applied data solutions such as online product recommendation, automated fraud detection and customer sentiment analysis.

In this article, we provide an overview of data science and how to take advantage of Hadoop for large scale data science projects.

How is Hadoop Useful to Data Scientists?

Hadoop is a boon to data scientists. Let’s look at how Hadoop helps in boosting productivity of Data Scientists. Hadoop has a unique capability where all the data can be stored and retrieved from a single place. Through this manner, the following can be achieved:

- Ability to store all data in the RAW format

- Data Silo Convergence

- Data Scientists will find innovative uses of combined data assets.

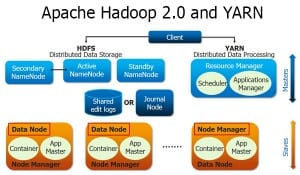

Key to Hadoop’s Power:

- Reducing Time and Cost – Hadoop helps in dramatically reducing the Time and Cost of building large scale data products.

- Computation is co-located with Data – Data and Computation system is codesigned to work together.

- Affordable at Scale – Can use ‘commodity’ hardware nodes, is self-healing, excellent at batch processing of large datasets.

- Designed for one write and multiple reads – There are no random Writes and is Optimized for minimum seek on hard drives

Become a master of data architecture and shape the future with our comprehensive Data Architect Certification.

Why Hadoop With Data Science?

Reason#1: Explore Large Datasets

The First and foremost reason being one can Explore Large Datasets directly with Hadoop by integrating Hadoop in the Data Analysis flow.

This is achieved by utilizing simple statistics like:

- Mean

- Median

- Quantile

- Pre-processing: grep, regex

One can also use Ad-hoc Sampling /filtering to achieve Random: with or without Replacement, Sample by unique Key and K-fold Cross-validation.

Reason#2: Ability to Mine Large Datasets

Learning algorithms with large datasets has its own challenges. The challenges being:

- Data won’t fit in memory.

- Learning takes a lot longer time.

When using Hadoop one can perform functions like distribute data across nodes in the Hadoop cluster and implement a distributed/parallel algorithm. For recommendations, one can Alternate Least Square algorithm and for clustering K-Means can be used.

Reason#3: Large Scale Data Preparation

We all know 80% of Data Science Work involves ‘Data Preparation’. Hadoop is ideal for batch preparation and cleanup of large Datasets.

Reason#4: Accelerate Data Driven Innovation:

Traditional data architectures have barriers to speed. RDBMS uses schema on Write and therefore change is expensive. It’s also a high barrier for data-driven innovation.

Hadoop uses “Schema on Read” which means faster time to Innovation and thus adds a low barrier on data driven innovation.

Therefore to summarize the four main reasons why we need Hadoop with Data Science would be:

- Mine Large Datasets

- Data Exploration with full datasets

- Pre-Processing At Scale

- Faster Data Driven Cycles

We, therefore, see that Organizations can leverage Hadoop to their advantage for mining data and gathering useful results from it.

Edureka has a specially curated Data Science Course Online that helps you gain expertise in Machine Learning Algorithms like K-Means Clustering, Decision Trees, Random Forest, and Naive Bayes. You’ll learn the concepts of Statistics, Time Series, Text Mining and an introduction to Deep Learning as well. You’ll solve real-life case studies on Media, Healthcare, Social Media, Aviation, HR. New batches for this course are starting soon!!

Also, If you are Ready to supercharge your career in data science then don’t miss out on the opportunity to earn your Data Science with Python Certification. With this certification, you’ll gain the skills and knowledge needed to excel in the world of data analysis, machine learning, and predictive modeling. Take the first step toward a brighter future in data science – enroll now, study diligently, and earn your certification to open doors to exciting career opportunities. Get started today and unlock your potential in the data-driven world!

Got a question for us?? Please mention them in the comments section and we will get back to you.